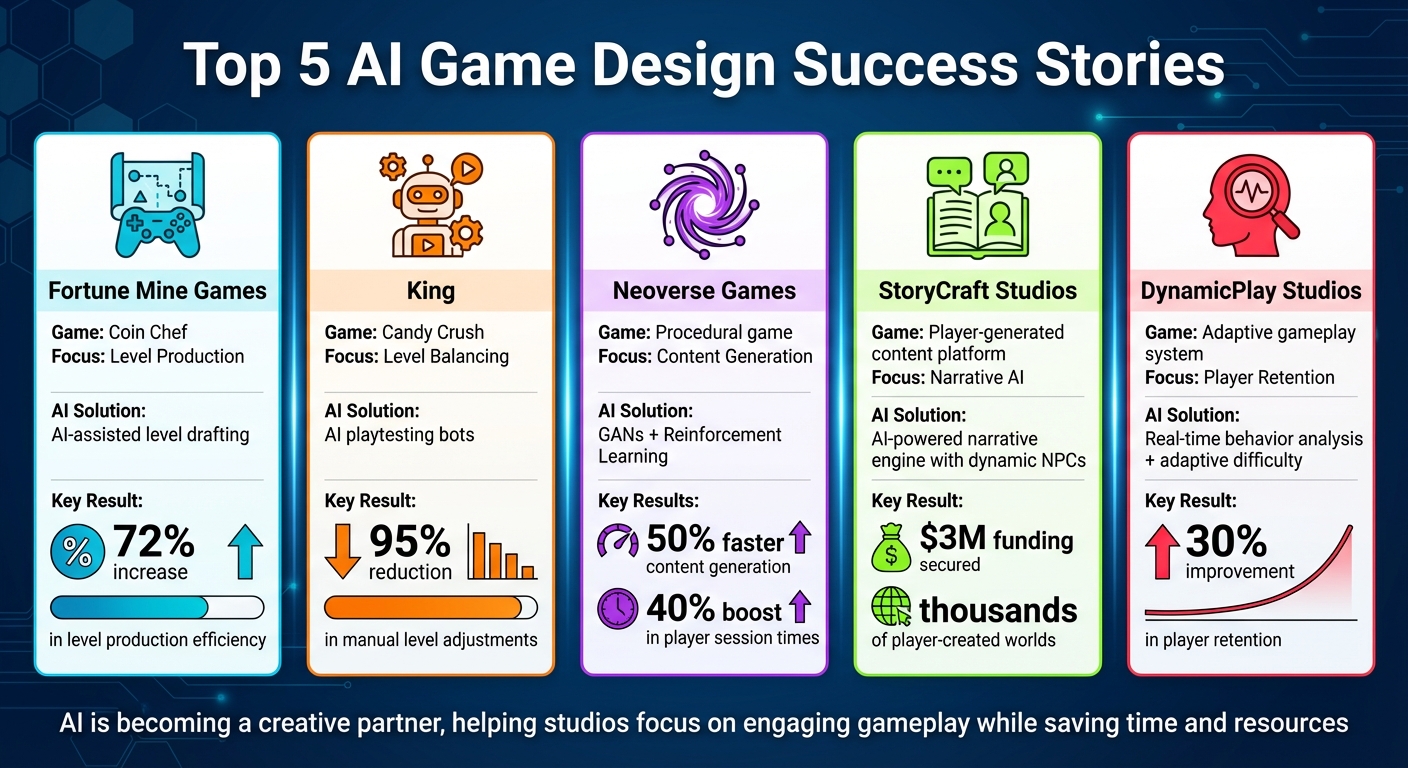

AI is reshaping game development by accelerating workflows, improving player engagement, and introducing dynamic systems that were once unimaginable. From level design to narrative creation, studios are using AI to automate repetitive tasks and refine gameplay experiences. Here’s a quick look at five standout examples:

- Fortune Mine Games: Increased level production efficiency by 72% for Coin Chef through AI-assisted level drafting.

- King: Reduced manual level adjustments by 95% in Candy Crush using AI playtesting bots.

- Neoverse Games: Achieved 50% faster content generation with AI-driven procedural level design, boosting player session times by 40%.

- StoryCraft Studios: Enabled players to create thousands of worlds and dynamic NPCs with AI-powered narrative tools, securing $3M in funding.

- DynamicPlay Studios: Improved player retention by 30% with AI systems that analyze behavior and adjust difficulty in real time.

AI isn’t replacing developers - it’s becoming a partner, helping studios focus on what makes games engaging while saving time and resources. Below, we dive into how these innovations are transforming the industry.

AI Game Design Success Stories: 5 Studios' Results Comparison

Neoverse Games: AI-Powered Procedural Content Generation

Problems in Procedural Content Creation

Procedural content creation has long relied on algorithms like Perlin Noise, which excel at filling spaces with geometry but often result in environments that feel random and disconnected. This lack of cohesion makes it hard to create immersive experiences. The bigger hurdle? Personalization. Static, pre-designed levels can't adapt to individual players' skills or play styles, leaving modern gamers wanting more tailored experiences. Trying to manually create enough variations to satisfy these expectations would demand an enormous team and budget - neither of which is practical for most studios.

AI Implementation and Results

Neoverse Games adopted "Smart AI" level design, utilizing GANs (Generative Adversarial Networks) and Reinforcement Learning to refine their approach to gameplay structure. Instead of merely generating terrain, their AI focuses on gameplay mechanics first - designing keys, locks, and progression loops. This method ensures that every environment feels purposeful, with cyclic dungeon generation solving the issue of fragmented and impersonal level design.
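A generator that designs keys and locks first needs some way to confirm that the keys can actually be reached before the doors they open. The sketch below shows one minimal check of that kind. It is not Neoverse's implementation: the room dictionary layout, the `is_solvable` name, and the sample dungeon are all assumptions made for illustration.

```python
def is_solvable(rooms, start, goal):
    """Return True if `goal` can be reached from `start`, collecting keys on the way.

    rooms maps a room name to {"key": key_name_or_None,
                               "doors": [(neighbor, required_key_or_None), ...]}.
    """
    keys = set()
    reachable = set()
    changed = True
    # Re-run the traversal whenever a new key is found, because a door that was
    # skipped earlier in the pass may now be openable.
    while changed:
        changed = False
        reachable = set()
        stack = [start]
        while stack:
            room = stack.pop()
            if room in reachable:
                continue
            reachable.add(room)
            key = rooms[room]["key"]
            if key is not None and key not in keys:
                keys.add(key)
                changed = True
            for neighbor, required_key in rooms[room]["doors"]:
                if required_key is None or required_key in keys:
                    stack.append(neighbor)
    return goal in reachable


# Hypothetical four-room dungeon: the vault door needs the red key from the library.
dungeon = {
    "entrance": {"key": None, "doors": [("hall", None)]},
    "hall": {"key": None, "doors": [("library", None), ("vault", "red")]},
    "library": {"key": "red", "doors": [("hall", None)]},
    "vault": {"key": None, "doors": []},
}

print(is_solvable(dungeon, "entrance", "vault"))
```

A generator could reject or repair any layout where this check fails, so that only dungeons with a working progression loop reach the player.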

To further enhance their process, the studio incorporated Wave Function Collapse (WFC), a constraint-based method that places each tile only where it is compatible with its neighbors, which the studio used to keep procedurally generated maps connected and functional. In their pipeline, this resolved the problem of broken paths. Additionally, their AI tracks player behavior in real time, monitoring movement patterns, attack frequency, and death locations. This data allows the system to dynamically adjust both the difficulty and layout of the environment to match the player's actions [10, 11].
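One simple way to verify the "always connected" property on a finished map is a flood fill over the walkable tiles. The sketch below is that check only. It is not WFC itself and not the studio's code, and the grid encoding (`.` for floor, `#` for wall) and the `is_fully_connected` name are assumptions.

```python
from collections import deque


def is_fully_connected(grid):
    """Return True if every floor tile ('.') can be reached from every other one."""
    floors = [(r, c) for r, row in enumerate(grid)
              for c, cell in enumerate(row) if cell == "."]
    if not floors:
        return True  # no walkable tiles, so nothing can be cut off

    seen = {floors[0]}
    queue = deque([floors[0]])
    while queue:
        r, c = queue.popleft()
        # Visit the four orthogonal neighbors.
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < len(grid) and 0 <= nc < len(grid[nr])
                    and grid[nr][nc] == "." and (nr, nc) not in seen):
                seen.add((nr, nc))
                queue.append((nr, nc))
    return len(seen) == len(floors)


connected_map = ["..#",
                 ".##",
                 "..."]
broken_map = [".#.",
              "###",
              ".#."]

print(is_fully_connected(connected_map), is_fully_connected(broken_map))
```

Running a check like this after generation lets a pipeline discard or regenerate any map with unreachable areas before a player ever sees it.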

The results speak for themselves. Content generation became 50% faster, and players spent 40% more time engaged in each session. Other studios using similar AI-driven systems have also seen significant benefits, reporting time savings of up to 40% during the pre-visualization phase. Designers now act as curators, using AI to interpret narrative goals, mood, and pacing, rather than manually constructing every detail.


StoryCraft Studios: AI-Powered Narrative Engine

Building Narrative Depth with AI

StoryCraft Studios tackled a major challenge in gaming: how to create personalized, engaging stories without requiring massive budgets or large development teams. Traditional game narratives rely heavily on manual writing and coding, which limits their scope. To overcome this, StoryCraft embraced Player Generated Content (PGC) powered by AI.

Their narrative engine allows players to transform simple text descriptions into fully realized in-game assets. For instance, players can describe various game elements, and the AI instantly generates detailed versions of those elements. But the game-changing feature lies in their dynamic NPCs (non-playable characters). These aren't your typical scripted characters with fixed dialogue trees. Instead, they're AI-driven personalities with unique appearances and evolving traits. Players can have unscripted conversations with these NPCs, forming relationships that grow into friendships, rivalries, or even partnerships.

The AI also acts as a creative collaborator, stepping in when players hit a creative block. NPCs actively request tasks or items, sparking new ideas and interactions. StoryCraft calls this an "endless well of inspiration". By applying principles of narratology, they ensure that the stories remain emotionally engaging and cohesive, avoiding the disconnected feel often associated with procedurally generated content. This approach has created a richer narrative experience, drawing players deeper into their crafted worlds.

Impact on Player Engagement

These innovations have had a profound effect on player engagement. Since the open alpha launch, players have created thousands of worlds and generated hundreds of thousands of characters, with some spending hundreds of hours exploring different genres. The studio's success has also caught the attention of investors, securing $3 million in seed funding from Khosla Ventures, following an earlier $2 million pre-seed round.

Vinod Khosla remarked: "AI enables anyone to be both a consumer and a creator. Storycraft introduces a new kind of social gameplay, where players can build their own characters and interact with them in their own digital worlds".

CEO Andy Mauro highlighted the platform's creative potential: "Our early players have shown us that creating characters, worlds, and stories with AI is so much more than just a better version of a community crafting game. It's actually reigniting a creative spark players thought had gone out".

DynamicPlay Studios: Adaptive Difficulty and Scenario Generation

AI for Real-Time Player Behavior Analysis

DynamicPlay Studios has taken a bold step in advancing gameplay with real-time adaptive systems. By leveraging telemetry data, the studio tracks key player behaviors such as movement, combat accuracy, deaths, exploration habits, and in-game spending. This data allows the AI to classify players into categories like Explorers, Achievers, Combat Players, or Social Players. Using this classification, the studio developed a system called "Runtime World Mutation", which adjusts the game environment dynamically based on player preferences. For instance, a stealth-focused player might encounter more cover-based areas, while a combat-oriented player faces challenges tailored to their playstyle. This process operates on a continuous "Observe → Analyze → Predict → Adapt" loop, seamlessly running in the background without disrupting gameplay.
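To make the classification step more concrete, here is a rough sketch of how telemetry could be mapped to one of the four player types and then to a world adjustment. Everything specific in it is hypothetical: the `Telemetry` fields, the scoring weights, and the `ADAPTATIONS` table are illustrative stand-ins, not DynamicPlay's actual model.

```python
from dataclasses import dataclass


@dataclass
class Telemetry:
    map_explored: float      # share of the map visited, 0.0 to 1.0 (assumed metric)
    combat_encounters: int   # fights started this session (assumed metric)
    quests_completed: int    # objectives finished this session (assumed metric)
    chat_messages: int       # messages sent to other players (assumed metric)


def classify(t: Telemetry) -> str:
    """Analyze: score each player type and return the highest-scoring one."""
    # The weights are arbitrary illustration values, not tuned numbers.
    scores = {
        "Explorer": t.map_explored * 100,
        "Combat Player": t.combat_encounters * 2,
        "Achiever": t.quests_completed * 5,
        "Social Player": t.chat_messages * 1,
    }
    return max(scores, key=scores.get)


# Adapt: a hypothetical mapping from player type to a world change.
ADAPTATIONS = {
    "Explorer": "spawn hidden areas",
    "Combat Player": "add tougher enemy patrols",
    "Achiever": "offer bonus objectives",
    "Social Player": "surface co-op events",
}


def adapt(t: Telemetry) -> str:
    """Observe -> Analyze -> Adapt for a single telemetry snapshot."""
    return ADAPTATIONS[classify(t)]


print(adapt(Telemetry(map_explored=0.9, combat_encounters=3,
                      quests_completed=2, chat_messages=5)))
```

A production system would likely replace the hand-written scores with a trained model and add the "Predict" step, but the overall shape of the loop stays the same.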

The system employs what the developers call "invisible adaptation." The idea is to subtly adjust the game without making it obvious to the player, preserving immersion and engagement.

Results and Player Retention Impact

These adaptive gameplay features have had a measurable impact on player retention and revenue. By customizing challenges to suit individual skill levels, DynamicPlay Studios achieved a 30% increase in player retention. A related study conducted in June 2025 by Yurii Sulyma, Lead Unity Developer at Cubic Games, explored the use of dynamic difficulty algorithms. His team implemented a system that gradually reduced difficulty for "at-risk" players on a nightly basis. The results? A 3-percentage-point boost in 30-day retention and an $0.08 increase in lifetime value (LTV) per user. On average, players stayed for one additional day and completed ten more game rounds per month.

"Traditional static difficulty curves give rise to the 'difficulty paradox' - boredom or frustration that accelerates churn", explained Yurii Sulyma.
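The nightly easing for "at-risk" players can be pictured as a small batch job. The sketch below assumes each player already has a churn-risk score from some other model. The function name, the step size, the difficulty floor, and the risk threshold are all made-up parameters, not values from the Cubic Games study.

```python
def nightly_adjust(players, step=0.05, floor=0.5, risk_threshold=0.7):
    """Lower difficulty slightly for at-risk players and leave everyone else alone.

    players maps player_id -> (difficulty_multiplier, churn_risk), where
    churn_risk is a 0.0 to 1.0 score assumed to come from a separate model.
    Returns a new dict of player_id -> difficulty_multiplier.
    """
    updated = {}
    for player_id, (difficulty, churn_risk) in players.items():
        if churn_risk >= risk_threshold:
            # Ease off by one small step per night, never dropping below the floor.
            difficulty = max(floor, difficulty - step)
        updated[player_id] = round(difficulty, 2)
    return updated


tonight = {
    "alice": (1.0, 0.9),   # at risk: eased by one step
    "bob": (1.0, 0.2),     # engaged: unchanged
    "cara": (0.52, 0.8),   # at risk, but clamped at the floor
}
print(nightly_adjust(tonight))
```

Keeping the step small and clamping at a floor is what makes the reduction gradual: a struggling player gets a little relief each night rather than a sudden drop they would notice.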

Further studies using machine learning models for Dynamic Difficulty Adjustment (DDA) revealed even more promising results. These models improved player retention by up to 20%. The financial upside was significant as well, with 79% of the increased revenue coming from in-app purchases and 21% from advertising. Predictive modeling, which anticipates when a player might lose interest or quit, not only enhances the player experience but also delivers real business value.

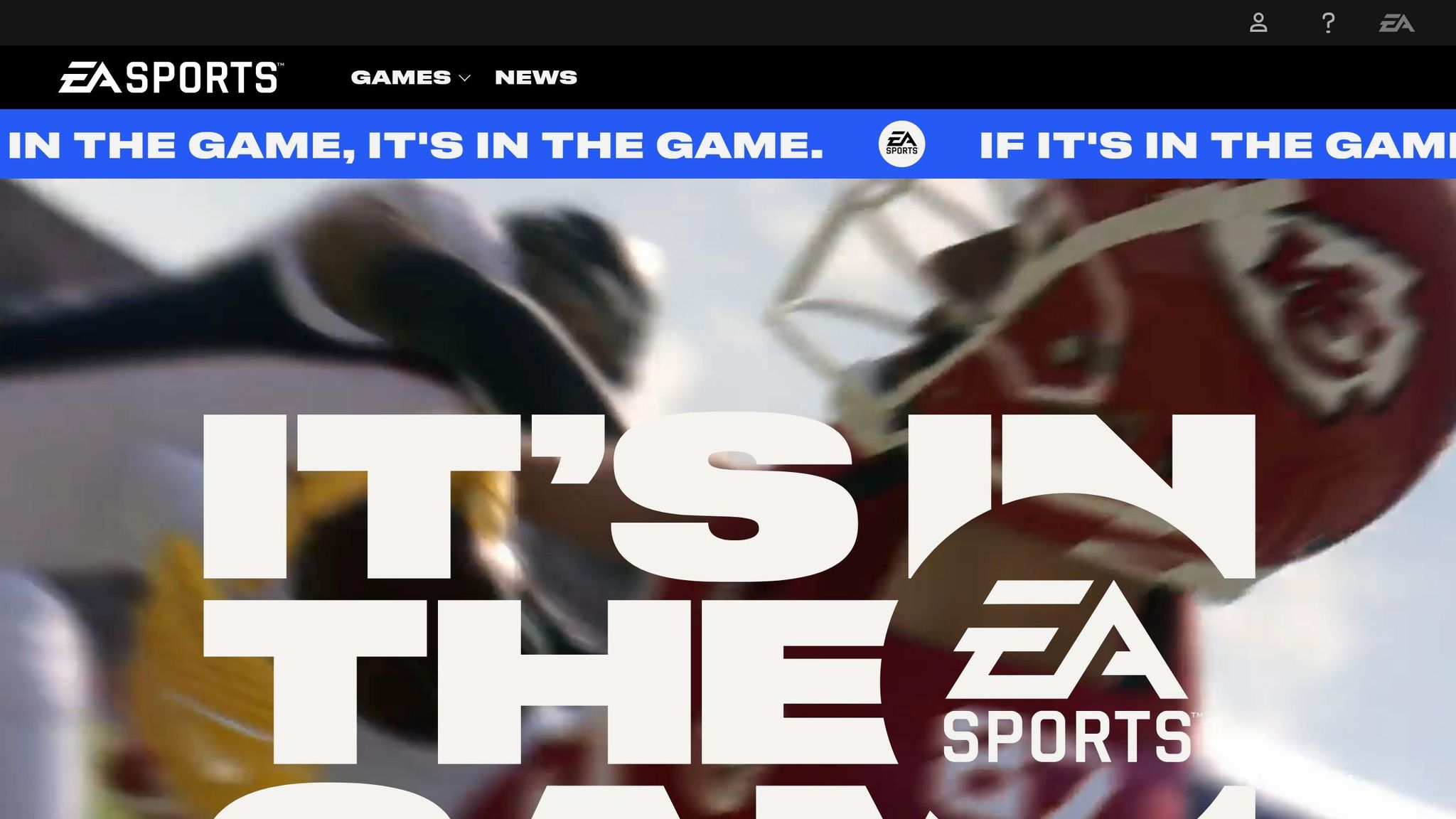

EA Sports: HyperMotion Technology in FIFA 22

AI in Animation and Real-World Data Capture

When developing FIFA 22, EA Sports reimagined its approach to motion capture. Instead of the usual setup of recording a few players in a controlled studio, they captured 22 professional footballers playing a full-intensity, 90-minute match. This method allowed the team to gather authentic gameplay data like never before.

To achieve this, players wore Xsens suits, which recorded every touch, tackle, and sprint. However, these suits couldn't track absolute positions on the field. EA solved this challenge by creating a custom Local Positioning System (LPS) using stadium beacons and chest sensors.

"We'd know where players' joints were in relation to each other, but not relative to a point in space. EA's own local positioning system has been a game-changer for FIFA 22."

- Gareth Eaves, Senior Animation Director at EA

The real leap forward came with the ML‑Flow algorithm. Trained on 8.7 million frames of data, this algorithm generates animations on the fly, factoring in player angle, speed, and cadence. As Lead Gameplay Producer Sam Rivera explained, "What that algorithm is doing is learning from all the data for that motion capture shoot... then it creates that solution, it creates the animation in real time. This is basically the beginning of machine learning taking over animation."

HyperMotion technology bridges the gap between responsiveness and realism by seamlessly transitioning between short, sharp movements and longer, more natural animations mid-stride.

Benefits to Gameplay and Development

FIFA 22 introduced over 4,000 new animations thanks to HyperMotion data, leading to smoother transitions, more lifelike player interactions, and better tactical coordination.

"In the past, we used to prioritize short animations, so the game is responsive... With access to HyperMotion, we're able to put in there longer animations... but the technology allows us to transition in the middle of the animation to a different type of animation, if the situation changes."

- Sam Rivera, Lead Gameplay Producer at EA

Team cohesion also saw a major upgrade. Previously, AI-controlled players operated in small groups of two or three. HyperMotion technology enables entire defensive lines to maintain their shape and move as a unit, creating gameplay patterns that feel more true to life. After three years of development, FIFA 22 achieved a new level of visual and gameplay depth.

Scopely: AI-Optimized Live Operations for Dynamic Worlds

AI for Personalization in Live Games

Scopely's Playgami™ platform takes personalization in gaming to the next level by tailoring gameplay in real time. This proprietary tech relies on advanced analytics and machine learning to create what the company calls "directed-by-consumer™" gameplay. Essentially, the game adapts to each player's behavior, delivering a uniquely tailored experience.

The development of this platform is a collaborative effort. Machine Learning Engineers focus on automating live operations, streamlining game updates and management. At the same time, Data Analysts, Economy Designers, and Product Managers work together to conduct A/B tests, tweaking in-game rewards and pricing strategies to suit individual player preferences. On the marketing side, generative AI tools are employed to quickly develop and test advertising materials for games like Star Trek Fleet Command, enabling rapid creative iterations.
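A/B tests on rewards or pricing depend on each player landing in the same group every time they log in. A common way to get that is to hash the player ID together with the experiment name. The sketch below illustrates the idea. It is not Scopely's system, and the `assign_variant` name, the experiment name, and the variant labels are assumptions.

```python
import hashlib


def assign_variant(player_id, experiment, variants=("control", "treatment")):
    """Deterministically map a player to one variant of an experiment."""
    # Hashing the experiment name with the player ID gives each experiment
    # its own independent split of the player base.
    digest = hashlib.sha256(f"{experiment}:{player_id}".encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]


# The same player always gets the same answer for the same experiment.
first = assign_variant("player-42", "reward-size-test")
second = assign_variant("player-42", "reward-size-test")
print(first == second)
```

Because the assignment is a pure function of the IDs, no lookup table is needed, and analysts can recompute which group a player was in when they evaluate the results.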

"As we have expanded the portfolio of titles that we are operating, our teams are learning faster. We have better understanding of players, our talent density and the type of people that are working at the company have gotten more exciting over time."

- Javier Ferreira, Co-CEO, Scopely

On the technical front, Scopely's AI infrastructure uses frameworks like TensorFlow and PyTorch to create predictive models. These models anticipate player behavior and optimize game mechanics, making the experience more engaging. This robust setup has proven invaluable, particularly after Scopely's acquisition of Niantic’s game business, including Pokémon GO, for $3.5 billion in 2025. This acquisition boosted their ability to manage dynamic, real-world integrated environments. With such a strong foundation, Scopely has positioned itself to drive both player engagement and revenue growth.

Results in Engagement and Monetization

The impact of Scopely's AI-driven systems is clear in their performance metrics. Monopoly GO! set a record as the fastest mobile game to hit $3 billion in revenue by 2025, eventually doubling that to $6 billion. Impressively, it achieved $1 billion in revenue in its very first year. Another standout, Star Trek Fleet Command, brought in over $50 million in revenue within just four months of its release.

These numbers highlight how Scopely's blend of AI and live operations creates not only engaging games but also extraordinary financial success.

Lessons from AI Game Design Success Stories

Comparison Across Case Studies

These five case studies highlight how AI shines when it takes over repetitive tasks, freeing up developers to focus on the creative aspects of game design. Studios that saw the most impressive results - like Games United's 240% productivity boost or LBC Studios' ability to produce character art 8x faster - followed a similar approach. They trained AI systems using their own high-quality assets to maintain a consistent visual style, then used AI to draft content that artists polished manually.

This shift - from creating content entirely by hand to refining AI-generated drafts - has been a game-changer. For instance, King was able to cut manual level adjustments by 95% by deploying playtesting bots, while Ubisoft sped up narrative production by using AI to generate variations of NPC dialogue. Across the board, the key takeaway is clear: AI speeds up the drafting process, allowing teams to focus more on the elements that make games enjoyable.

| Studio | Primary Challenge | AI Solution | Key Result |
|---|---|---|---|
| King | Tedious level balancing | Playtesting bots | 95% fewer manual tweaks, 50% faster refinement |
| Games United | Scaling visual assets | Style training & asset recycling | 240% productivity increase |
| LBC Studios | High character art costs | Custom style training | 8x faster production (320 hrs → 40 hrs) |
| Fortune Mine | High demand for levels | AI-powered drafting | 72% faster level design |
| Ubisoft | Repetitive NPC dialogue | LLM data augmentation | 30:1 input-to-output ratio |

These examples offer developers clear strategies for leveraging AI effectively.

Practical Advice for Game Developers

Based on these success stories, developers should aim to use AI in ways that enhance their creative processes. Hosting internal hackathons focused on specific tasks - like creating UI elements or background art variations - can help identify which AI tools work best within your workflow. The goal is to integrate AI into the drafting phase - tasks like initial sketches, coloring, or generating dialogue variations - while leaving the final touches to your team to ensure quality.

One critical step is to train AI on your own assets to preserve your game's unique aesthetic. For instance, Games United used just six hand-drawn maps to generate entirely new environments in a single week. Similarly, Ace Games saved 120 hours every month by training AI on their seasonal content. Exporting AI-generated outputs as PSD files allows artists to enhance them further in Photoshop.

For procedural content, define "fitness functions" - clear criteria that measure success, like the number of steps required to solve a puzzle. This ensures that AI-generated content is both playable and well-balanced. In narrative design, adopt a "writer-in-the-loop" approach. This method lets AI create diverse NPC dialogue options, enabling writers to focus on refining the text rather than starting from scratch.

"Writers often talk about having an editing brain and a writing brain... if you can jump to the editing step, that's huge."

- Ben Swanson, Ubisoft
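For the procedural side of this advice, a fitness function can be as simple as "how close is this level to the number of steps we want it to take?" The sketch below scores a small grid puzzle that way, using breadth-first search to count the minimum moves. The grid encoding (`S` start, `G` goal, `#` wall), the function names, and the scoring formula are assumptions chosen for illustration.

```python
from collections import deque


def min_steps(grid):
    """Return the fewest moves from 'S' to 'G', or None if the level is unsolvable."""
    start = next((r, c) for r, row in enumerate(grid)
                 for c, cell in enumerate(row) if cell == "S")
    queue = deque([(start, 0)])
    seen = {start}
    while queue:
        (r, c), steps = queue.popleft()
        if grid[r][c] == "G":
            return steps
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < len(grid) and 0 <= nc < len(grid[nr])
                    and grid[nr][nc] != "#" and (nr, nc) not in seen):
                seen.add((nr, nc))
                queue.append(((nr, nc), steps + 1))
    return None


def fitness(grid, target_steps):
    """Score a level from 0.0 to 1.0 by how close its solution length is to the target."""
    steps = min_steps(grid)
    if steps is None:
        return 0.0  # an unplayable level gets the worst possible score
    return 1.0 / (1 + abs(steps - target_steps))


level = ["S.#",
         "..#",
         "#.G"]
print(min_steps(level), fitness(level, target_steps=4))
```

A generator can then produce many candidate levels and keep only those whose score clears a threshold, which filters out both unsolvable layouts and ones that are far shorter or longer than intended.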


Conclusion

AI has grown beyond being just a tool for automation - it's now a creative partner that takes care of repetitive tasks, giving developers more time to focus on building immersive experiences. For example, King's AI playtesting bot slashed manual level adjustments by 95% and cut refinement time by half. LBC Studios saw an eightfold jump in character art production through custom style training, while Fortune Mine Games improved level production efficiency by 72% with AI-assisted level drafting. InnoGames even managed to streamline live-service game maintenance for Sunrise Village, reducing their team size from 25 to just 2–4 members. These examples clearly show how AI is reshaping game development workflows.

"We are no longer just using AI to fill space. We're using it to think with us." - Anastasia Opara, Technical Designer, Epic Games

For developers ready to explore AI, the first steps are simple: start small, organize hackathons, and train AI with your own assets to keep your unique style intact. The aim isn't to replace human creativity but to enhance it - allowing teams to focus on designing experiences that truly captivate players.

FAQs

What game design tasks benefit most from AI?

AI shines in areas such as level and world generation, procedural content creation, and automating elements like assets, enemy behaviors, and narratives. It allows for the quick development of dynamic and adaptable environments, cutting both time and costs. By taking care of repetitive drafting work, AI frees developers to concentrate on more creative aspects of game design. Fortune Mine Games, for example, reported a 72% gain in level production efficiency from AI-assisted level drafting. Environment design and content variation are prime examples of where AI-driven tools make a significant impact.

How do studios keep a consistent art style with AI?

Studios keep an art style consistent when using AI by relying on reference images or style guides to direct the creation of assets. They often train custom AI models using carefully selected visuals, ensuring a unified look across characters, props, and environments. To further strengthen this cohesion, teams use clear documentation, maintain open communication, and craft style-specific prompts. This approach allows AI tools to speed up asset creation while staying true to the intended aesthetic.

How does real-time difficulty adaptation avoid feeling unfair?

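Real-time difficulty adjustment helps maintain balance in gameplay by tailoring challenges to the player's skill level. This keeps the experience engaging, avoiding frustration from overly tough obstacles or boredom from tasks that are too easy. To keep it from feeling unfair, the changes need to be subtle and gradual: DynamicPlay Studios describes this as "invisible adaptation", and the Cubic Games system eased difficulty for at-risk players in small nightly steps rather than abrupt mid-session swings. Done this way, players of all skill levels can enjoy a fair and satisfying experience without noticing the game adjusting around them.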