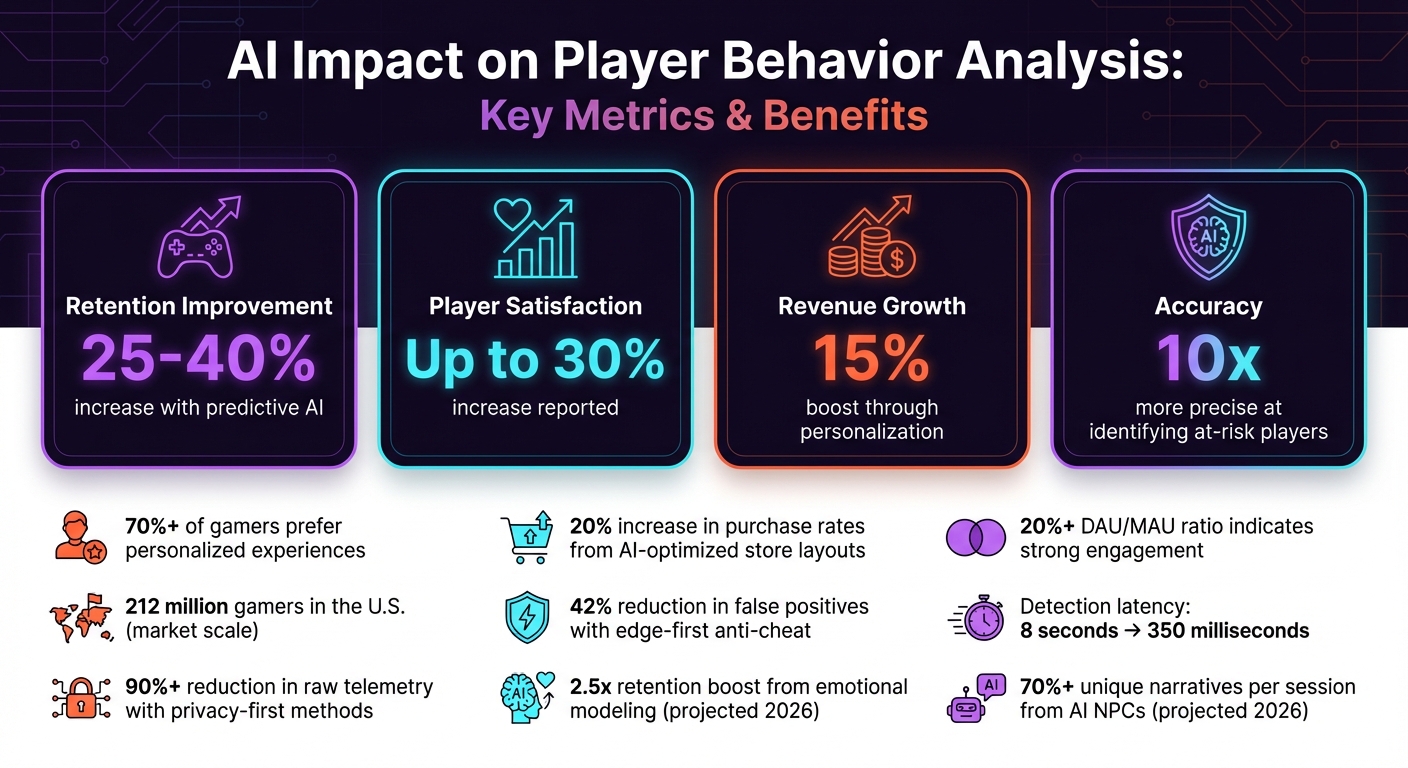

AI is transforming how games analyze and respond to player behavior. Developers now use predictive AI to anticipate actions, improve engagement, and reduce churn. Reported benefits of this approach include:

- Retention: Improvements of 25–40% in retention rates.

- Player Satisfaction: Increases of up to 30%.

- Revenue Growth: Revenue gains of 15% through better personalization and monetization strategies.

- Accuracy: 10x more precise identification of at-risk players.

Key practices include accurate data collection, privacy compliance, and using advanced algorithms like RNNs or LSTMs. AI also enhances gameplay by adjusting difficulty, recommending content, and detecting anomalies like cheating. Continuous model updates ensure relevance as player behavior evolves.

To succeed with AI, focus on clean data, privacy, fairness, and regular testing. Done right, AI can create engaging, personalized gaming experiences while balancing player trust and business goals.

AI Impact on Gaming: Key Performance Metrics and Benefits

Data Collection and Preparation

For predictive modeling to work effectively, it all starts with solid data. The quality of your data collection process directly impacts the accuracy of AI-driven predictions or personalized experiences. Raw telemetry data often comes with issues like duplicates, cryptic codes, or outdated events. Without proper preparation, even the most advanced AI models can deliver unreliable results.

Ensuring Data Accuracy and Completeness

To get the most out of your data, standardize event naming conventions before you even begin collecting it. Use natural language for display names and include detailed descriptions that explain why the event matters, not just when it happens. For instance, naming an event "Category Selected" is far more intuitive than something like catSelectClick. Clear, contextual event descriptions help AI models perform better.

Another key step is identifying and merging semantic duplicates. For example, events labeled "played song" and "song played" mean the same thing to a developer, but an AI system treats them as separate events, which can lead to conflicting results. Consolidate these into a single format. Similarly, replace cryptic codes like sku_29881 with descriptive labels such as "Women's Running Shoe" so the AI can easily understand the context.
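A minimal sketch of that consolidation step, assuming events arrive as Python dicts with a `name` field and an optional `item` code. The alias table and SKU lookup are hypothetical stand-ins for your own tracking plan:

```python
# Hypothetical alias table: every known variant maps to one canonical event name.
EVENT_ALIASES = {
    "played song": "Song Played",
    "song played": "Song Played",
    "song_played": "Song Played",
    "catselectclick": "Category Selected",
}

# Hypothetical lookup that swaps opaque codes for descriptive labels.
SKU_LABELS = {"sku_29881": "Women's Running Shoe"}


def normalize_event(event: dict) -> dict:
    """Return a copy of the event with a canonical name and a readable item label."""
    raw_name = event["name"].strip().lower()
    # Fall back to the original name so unknown events are kept, not dropped.
    name = EVENT_ALIASES.get(raw_name, event["name"])
    normalized = {**event, "name": name}
    item = event.get("item")
    if item in SKU_LABELS:
        normalized["item"] = SKU_LABELS[item]
    return normalized


events = [
    {"name": "played song", "player": "p1"},
    {"name": "Song Played", "player": "p2"},
    {"name": "purchase", "player": "p1", "item": "sku_29881"},
]
print([normalize_event(e)["name"] for e in events])
```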

Eliminate unnecessary noise by removing stale, test, or deprecated events, and automate this cleanup so that events that haven’t fired in months are flagged. Platforms like Firebase allow up to 500 unique event types and 25 parameters per event, but a cluttered taxonomy can lower AI confidence and increase errors.
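The cleanup can be automated with a scheduled check like the sketch below. It assumes you can export each event's last-seen timestamp; the 90-day cutoff and the `test_`/`debug_`/`deprecated_` prefixes are illustrative conventions, not platform rules:

```python
from datetime import datetime, timedelta

# Hypothetical naming conventions for events that should never reach production models.
NOISE_PREFIXES = ("test_", "debug_", "deprecated_")


def find_cleanup_candidates(last_seen, now, max_age_days=90):
    """Flag events that are stale (not fired recently) or named as test/deprecated."""
    cutoff = now - timedelta(days=max_age_days)
    flagged = []
    for name, seen in last_seen.items():
        if seen < cutoff or name.startswith(NOISE_PREFIXES):
            flagged.append(name)
    return sorted(flagged)


now = datetime(2025, 6, 1)
last_seen = {
    "level_complete": datetime(2025, 5, 31),
    "old_tutorial_step": datetime(2024, 11, 2),
    "test_purchase": datetime(2025, 5, 30),
}
print(find_cleanup_candidates(last_seen, now))
```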

Session timeouts should also be tailored to your game type. Casual games often use a 30-minute timeout, while more intensive games might need 60 minutes or longer to prevent inflated session counts. To avoid in-game lag, use asynchronous data handling and batch writes for telemetry processes. A stickiness ratio (DAU/MAU) above 20% is often a strong indicator of player engagement.
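A minimal sketch of inactivity-based sessionization and the DAU/MAU ratio, assuming a session ends when the gap between consecutive events exceeds the timeout. It shows how the same event stream yields different session counts under a 30- versus 60-minute timeout:

```python
from datetime import datetime, timedelta


def count_sessions(timestamps, timeout_minutes=30):
    """Count sessions: a new one starts when the gap since the previous event exceeds the timeout."""
    if not timestamps:
        return 0
    ordered = sorted(timestamps)
    timeout = timedelta(minutes=timeout_minutes)
    sessions = 1
    for prev, curr in zip(ordered, ordered[1:]):
        if curr - prev > timeout:
            sessions += 1
    return sessions


def stickiness(dau, mau):
    """DAU/MAU ratio; values above roughly 0.20 are commonly read as strong engagement."""
    return dau / mau if mau else 0.0


t0 = datetime(2025, 6, 1, 20, 0)
events = [t0, t0 + timedelta(minutes=10), t0 + timedelta(minutes=55), t0 + timedelta(minutes=70)]
print(count_sessions(events, timeout_minutes=30))  # casual-game timeout
print(count_sessions(events, timeout_minutes=60))  # longer timeout for intensive games
print(stickiness(dau=12_000, mau=50_000))
```

With a 30-minute timeout, the 45-minute gap splits play into two sessions; with 60 minutes it counts as one.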

Once your data is cleaned and organized, the next step is ensuring it aligns with privacy and security standards.

Compliance with Privacy Regulations

After refining your data, focus on meeting privacy regulations to maintain player trust and ensure security. Laws like GDPR and CCPA require careful handling of player data. Limit data collection to only what’s necessary for your business operations. Often, session patterns and progress markers are enough to personalize experiences without requiring sensitive identity data. As Lumenalta explains:

Data minimization helps because you can personalize effectively with session patterns and progress markers, not sensitive identity data.
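Data minimization can be enforced mechanically with an allowlist applied before data leaves the client. The field names below are hypothetical; the point is that anything not explicitly needed for personalization is dropped:

```python
# Hypothetical allowlist: the only fields the personalization pipeline may receive.
# player_id is assumed to be a pseudonymous game ID, not a real-world identity.
PERSONALIZATION_FIELDS = {"player_id", "session_length_s", "level", "quests_completed"}


def minimize(record: dict) -> dict:
    """Drop every field not on the allowlist before the record is sent anywhere."""
    return {k: v for k, v in record.items() if k in PERSONALIZATION_FIELDS}


raw = {
    "player_id": "p-481",
    "session_length_s": 1260,
    "level": 14,
    "email": "player@example.com",
    "precise_location": (51.5, -0.12),
}
print(minimize(raw))
```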

Different types of data require different legal bases. For example, gameplay data needed for core functionality might fall under "contract performance", while more detailed behavioral analytics for monetization often need explicit consent. If your game targets younger players, comply with laws like COPPA (US) and GDPR, which require age checks and parental consent before collecting children's personal data: COPPA applies to children under 13, and GDPR sets a default age of 16, which member states may lower to 13.

To protect player data, encrypt everything - both at rest (using key-management services like AWS KMS) and in transit. Offer granular privacy settings that let players control what information they share, such as keeping achievements public but location data private. Non-compliance with GDPR can result in fines of up to €20 million or 4% of global annual revenue, whichever is higher, so this isn’t something to overlook.

AI can also assist in monitoring compliance. Use it to classify third-party trackers and detect unexpected changes in vendor scripts. Before rolling out AI-driven personalization, test models in shadow mode. This allows you to log recommendations and audit their behavior in edge cases without exposing them to players, reducing the risk of regulatory violations.
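A minimal sketch of shadow mode, assuming two hypothetical functions: `current_offer` (what players see today) and `candidate_offer` (the model being audited). Only the production decision is returned; the candidate's output is logged for later review:

```python
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("shadow")


def current_offer(player):
    """Existing production rule (hypothetical): everyone sees the starter pack."""
    return "starter_pack"


def candidate_offer(player):
    """New model under evaluation (hypothetical stand-in for a real recommender)."""
    return "quest_bundle" if player["quests_completed"] > 10 else "starter_pack"


def choose_offer(player, shadow_log):
    """Serve the production decision; only record what the candidate would have done."""
    served = current_offer(player)
    shadow = candidate_offer(player)
    shadow_log.append({"player": player["id"], "served": served, "shadow": shadow})
    if shadow != served:
        log.info("shadow disagreement for %s: %s vs %s", player["id"], shadow, served)
    return served  # players never see the shadow output


shadow_log = []
for p in [{"id": "a", "quests_completed": 3}, {"id": "b", "quests_completed": 25}]:
    choose_offer(p, shadow_log)

disagreement = sum(r["served"] != r["shadow"] for r in shadow_log) / len(shadow_log)
print(f"disagreement rate: {disagreement:.0%}")
```

The disagreement rate is one simple audit signal; the cases where the two decisions differ are the edge cases worth inspecting by hand.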

Predictive Modeling for Player Behavior

Once you've established solid, compliant data practices, the next step is using predictive modeling to enhance player engagement. With high-quality data in hand, you can create models that anticipate player actions. These models are key for forecasting churn, improving engagement, and tailoring player experiences. The process involves selecting algorithms aligned with your objectives and continually refining them.

Choosing the Right Algorithms

If you're tackling churn prediction, start with Logistic Regression. It’s a great baseline because it’s straightforward to interpret, helping you identify the factors behind player attrition. After that, consider using ensemble models like Random Forest, which combine multiple decision trees to deliver more accurate predictions and minimize overfitting.
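To make the baseline concrete, here is a minimal logistic regression trained by batch gradient descent in plain Python. The two features and the toy data are hypothetical; in practice you would likely use a library implementation such as scikit-learn's `LogisticRegression` (and `RandomForestClassifier` for the ensemble step):

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(X, y, lr=0.1, epochs=2000):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n_features = len(X[0])
    w = [0.0] * n_features
    b = 0.0
    n = len(X)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            # Prediction error for one player: predicted probability minus label.
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def churn_probability(w, b, x):
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


# Hypothetical, pre-scaled features: [days since last login / 10, sessions last week / 10]
X = [[0.1, 0.9], [0.2, 0.8], [0.3, 0.7], [1.5, 0.1], [1.8, 0.2], [2.0, 0.0]]
y = [0, 0, 0, 1, 1, 1]  # 1 = churned
w, b = train_logistic(X, y)
print("weights:", [round(v, 2) for v in w])
print("churn risk for an inactive player:", round(churn_probability(w, b, [1.7, 0.1]), 2))
```

Because each feature has a single weight, its sign and magnitude are directly readable (as long as features are on comparable scales), which is what makes this model a useful first step for explaining attrition.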

For sequential behaviors, such as tracking session flows or purchase trends, Recurrent Neural Networks (RNNs) or Long Short-Term Memory (LSTM) networks work well. They excel at capturing long-term patterns in player actions. If you need to predict not just whether an event will happen but when, Survival Analysis techniques - like the Cox Proportional Hazards Model - can pinpoint the likely churn windows for specific player groups. And for real-time decisions, such as optimizing rewards or timing special offers, Contextual Bandits can determine which incentives work best for particular player cohorts.
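Fitting a Cox model usually relies on a library (for example, `CoxPHFitter` in the Python `lifelines` package). As a self-contained illustration of the survival-analysis idea, the sketch below swaps in the simpler Kaplan-Meier estimator, which estimates the share of a cohort still active over time while correctly handling players who are still active when observation ends (right-censored). The durations are made up:

```python
def kaplan_meier(durations, churned):
    """Kaplan-Meier survival curve.

    durations: days each player was observed.
    churned: True if the player churned on that day, False if still active
    when observation ended (right-censored).
    Returns [(day, probability of still being active after that day)] at each churn day.
    """
    events = sorted(zip(durations, churned))
    at_risk = len(events)
    survival = 1.0
    curve = []
    i = 0
    while i < len(events):
        day = events[i][0]
        deaths = 0
        removed = 0
        # Group all players whose observation ends on the same day.
        while i < len(events) and events[i][0] == day:
            deaths += events[i][1]
            removed += 1
            i += 1
        if deaths:
            survival *= 1 - deaths / at_risk
            curve.append((day, survival))
        at_risk -= removed  # churned and censored players both leave the risk set
    return curve


durations = [1, 1, 3, 7, 7, 14, 30, 30]
churned = [True, False, True, True, False, False, True, False]
for day, prob in kaplan_meier(durations, churned):
    print(f"day {day}: {prob:.2f} still active")
```

Steep drops in the curve mark the churn windows worth targeting; the Cox model extends this by relating churn risk to player features.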

When selecting algorithms, it’s important to include design safeguards. For instance, Dynamic Difficulty Adjustment (DDA) should be carefully controlled in competitive or ranked modes to maintain fairness. As Lumenalta explains:

Bad DDA feels like rubber-banding, hidden cheating, or punishment for doing well.

Your algorithms should aim for fairness and long-term engagement, not just short-term performance spikes. By doing so, these models can predict player actions while also enabling adaptive, real-time gameplay.

Continuous Model Training and Optimization

Player behavior isn’t static - game updates, trends, and new content can shift patterns over time. To keep your models accurate, continuous training is essential. Set up automated training pipelines, using automated machine learning (AutoML) tooling where it helps, to handle feature extraction, hyperparameter tuning, and deployment with minimal manual effort.

Before rolling out new models, test them in shadow mode. This means running predictions in the background without exposing them to players, allowing you to evaluate performance against real-world scenarios and identify any unexpected issues. Use k-fold cross-validation to ensure your models generalize well. Additionally, monitor for data drift by setting up technical alerts and using tools like AWS X-Ray to trace functions and identify performance bottlenecks. This ensures your models deliver low-latency, real-time responses.
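The article does not name a drift metric; one common choice is the Population Stability Index (PSI), sketched below for a single numeric feature. The 10-bin layout and the 0.2 alert threshold are conventional rules of thumb rather than fixed standards, and the session-length data is synthetic:

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a training-time sample and a live sample."""
    lo = min(expected)
    hi = max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the training range into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-4) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def drift_alert(expected, actual, threshold=0.2):
    return psi(expected, actual) > threshold


training = [float(v) for v in range(10, 60)]  # session lengths in minutes (synthetic)
live_same = list(training)
live_shifted = [v + 25 for v in training]     # e.g. after a content update
print(round(psi(training, live_same), 3), drift_alert(training, live_shifted))
```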

To refine your models further, incorporate both implicit feedback (like in-game behavior) and explicit feedback (such as surveys and reviews). For high-impact changes - like adjustments to the game economy or matchmaking - use staged rollouts with small player groups and holdout cohorts. This allows you to validate improvements before scaling up. By maintaining a cycle of updates, your predictive models can continually adapt to deliver personalized, dynamic experiences for players.
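One way to run staged rollouts with a stable holdout is deterministic hash bucketing, sketched below. The salt string and percentages are hypothetical. Because each player's bucket never changes, raising `rollout_pct` only adds players to the treatment group and never moves anyone out of the holdout:

```python
import hashlib


def bucket(player_id: str, salt: str) -> float:
    """Map a player deterministically to [0, 1) so assignment is stable across sessions."""
    digest = hashlib.sha256(f"{salt}:{player_id}".encode()).hexdigest()
    return int(digest[:8], 16) / 0x100000000


def assign(player_id, rollout_pct, holdout_pct=0.05, salt="economy-v2"):
    """Return 'holdout', 'treatment', or 'control' for a staged rollout."""
    b = bucket(player_id, salt)
    if b < holdout_pct:
        return "holdout"  # never receives the change; long-term comparison group
    if b < holdout_pct + rollout_pct:
        return "treatment"
    return "control"


for pid in ["player-1", "player-2", "player-3"]:
    print(pid, assign(pid, rollout_pct=0.10))
```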

Personalization and Adaptive Gameplay Design

Adaptive gameplay, built on predictive modeling, now fine-tunes content to align with players' real-time behavior. This level of personalization has been reported to boost both player engagement and revenue, and reported data suggests that more than 70% of gamers prefer experiences tailored to their preferences.

Tailoring Content to Player Preferences

What makes a game "better" depends on the player. Some thrive on achieving mastery and climbing competitive ranks, while others are drawn to storytelling or social interactions. By analyzing player behavior - like streaks in quest completions, average session length, or levels of social engagement - you can create recommendations that resonate with individual motivations.

Recommendation systems can suggest quests, items, or game modes based on a player’s past actions. For example, one game developer reorganized their in-game store using purchase history and engagement data, resulting in a 20% increase in purchase rates. Tools like contextual bandits can identify which incentives work best for different player groups, while clustering methods group players with similar behaviors to deliver content that feels relevant.
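The sketch below is a simplified contextual bandit: an epsilon-greedy learner kept separately per cohort, where the cohort label stands in for richer context (for example, the output of a clustering step). The offer names and conversion rates are invented for the simulation; a production system would use richer features and a more sample-efficient policy:

```python
import random
from collections import defaultdict


class CohortBandit:
    """Epsilon-greedy bandit with separate reward estimates per player cohort."""

    def __init__(self, arms, epsilon=0.1, seed=None):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = defaultdict(lambda: {a: 0 for a in self.arms})
        self.values = defaultdict(lambda: {a: 0.0 for a in self.arms})

    def choose(self, cohort):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)  # explore
        values = self.values[cohort]
        return max(self.arms, key=lambda a: values[a])  # exploit

    def update(self, cohort, arm, reward):
        """Incrementally update the mean reward (e.g. 1.0 if the offer converted)."""
        self.counts[cohort][arm] += 1
        n = self.counts[cohort][arm]
        self.values[cohort][arm] += (reward - self.values[cohort][arm]) / n


TRUE_RATES = {  # hypothetical conversion rates, unknown to the bandit
    "competitive": {"xp_boost": 0.30, "cosmetic": 0.10},
    "collector": {"xp_boost": 0.05, "cosmetic": 0.25},
}
bandit = CohortBandit(["xp_boost", "cosmetic"], epsilon=0.1, seed=7)
env = random.Random(42)
for _ in range(5000):
    for cohort, rates in TRUE_RATES.items():
        arm = bandit.choose(cohort)
        bandit.update(cohort, arm, 1.0 if env.random() < rates[arm] else 0.0)

for cohort in TRUE_RATES:
    best = max(bandit.values[cohort], key=bandit.values[cohort].get)
    print(cohort, "->", best)
```

After training, the preferred incentive differs by cohort, while the small exploration rate keeps the estimates for other offers up to date.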

Case studies from games like Forza Motorsport and Minecraft offer valuable insights into refining recommendations and balancing challenges. When personalizing, prioritize these elements in order: clarity, pacing, rewards, social features, and monetization. This progression reduces friction for players, making them more receptive to purchases later. Strategies like "golden pathing" can align immediate recommendations - such as the next mission or item - with longer-term goals like completing the game or achieving a rank.

The key? Ensure these personalized features maintain a sense of fairness and control for players.

Maintaining Player Agency

While personalization enhances engagement, it’s crucial to protect player autonomy. Overstepping with intrusive or unfair adjustments can alienate your audience.

AI should work within a curated framework, selecting from pre-approved options rather than independently creating rules. This ensures the game stays true to its original vision and avoids harmful optimizations, like increasing frustration to push spending. A shared "minimum common experience" helps foster community discussions and supports content creators who rely on consistent game mechanics. Competitive and ranked modes should stick to fixed difficulty rules to ensure fairness, while Dynamic Difficulty Adjustment (DDA) can be reserved for onboarding or optional settings. However, if DDA feels like “rubber-banding” or “hidden cheating,” it risks eroding player trust.
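A minimal sketch of these guardrails, assuming three designer-authored presets and hypothetical mode labels. The function can only move one step between existing presets, never invents new values, and leaves ranked play on fixed rules; outside onboarding, DDA applies only to players who opted in:

```python
from dataclasses import dataclass

# Designer-authored presets: the system may only pick from these.
DIFFICULTY_PRESETS = ("assisted", "standard", "hard")


@dataclass
class MatchContext:
    mode: str              # hypothetical labels: "ranked", "casual", "onboarding"
    player_opted_in: bool  # player enabled adaptive difficulty in settings
    recent_deaths: int     # deaths in the current attempt window


def pick_difficulty(ctx: MatchContext, default: str = "standard") -> str:
    """Choose a preset; DDA is off in ranked and limited to onboarding or opted-in players."""
    if ctx.mode == "ranked":
        return default  # fixed rules keep competitive play fair
    if ctx.mode != "onboarding" and not ctx.player_opted_in:
        return default
    idx = DIFFICULTY_PRESETS.index(default)
    if ctx.recent_deaths >= 5:    # hypothetical struggle threshold
        idx -= 1                  # step down at most one preset
    elif ctx.recent_deaths == 0:
        idx += 1                  # step up at most one preset
    idx = max(0, min(idx, len(DIFFICULTY_PRESETS) - 1))
    return DIFFICULTY_PRESETS[idx]


print(pick_difficulty(MatchContext("ranked", True, 9)))
print(pick_difficulty(MatchContext("onboarding", False, 6)))
```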

Before rolling out AI-driven features, test them in "shadow mode", where predictions are logged but not yet applied to gameplay. This allows you to identify edge cases and refine the system. Staged testing with small groups and control cohorts can validate improvements, while rollback mechanisms ensure you can quickly undo changes that disrupt game balance. By blending personalization with transparency and control, you can craft adaptive experiences that players find both rewarding and fair.

Anomaly Detection and Behavioral Monitoring

Beyond personalized gameplay and predictive modeling, detecting anomalies is a key part of ensuring fair play in gaming. Swiftly identifying cheaters and bots keeps the gaming environment balanced and enjoyable for everyone. AI-driven anomaly detection processes vast amounts of match data daily, enabling real-time interventions instead of relying on delayed bans. Here’s a closer look at how AI identifies and addresses unusual gameplay patterns.

Detecting Cheating and Bot Activity

AI uses a variety of indicators to spot exploitative behavior. These include:

- Movement anomalies: Unusual actions like speed hacks, teleportation, or bypassing physics.

- Combat metrics: Unrealistic aiming speeds, impossibly precise accuracy, or reaction times that fall outside human limits.

- Input patterns: Signs like automated scripts, irregular click rates, low input entropy, or timing inconsistencies.
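As an illustration of the combat-metric check, the sketch below compares a player's median reaction time with a population baseline. The 4-sigma cutoff and the 100 ms floor are assumptions chosen for the example, and a flag like this should feed into further review rather than trigger a ban on its own:

```python
import statistics


def is_suspicious_reaction(baseline_ms, player_ms, z_cutoff=4.0, human_floor_ms=100):
    """Flag a player whose median reaction time is implausibly fast.

    baseline_ms: reaction times sampled from the general population (defines "normal").
    player_ms: recent reaction times for one player.
    """
    mu = statistics.mean(baseline_ms)
    sigma = statistics.stdev(baseline_ms)
    player_median = statistics.median(player_ms)
    z = (player_median - mu) / sigma
    return player_median < human_floor_ms or z < -z_cutoff


baseline = [220, 250, 240, 260, 230, 245, 255, 235, 265, 225]
print(is_suspicious_reaction(baseline, [230, 245, 250]))  # typical player
print(is_suspicious_reaction(baseline, [90, 95, 88]))     # below the assumed human floor
```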

AI doesn’t just focus on gameplay. It also monitors network telemetry to detect latency spikes or packet manipulation and tracks economic signals for suspicious resource gains, which could indicate exploits. Additionally, graph neural networks analyze player interactions to uncover behaviors like collusion or account sharing.

One notable improvement in detection is edge-first processing. In one reported case from 2026, a mid-sized studio implemented an edge-first anti-cheat system that reduced false positives by 42%. Detection latency dropped from 8 seconds to under 350 milliseconds thanks to serverless edge scoring functions. Because features like timing histograms and input entropy are summarized locally on players’ devices, these systems also save bandwidth and safeguard privacy.
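The local summarization step might look like the sketch below, assuming the client records click timestamps in milliseconds. Only a histogram of inter-click intervals and its Shannon entropy are sent upstream; the 25 ms bin width is an arbitrary choice for the example. Scripted input with perfectly regular timing collapses into a single bin with zero entropy:

```python
import math
from collections import Counter


def summarize_inputs(click_times_ms, bin_ms=25):
    """Summarize raw input timestamps into a small feature payload on the client.

    Only the histogram of inter-click intervals and its Shannon entropy (in bits)
    leave the device; the raw timestamps do not.
    """
    intervals = [b - a for a, b in zip(click_times_ms, click_times_ms[1:])]
    histogram = Counter(i // bin_ms for i in intervals)
    total = len(intervals)
    entropy = (
        sum((c / total) * math.log2(total / c) for c in histogram.values()) if total else 0.0
    )
    return {"histogram": dict(histogram), "entropy_bits": entropy, "n": total}


bot = summarize_inputs(list(range(0, 1000, 50)))  # clicks exactly every 50 ms
human = summarize_inputs([0, 130, 210, 390, 450, 610, 700, 905])
print(bot["entropy_bits"], round(human["entropy_bits"], 2))
```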

A layered defense strategy is essential. This combines client-side checks with server-side validation for critical actions like movement or damage. Tiered responses - such as warnings, temporary debuffs, or kicks - help minimize false positives while collecting evidence. To stay ahead of cheat developers, studios should define normal gameplay ranges using data from millions of sessions and regularly update detection signatures.
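A minimal sketch of tiered responses, assuming an upstream model emits a suspicion score between 0 and 1. The thresholds and tier names are hypothetical and would normally be tuned in shadow mode; every call records evidence so decisions can be reviewed or appealed:

```python
from dataclasses import dataclass, field


@dataclass
class PlayerRecord:
    flags: int = 0
    evidence: list = field(default_factory=list)


def respond(record: PlayerRecord, score: float, detail: str) -> str:
    """Escalate gradually: warning, then temporary debuff, then kick."""
    record.evidence.append((score, detail))  # keep evidence for review and appeals
    if score < 0.6:
        return "none"
    record.flags += 1
    if record.flags == 1:
        return "warn"
    if record.flags == 2:
        return "temp_debuff"
    if score >= 0.9:
        return "kick_and_review"  # repeated high-confidence hits get human review
    return "kick"


rec = PlayerRecord()
for score, detail in [(0.3, "normal aim"), (0.7, "aim snap"), (0.75, "aim snap"), (0.95, "speed")]:
    print(respond(rec, score, detail))
```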

Balancing Privacy and Fairness in Detection

AI detection systems are evolving to balance privacy concerns with effectiveness. With stricter privacy regulations in place by 2026, studios have adopted privacy-first methods. By processing personally identifiable information (PII) locally and sending only summarized behavioral data to servers, studios have reduced raw telemetry by over 90% while keeping detection accurate.

A two-tier validation pipeline can further enhance fairness. Automated systems handle clear-cut cases, while ambiguous or high-stakes situations - like tournament bans or professional player infractions - are escalated for manual review with detailed evidence. Running models in shadow mode before live deployment helps gauge false positive rates, and conservative thresholds at the edge prevent mass bans during unexpected gameplay spikes or outages.

Incorporating community feedback is another valuable layer. Signals from trusted creators or tournament organizers can help validate automated detections. Transparent appeal processes and limited replay data retention allow players to challenge decisions, ensuring fairness and accountability. This combination of AI and human oversight keeps detection systems both effective and equitable.

Measuring AI Performance and Optimization

To ensure AI delivers value to both players and the business, you need to measure its performance. Without solid metrics, it's impossible to confirm whether your AI improves retention or enhances the player experience.

Key Metrics for Evaluating Success

When implementing AI strategies, having clear metrics is crucial. Retention is one of the most telling indicators: Day 1, Day 7, and Day 30 retention rates show whether your AI-driven personalization and difficulty adjustments are keeping players engaged. Even a modest 3% to 5% improvement in Day 1 retention can have a major impact, often worth more than the cost of acquiring an equivalent number of new users. With over 212 million gamers in the U.S., small percentage gains can translate into massive results.
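Day-N retention can be computed as below, using the common "classic" definition (active on exactly day N after install; some teams instead count activity on or after day N). The player data is invented:

```python
from datetime import date, timedelta


def day_n_retention(installs, activity, n):
    """Share of installed players who were active exactly n days after install.

    installs: {player_id: install_date}; activity: {player_id: set of active dates}.
    """
    if not installs:
        return 0.0
    retained = sum(
        1 for pid, d0 in installs.items()
        if d0 + timedelta(days=n) in activity.get(pid, set())
    )
    return retained / len(installs)


start = date(2025, 6, 1)
installs = {p: start for p in ("p1", "p2", "p3", "p4")}
activity = {
    "p1": {start + timedelta(days=1), start + timedelta(days=7)},
    "p2": {start + timedelta(days=1)},
    "p3": {start + timedelta(days=30)},
}
for n in (1, 7, 30):
    print(f"D{n}: {day_n_retention(installs, activity, n):.0%}")
```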

Another important measure is engagement signals, which reflect player satisfaction. On the business side, monetization metrics like Average Revenue Per Daily Active User (ARPDAU) and Customer Lifetime Value (CLTV) reveal whether AI personalization efforts are driving sustainable revenue growth.
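The two monetization metrics reduce to simple arithmetic. The sketch below computes ARPDAU as daily revenue divided by DAU and uses one common approximation for lifetime value: ARPDAU multiplied by the expected number of active days implied by a retention curve. It ignores discounting, refunds, and platform fees, and the numbers are illustrative:

```python
def arpdau(daily_revenue, dau):
    """Average revenue per daily active user."""
    return daily_revenue / dau if dau else 0.0


def estimate_cltv(arpdau_value, retention_curve):
    """Approximate lifetime value as ARPDAU times expected active days.

    retention_curve[d] is the share of a cohort active on day d + 1 after install;
    the install day itself counts as one active day.
    """
    expected_active_days = 1.0 + sum(retention_curve)
    return arpdau_value * expected_active_days


value = arpdau(4_500.0, 30_000)
print(round(value, 2), round(estimate_cltv(value, [0.40, 0.30, 0.25]), 4))
```

A real retention curve would extend over many more days; truncating it, as in this toy example, understates lifetime value.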

Technical performance also plays a key role. Metrics such as crash rates and server latency directly influence how effectively platform and app-store recommendation algorithms promote your game. Additionally, a stickiness ratio (DAU/MAU) above 20% signals strong player engagement. For AI systems managing dynamic difficulty, tracking player deaths, time-to-fail, and recovery speed can help maintain an ideal "flow" state.

"Traditional analytics answers 'what happened'; predictive analytics answers 'what will likely happen next'." - Play-store.Cloud

Tracked together, these metrics show whether AI-driven personalization is actually attracting and keeping players while delivering engaging, dynamic gameplay experiences.

Addressing Model Bias and Imbalance

As player behavior shifts, continuous monitoring is essential to ensure AI systems remain effective and unbiased. Tracing tools like AWS X-Ray can surface performance declines, while model-monitoring features such as Amazon SageMaker Model Monitor and SageMaker Clarify can flag data drift and emerging bias in your models. To reduce bias, consider serving some off-policy (exploratory) recommendations to gather fresh data and refresh your algorithms.

One common issue is the "rich-get-richer" effect, where recommendation systems amplify already popular content, leaving niche or new options overlooked. To combat this, an explore/exploit strategy can help balance recommendations between proven favorites and fresh, untested items. Placement has a strong effect: reordering an in-game store based on engagement has been credited with a 20% increase in purchase rates, and a ranking driven only by past engagement can just as easily bury new items. Constant monitoring is necessary to ensure they remain visible.
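One simple explore/exploit variant for a store is to reserve a few slots for under-exposed items, sketched below. The impression threshold, slot counts, and item data are hypothetical; epsilon-greedy or Thompson sampling over item conversion rates are common alternatives:

```python
import random


def rank_store(items, score, impressions, slots=4, explore_slots=1, min_impressions=500, seed=None):
    """Order store items mostly by score, but reserve slots for under-exposed items.

    score: {item: engagement-based score}; impressions: {item: times shown so far}.
    """
    rng = random.Random(seed)
    ranked = sorted(items, key=lambda i: score.get(i, 0.0), reverse=True)
    # Items that have not been shown often enough to judge them fairly.
    fresh = [i for i in ranked if impressions.get(i, 0) < min_impressions]
    picks = rng.sample(fresh, min(explore_slots, len(fresh)))
    proven = [i for i in ranked if i not in picks]
    # Keep the top proven item first, then the exploration picks, then the rest.
    return (proven[:1] + picks + proven[1:])[:slots]


items = ["A", "B", "C", "D", "E", "F"]
score = {"A": 0.9, "B": 0.8, "C": 0.7, "D": 0.6, "E": 0.0, "F": 0.0}
impressions = {"A": 10_000, "B": 8_000, "C": 9_000, "D": 7_000, "E": 20, "F": 0}
print(rank_store(items, score, impressions, slots=4, explore_slots=2, seed=3))
```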

Balanced scorecards are a useful tool to avoid focusing too narrowly on a single metric. Instead, track a mix of factors like engagement, player longevity, and community health. Regular audits of metadata, telemetry, and creator activity can help uncover biases in the signals driving your AI systems. Techniques such as A/B testing, canary releases, and feature flags provide additional safeguards to ensure your systems function as intended without introducing unintended or unfair outcomes.

"Personalization that feels unfair, creepy, or pay-to-win will backfire, even if short-term metrics spike." - Lumenalta

For critical decisions, human oversight is non-negotiable. While AI can process vast amounts of data, actions like player bans or major economic penalties should always involve human review. Consistent telemetry is also vital - treat it as a contract with stable event names and versioning, as inconsistent data is a leading cause of model failure. Lastly, establishing service level agreements between engineering and product teams ensures that data quality remains reliable.
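Treating telemetry as a contract can be as simple as validating every event against a versioned schema before it enters the training data. The contract below is hypothetical:

```python
# Hypothetical telemetry contract: event name -> {schema version -> required fields and types}.
CONTRACT = {
    "level_complete": {
        1: {"player_id": str, "level": int},
        2: {"player_id": str, "level": int, "duration_s": float},
    },
}


def validate(event: dict) -> list:
    """Return a list of contract violations; an empty list means the event is valid."""
    name = event.get("name")
    version = event.get("schema_version")
    versions = CONTRACT.get(name)
    if versions is None:
        return [f"unknown event name: {name!r}"]
    schema = versions.get(version)
    if schema is None:
        return [f"unknown schema_version {version!r} for {name}"]
    errors = []
    for field_name, expected_type in schema.items():
        if field_name not in event:
            errors.append(f"missing field: {field_name}")
        elif not isinstance(event[field_name], expected_type):
            errors.append(f"{field_name} should be {expected_type.__name__}")
    return errors


print(validate({"name": "level_complete", "schema_version": 2,
                "player_id": "p1", "level": 3, "duration_s": 42.5}))
print(validate({"name": "LevelComplete", "schema_version": 1}))
```

Quarantining events that fail validation keeps a renamed event (such as `LevelComplete` instead of `level_complete`) from silently splitting one feature into two.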

Conclusion

Key Takeaways

Using AI to analyze player behavior requires a player-first mindset. The best systems focus on personalization as a core part of the game design, aiming for specific outcomes like improving mastery, advancing the story, or fostering social interactions. Subtle adjustments, or invisible adaptation, can keep players engaged without making them aware of constant changes.

Before diving into AI, it’s crucial to define clear goals. Pinpoint the specific needs your game fulfills and link them to measurable data, such as session duration or how often players return. Incorporating designer-authored guardrails ensures that AI works within defined boundaries, selecting from preapproved options rather than creating new rules on its own. This approach helps maintain game balance and player trust. Games that embrace intelligent behavior adjustments often report higher player satisfaction.

Privacy and fairness should always be front and center. Collect only the data necessary for personalization and monitor AI models closely to catch any signs of bias early. As Lumenalta cautions:

Personalization that feels unfair, creepy, or pay-to-win will backfire, even if short-term metrics spike.

Testing models in shadow mode and keeping human oversight in place for critical decisions are essential steps in this process.

By following these principles, adaptive gameplay can continue to evolve, enhancing the gaming experience without compromising trust or enjoyment.

Looking Ahead

The future of gaming is moving toward runtime world mutation, where game environments, rules, and events shift dynamically based on player behavior models. Some projections claim that by April 2026, AI-driven NPCs will generate over 70% of unique player narratives per session, and that emotional modeling could boost retention rates by 2.5 times compared to traditional logic-based systems.

Emerging trends include cross-game learning, where AI learns a player’s style across multiple games to offer personalized experiences right from the start. Another exciting development is AI emotional intelligence, which can analyze in-game actions and even voice tone to adjust the narrative or difficulty based on the player’s mood. With over 212 million gamers in the U.S., even small improvements in personalization can lead to massive results at scale. The challenge lies in balancing cutting-edge innovation with a seamless, enjoyable experience - creating adaptive worlds that feel engaging without undermining player freedom or trust.

FAQs

What data should I track for player behavior AI?

Tracking player behavior data is crucial for understanding how users interact with your game. Focus on key areas like engagement metrics, retention rates, monetization activities, and gameplay patterns. These insights help paint a clear picture of player habits and preferences.

To take it a step further, include predictive indicators such as churn likelihood and player preferences. These elements allow AI-driven tools to analyze trends more effectively, giving you the chance to refine game design and improve the overall player experience. By combining current behavior data with predictive analytics, you can make smarter decisions that keep players more engaged and invested.

How do I use personalization without feeling creepy or unfair?

Be transparent about what you personalize, give players meaningful controls (such as granular privacy settings and opt-in difficulty assistance), and collect only the data you need. Keep AI choosing from designer-approved options, keep competitive and ranked modes on fixed rules, and avoid invasive techniques or surprising interventions, such as raising frustration to push spending. This privacy-conscious approach builds trust and lets players feel secure and in charge of their experience.

How can I reduce AI false positives in cheat detection?

To cut down on false positives, combine behavioral models (such as graph neural networks for interaction patterns) with statistical baselines of normal play, server-side validation of critical actions, and real-time monitoring. Run new models in shadow mode to measure false-positive rates before they act on players, use tiered responses such as warnings or temporary debuffs instead of instant bans, and escalate ambiguous or high-stakes cases to human review.