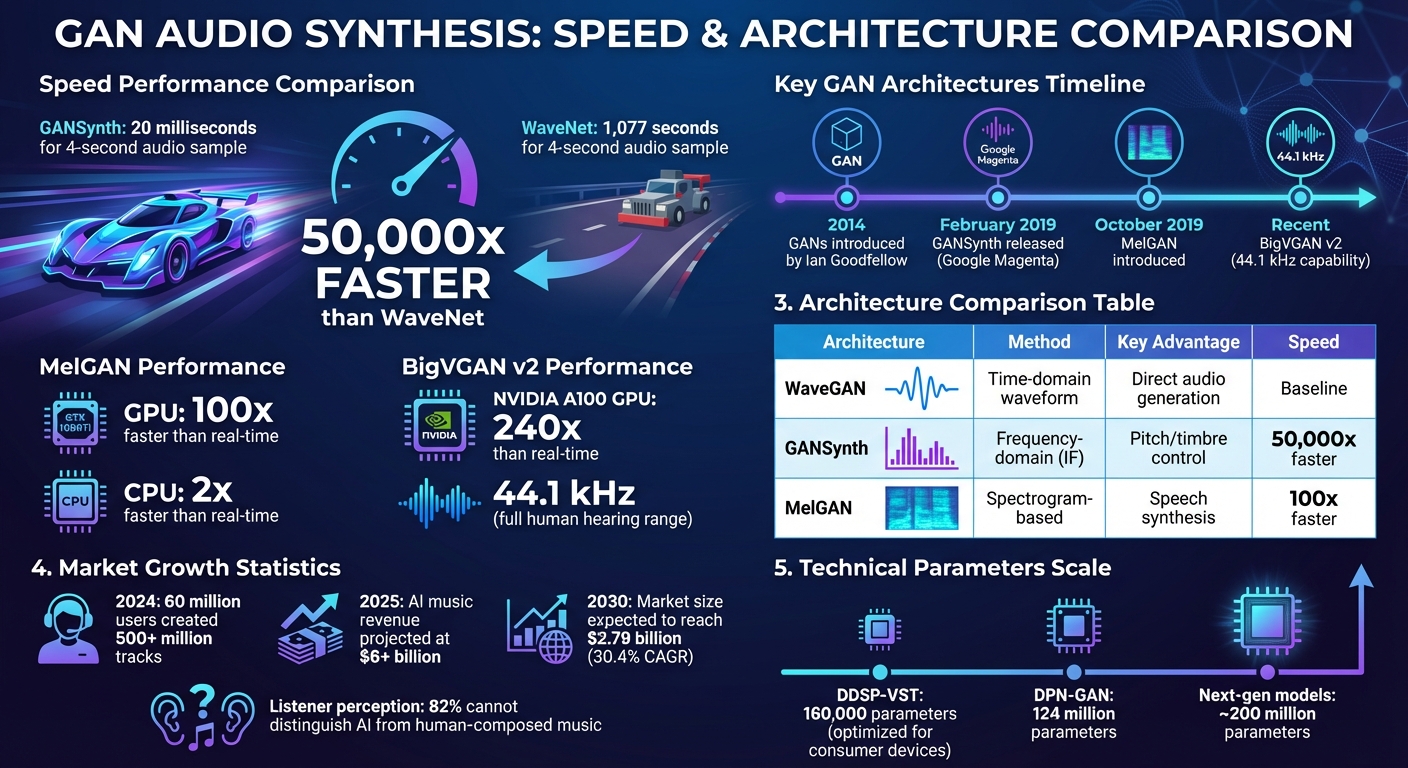

Generative Adversarial Networks (GANs) are reshaping audio synthesis by offering faster, more efficient ways to produce high-quality sounds. Unlike older models like WaveNet, which generate audio one sample at a time, GANs create entire audio sequences simultaneously. This approach drastically improves speed - up to 50,000 times faster than traditional methods - and allows for better control over global features like pitch and timbre.

Key advancements include:

- GANSynth: Introduced in 2019, it synthesizes audio faster than real-time while maintaining excellent sound quality.

- MelGAN: Converts spectrograms into audio at speeds over 100x faster than real-time on GPUs.

- BigVGAN v2: Capable of generating audio at 44.1 kHz, covering the full range of human hearing.

Challenges like phase alignment and training instability have been addressed through techniques like Wasserstein GAN, Phase Shuffle, and improved architectures. These innovations are transforming music production, sound design, and AI content generator tools and real-time audio tools, making them faster and more accessible.

GANs are now being combined with other AI technologies, such as Transformers, to further enhance audio synthesis capabilities. With the market for AI-generated music expected to grow rapidly, GANs are setting new standards for speed, quality, and control in sound creation.

GAN Audio Synthesis Speed Comparison: GANSynth vs WaveNet Performance

What Are Generative Adversarial Networks (GANs)?

GAN Basics and How They Work

Generative Adversarial Networks (GANs) are a type of deep learning framework where two neural networks - called the generator and the discriminator - compete to create data that mimics real-world samples. Introduced by Ian Goodfellow and his team in June 2014, GANs have made a huge impact in the field of machine learning. Here's how they work: the generator starts by creating data samples (like audio) from random noise taken from a latent space. Meanwhile, the discriminator evaluates these samples to decide whether they are real (from the training dataset) or fake (generated by the model). Through this process, the two networks improve iteratively - the generator gets better at "fooling" the discriminator, while the discriminator sharpens its ability to distinguish real from fake.

"The core idea of a GAN is based on the 'indirect' training through the discriminator... the generator is not trained to minimize the distance to a specific image, but rather to fool the discriminator." – Wikipedia

The ultimate goal is to reach a point where the generator's outputs are so realistic that the discriminator can no longer reliably tell the difference between real and generated data. This dynamic interaction is what makes GANs particularly effective for tasks like audio synthesis.

Why GANs Work Well for Audio Synthesis

GANs are particularly well-suited for audio synthesis for several reasons. Unlike autoregressive models such as WaveNet, which generate audio one sample at a time in a slow, sequential manner, GANs can produce entire audio sequences in parallel. This eliminates the bottleneck caused by step-by-step generation.

Another key strength of GANs lies in their ability to capture and manipulate global audio features. By generating audio from a single latent vector, GANs allow users to control broad characteristics like pitch and timbre more effectively. This approach simplifies global adjustments and ensures that musical structures remain coherent - something that autoregressive models often struggle with.

Efficiency is another major advantage. GANs use non-autoregressive, fully convolutional architectures, which means they don't need specialized kernels or complex distillation techniques to achieve real-time performance. For instance, MelGAN demonstrates how efficient GANs can be, running over 100 times faster than real time on a GTX 1080Ti GPU and more than twice as fast on an average CPU. Considering that CD-quality audio demands between 16,000 and 44,100 samples per second, this level of efficiency is crucial for practical applications.

sbb-itb-a759a2a

Challenges and Solutions in GAN-Based Audio Synthesis

Main Challenges of Audio Synthesis with GANs

Creating audio with GANs is no small feat - it’s a task that demands far more computational power than image generation. Just a few seconds of raw audio can contain hundreds of thousands of samples. For CD-quality audio, you're looking at 16,000 samples per second, which places a massive strain on processing resources compared to typical image-related tasks.

One major hurdle is phase alignment. High-quality audio depends on maintaining precise phase relationships between frequency components. When these relationships falter, the result is distorted or artifact-ridden audio. Jesse Engel from Google Magenta explains it well: “Upsampling convolution struggles with phase alignment for highly periodic signals”. This issue, known as phase precession, arises because the alignment between the start of a frame and the waveform shifts over time.

Another challenge is training instability, a common issue with GANs. These models are prone to problems like mode collapse and difficulties with convergence. Techniques like Wasserstein GAN with Gradient Penalty (WGAN-GP) have been developed to stabilize the training process. On top of that, transposed convolutions can introduce checkerboard artifacts, while standard convolutions often struggle to capture long-range dependencies, making it harder to produce coherent melodies or natural-sounding speech.

Technical Advances in GAN Performance

These challenges have driven researchers to develop innovative solutions. One standout example is GANSynth, introduced in February 2019 by a team from Google Magenta, including Jesse Engel and Adam Roberts. GANSynth tackled phase instability by generating instantaneous frequencies instead of raw phase components. Using the NSynth dataset, the team achieved high-quality audio with independent control over pitch and timbre. Impressively, GANSynth outperformed WaveNet baselines in human evaluations and synthesized audio about 50,000 times faster than traditional autoregressive models.

To combat artifacts caused by standard convolutions, researchers have refined GAN architectures. For instance, WaveGAN introduced a technique called Phase Shuffle, which randomly shifts activations to prevent the discriminator from overfitting to checkerboard artifacts. More recent models, like DPN-GAN, use periodic activation functions - such as kernel-based periodic ReLU - to better capture intricate, repetitive audio patterns. Some of these models have scaled up to 124 million parameters, allowing them to handle diverse datasets for both speech and music generation.

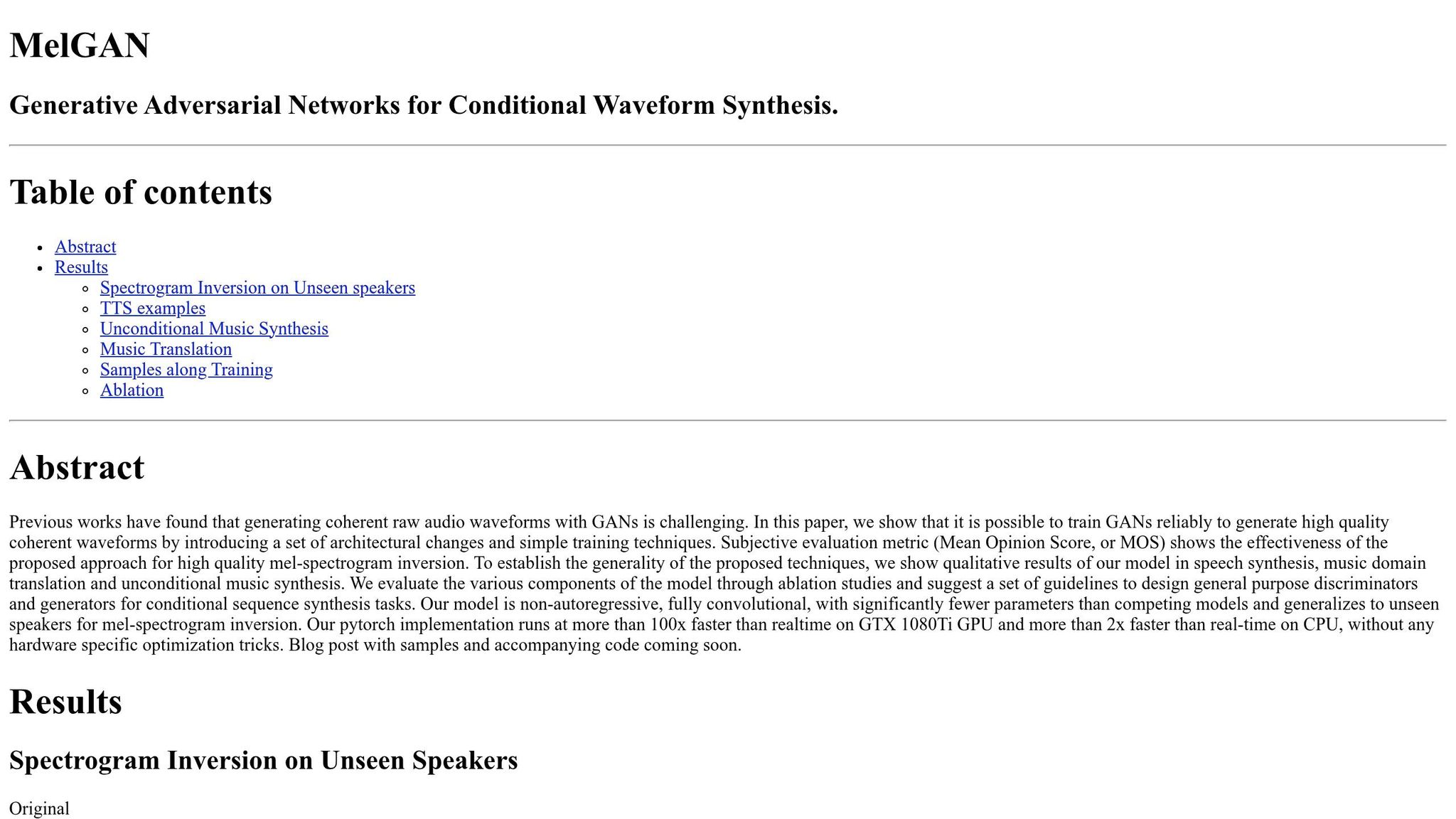

Another milestone came in October 2019 with the introduction of MelGAN by a team including Yoshua Bengio and Kundan Kumar. MelGAN demonstrated that GANs could reliably generate high-quality, coherent waveforms by incorporating specific architectural tweaks and straightforward training techniques. Its non-autoregressive, fully convolutional design, combined with multi-scale discriminators, made it possible to invert mel-spectrograms into audio at speeds 100 times faster than real-time on a GTX 1080Ti GPU. MelGAN also excelled at generalizing to unseen speakers, thanks to features like multi-scale discriminators and spectral energy-based loss scaling, which directly addressed issues with training stability and phase alignment. These improvements helped the model handle complex audio characteristics across varying volume levels.

Key GAN Architectures for Audio Synthesis

WaveGAN: Direct Audio Waveform Generation

WaveGAN focuses on creating raw time-domain audio by generating waveforms sample by sample. However, its reliance on upsampling convolutions introduces a problem known as phase precession. This issue causes the alignment between the start of a frame and the wave cycle to shift over time, leading to blurry and inconsistent outputs, particularly with highly periodic sounds like music. These challenges have prompted researchers to explore frequency-based and spectrogram-based GANs as alternatives.

GANSynth: Frequency-Based Synthesis

GANSynth works in the frequency domain and was introduced in February 2019 by Jesse Engel and the Google Magenta team. It uses a Progressive GAN structure to generate instantaneous frequency (IF) representations, addressing the phase coherence problems commonly found in time-domain models. This method also provides better control over pitch and timbre, making it highly effective for musical applications.

"For highly periodic sounds, like those found in music, GANs that generate instantaneous frequency (IF) for the phase component outperform other representations and strong baselines" – Jesse Engel, Research Scientist at Google AI

One of GANSynth's standout features is its use of a single global latent vector rather than time-distributed codes. This allows it to disentangle pitch and timbre across an entire audio clip, making it ideal for tasks like musical instrument synthesis and timbre interpolation. Additionally, GANSynth generates entire audio sequences in parallel, achieving speeds far beyond real-time processing on modern GPUs - approximately 50,000 times faster than a standard WaveNet.

While GANSynth shines in creating musical sounds, spectrogram-based approaches have shown strengths in speech synthesis and other audio applications.

MelGAN and SpecGAN: Spectrogram-Based Approaches

MelGAN and SpecGAN generate audio by working with spectrogram representations. MelGAN, for instance, converts mel-spectrograms back into audio using a non-autoregressive, fully convolutional design. This architecture allows it to operate over 100 times faster than real-time on a GTX 1080Ti GPU and more than twice as fast on a CPU.

"Previous works have found that generating coherent raw audio waveforms with GANs is challenging. In this paper, we show that it is possible to train GANs reliably to generate high quality coherent waveforms by introducing a set of architectural changes and simple training techniques" – Kundan Kumar et al.

MelGAN is particularly well-suited for speech synthesis, excelling in its ability to generalize to new, unseen speakers. On the other hand, SpecGAN enhances audio generation by using mel-scaling for spectrograms and increasing frequency resolution. This approach improves both the quality and diversity of the generated audio outputs.

How GANs Are Used in Music and Sound Design

Music Composition and Production

GANs are changing the way music is created and produced, offering tools that make workflows faster and more dynamic. For example, GANSynth can generate a four-second audio sample in just 20 milliseconds, compared to the 1,077 seconds needed by WaveNet. This speed allows composers to work in real-time, enabling them to experiment and refine their ideas more efficiently.

With GANs' ability to control global features like pitch and timbre, composers can explore unique sound transitions. Using techniques like spherical interpolation in the latent space, they can morph between instruments - such as transitioning from the sound of a flute to a trumpet - without the usual glitches or artifacts found in other models. Many artists now see GANs as creative collaborators, using them to produce unexpected sounds and then refining the results. As Holly Herndon, a musician and artist, puts it:

"It's not about replacing humans, it's about augmenting human expression".

Sound Effects and Foley Design

GANs are also making waves in sound effects and foley design, particularly for movies and video games. Advanced architectures like BigVGAN v2 can create realistic environmental sounds at sampling rates up to 44 kHz, covering the full range of human hearing. This includes replicating intricate details, such as the shimmering resonance of a crash cymbal, which were previously difficult to achieve with other methods.

The speed of these models is a game-changer for creators working under tight deadlines. Optimized GANs can generate audio waveforms up to 240 times faster than real-time on a single NVIDIA A100 GPU. Additionally, BigVGAN v2 was trained on datasets over 100 times larger than its predecessor, allowing it to handle a wide variety of audio types, from multi-language speech to environmental sounds. NVIDIA researchers Sang-gil Lee and Rafael Valle highlight its versatility:

"BigVGAN... shows strong robustness with various audio types, including speech, environmental sounds, and music".

These advancements are making high-quality, AI-driven audio tools more accessible than ever.

AI-Powered Tools for Content Creators

Today, several tools bring the power of GAN-based audio synthesis into the hands of creators. One example is DDSP-VST, a neural synthesizer plugin that works in real-time within Digital Audio Workstations (DAWs). It features "Tone Transfer", which can transform inputs like a voice into the sound of various musical instruments. By using a simplified pitch detection model with just 160,000 parameters - 137 times fewer than the original - it’s optimized for consumer devices.

Another tool, MIDI-DDSP, offers detailed control over note-level expressions such as vibrato, brightness, and attack noise. Models trained with GAN objectives have shown improved sound quality. Platforms like the Magenta web trainer also allow users to train custom neural synthesis models with only a few minutes of audio data. This means artists can create personalized sounds with minimal resources, bridging the gap between advanced GAN technology and practical, creative applications.

What's Next for GAN-Based Audio Synthesis

Better Realism and Customization

The next wave of GANs, like BigVGAN v2, now operates at 44.1 kHz, covering the entire range of human hearing. NVIDIA researchers Sang-gil Lee and Rafael Valle highlight its impact:

"The release of BigVGAN v2 pushes neural vocoder technology and audio quality to new heights, even reaching the limits of human auditory perception".

Future systems, such as Flow2GAN, are introducing innovative methods to enhance both speed and quality. By combining Flow Matching for stability with GAN fine-tuning, these models can generate high-quality audio in just 1–4 steps, a significant leap from the numerous steps required by traditional diffusion models. They also utilize advanced activation functions like "Snake" or periodic ReLU, which better capture the natural waveforms of sound. On top of this, scaling up architectures to around 200 million parameters allows these systems to reconstruct high-frequency details that were once unattainable.

The market for generative AI in music reflects this rapid growth. By 2030, it’s expected to reach $2.79 billion, growing at an annual rate of 30.4%. AI-generated music revenue alone could surpass $6 billion by 2025. In 2024, approximately 60 million users leveraged AI tools to create over 500 million tracks. Blind acoustic tests even revealed that 82% of listeners couldn’t distinguish between music composed by humans and AI.

These advancements are paving the way for GANs to integrate with other AI technologies, unlocking even more possibilities.

Combining GANs with Other AI Technologies

As hardware and algorithms evolve, GANs are increasingly being paired with other AI frameworks to push the boundaries of audio synthesis. Hybrid systems that integrate Transformers for long-range dependencies with RNNs for managing temporal dynamics, alongside GAN decoders, are driving progress. For example, Google DeepMind's NotebookLM Audio Overviews can generate two minutes of multi-speaker dialogue in under three seconds using a TPU v5e. Meanwhile, RNN-GAN combinations are refining temporal consistency even further. Researchers Zalán Borsos, Matt Sharifi, and Marco Tagliasacchi explain:

"AudioLM treats audio generation as a language modeling task to produce the acoustic tokens of codecs like SoundStream... making it a good candidate for modeling multi-speaker dialogues".

These hybrid systems, much like earlier GAN innovations, are enhancing both speed and control. Professional tools are already incorporating these advancements. Logic Pro 11 and Ableton Live 12 now include AI-powered features like stem separation, mastering assistance, and generative MIDI transformations. This integration is making AI-assisted audio creation more accessible for creators.

Looking ahead, there’s a shift toward semantic audio synthesis. Tools are emerging that allow creators to use natural language prompts instead of traditional controls like knobs and faders. TunePrompts Research captures this vision:

"The future of sound belongs entirely to those who can articulate their imagination with precise linguistic clarity".

In this evolving landscape, creators who can clearly communicate their artistic vision through language will be at the forefront of the next era in sound.

WaveGAN Explained!

Conclusion

GANs have transformed audio synthesis by generating complete sequences all at once, achieving speeds that far surpass traditional methods like WaveNet. Modern IF-Mel GANs have demonstrated dramatic improvements in performance, setting a new standard for efficiency and quality.

One standout advancement is the introduction of global latent control. With this, a single latent vector allows for independent adjustments to attributes such as pitch and timbre, ensuring consistent audio characteristics across an entire clip. Jesse Engel, a Research Scientist at Google AI, highlighted the potential of this technology:

"This work opens up the intriguing possibility for realtime neural network audio synthesis on device, allowing users to explore a much broader pallete of expressive sounds".

These innovations have paved the way for audio fidelity at levels previously thought unattainable. From WaveGAN's direct waveform generation to GANSynth's frequency-based synthesis, and now to DPN-GAN models with up to 124 million parameters, each step has pushed the boundaries further. Today’s systems can operate at 44 kHz and achieve speeds up to 240 times faster than real time on high-end GPUs. As GANs continue to evolve and merge with cutting-edge AI technologies, they are poised to redefine what’s possible in music and sound design.

FAQs

Do GAN-based audio models need a GPU to run in real time?

Yes, GAN-based audio models typically need a GPU to operate in real time. This is because generating high-quality, seamless audio demands significant computational power. For instance, models like GANSynth use parallel sampling on GPUs to deliver the necessary performance.

How do GAN vocoders avoid phase artifacts and 'buzzy' audio?

GAN vocoders tackle phase artifacts and "buzzy" audio by incorporating phase-aware modeling techniques. These techniques include anti-aliased twin deconvolution modules, which help reduce aliasing, and multi-resolution real and imaginary loss functions, which refine spectral detail and improve phase reconstruction. By combining these methods, GAN vocoders deliver higher-quality audio with more natural sound synthesis.

When should I use GANSynth vs MelGAN for a project?

GANSynth is designed for creating high-quality musical sounds in the spectral domain. It stands out for producing realistic tones with intricate details, making it a great choice for tasks that demand precision and consistency in musical synthesis.

On the flip side, MelGAN is tailored for fast, real-time applications such as speech synthesis and spectrogram inversion. Its ability to generate raw waveforms quickly makes it perfect for scenarios where efficiency and simplicity are key.