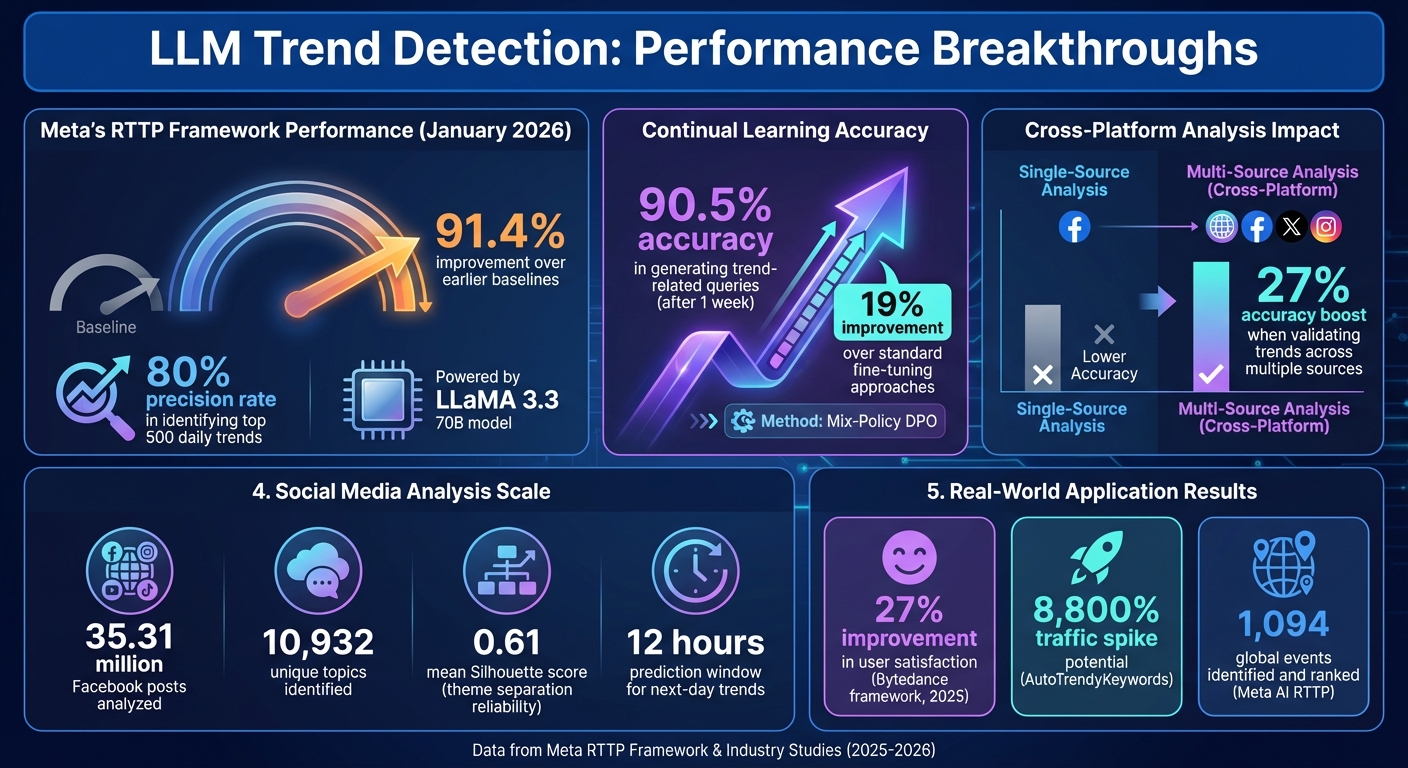

Large Language Models (LLMs) are changing how trends are identified online. Unlike older methods that rely on tracking viral keywords or hashtags, LLMs analyze context, predict emerging topics, and detect subtle patterns early. For example, Meta's RTTP framework, powered by the LLaMA 3.3 model, reported a 91.4% improvement over earlier baselines in trend detection in January 2026. These systems process vast amounts of data, predict topic momentum, and analyze sentiment to forecast which trends will gain traction. They also work across languages and platforms, helping businesses and creators stay ahead.

Key Points:

- LLMs predict trends earlier by analyzing context, not just keywords.

- Models like Meta's RTTP and Gemini 3.0 achieve high precision in detecting emerging topics.

- Sentiment analysis helps identify trends with lasting impact versus short-lived ones.

- Multilingual capabilities allow global trend analysis, breaking language barriers.

- Challenges include biases in training data and maintaining data quality.

These advancements are reshaping content strategies, from social media to SEO with AI tools, enabling creators to act on trends before they peak.

How LLMs Improve Trend Detection

[Figure: LLM trend detection performance metrics and improvements]

LLMs don’t just track mentions - they dig deeper to understand why topics resonate. By leveraging transformer architecture and attention mechanisms, they highlight context-specific words and phrases, uncovering subtle patterns that basic keyword tools might miss.

Data Processing and Pattern Recognition

The real game-changer lies in how LLMs process data before trends become obvious. Instead of waiting for search volumes to spike, systems like Meta's RTTP framework generate search queries from new content, whether it’s social media posts, news articles, or discussions. This synthetic signal generation addresses the cold-start problem, where a new topic has too little query history to register, and allows for earlier trend detection.

In January 2026, Meta's RTTP framework, powered by the LLaMA 3.3 70B model, began converting Facebook and Meta AI posts into search queries. By factoring in creator authority (follower counts, verification status) and engagement strength (comments and shares over likes), the system achieved an 80% precision rate in identifying the top 500 daily trends. This marked a 91.4% improvement over earlier baselines.
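
The weighting idea described above can be sketched in a few lines. This is a minimal illustration, not RTTP's actual scoring: the `Post` fields, the weights, and the verified bonus are all assumptions chosen only to show comments and shares counting for more than likes.

```python
import math
from dataclasses import dataclass


@dataclass
class Post:
    followers: int
    verified: bool
    likes: int
    comments: int
    shares: int


# Illustrative weights (assumptions): deep engagement outweighs likes.
LIKE_W, COMMENT_W, SHARE_W = 1.0, 3.0, 4.0
VERIFIED_BONUS = 1.25  # hypothetical boost for verified creators


def authority(post: Post) -> float:
    """Log-scaled follower count, boosted for verified creators."""
    base = math.log10(post.followers + 10)  # +10 so zero followers gives 1.0
    return base * (VERIFIED_BONUS if post.verified else 1.0)


def engagement(post: Post) -> float:
    """Weighted engagement: comments and shares count more than likes."""
    return LIKE_W * post.likes + COMMENT_W * post.comments + SHARE_W * post.shares


def signal_score(post: Post) -> float:
    """Combine creator authority with damped engagement strength."""
    return authority(post) * math.log1p(engagement(post))
```

With these weights, a post with 50 comments and 50 shares outscores a post with 300 likes from the same creator, even though it has fewer total interactions.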

LLMs excel at filtering noise through Named Entity Recognition (NER), tracking momentum with temporal analysis, and interpreting the "why" behind data patterns. Combining these techniques makes them particularly effective in low-traffic environments - places where tools like Google Trends struggle due to insufficient query volume.

To keep up with shifting interests, LLMs use continual learning techniques. For instance, Mix-Policy DPO prevents catastrophic forgetting, allowing models to retain older knowledge while adapting to new topics. After just one week, this method maintained 90.5% accuracy in generating trend-related queries, a 19% improvement over standard fine-tuning approaches.
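
The article does not describe how Mix-Policy DPO mixes its training data, so the sketch below shows only the standard Direct Preference Optimization loss for a single preference pair, which is the objective such variants build on. The function name, argument names, and the default `beta` are assumptions for illustration.

```python
import math


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Standard DPO loss for one (chosen, rejected) pair.

    Inputs are sequence log-probabilities under the trained policy and
    under a frozen reference model.
    """
    # How much more the policy prefers the chosen answer than the reference does.
    margin = ((policy_chosen_logp - ref_chosen_logp)
              - (policy_rejected_logp - ref_rejected_logp))
    x = beta * margin
    # -log(sigmoid(x)) computed in a numerically stable way.
    if x >= 0:
        return math.log1p(math.exp(-x))
    return -x + math.log1p(math.exp(x))
```

Because the loss is measured relative to the reference model, the policy is penalized for drifting far from its earlier behavior, which is one reason preference-based updates are used when older knowledge must be retained.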

Sentiment Analysis and Popularity Forecasting

LLMs don’t stop at identifying trends - they also analyze emotional tone. Understanding how people feel about a topic is just as important as knowing what they’re talking about. By assessing emotional intensity, LLMs can predict which topics are likely to go viral.

This analysis goes beyond simple positive or negative classifications. Emotional tracking sharpens momentum forecasting, helping LLMs distinguish between fleeting viral moments and trends with lasting impact. They also monitor how trends spread across platforms - moving from niche spaces like Reddit or TikTok to mainstream outlets - allowing for more accurate predictions of when a trend will peak.

Modern forecasting models prioritize "deep engagement" (comments, reshares) over "shallow engagement" (likes), offering a clearer picture of audience investment. This weighting system improves momentum predictions significantly. Cross-platform analysis further enhances precision - validating trends across social media, news outlets, and search data can boost accuracy by up to 27% compared to single-source analysis.
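
Cross-platform validation can be approximated by keeping only topics that appear on more than one independent source. The sketch below is a simplified illustration; the `(source, topic)` input format and the `min_sources` threshold are assumptions, and real systems would match topics semantically rather than by exact string.

```python
from collections import defaultdict


def cross_platform_topics(mentions, min_sources=2):
    """Return topics seen on at least `min_sources` distinct sources.

    `mentions` is an iterable of (source, topic) pairs. Topics are matched
    case-insensitively after trimming whitespace.
    """
    sources_by_topic = defaultdict(set)
    for source, topic in mentions:
        sources_by_topic[topic.strip().lower()].add(source)
    return {
        topic: sorted(sources)
        for topic, sources in sources_by_topic.items()
        if len(sources) >= min_sources
    }
```

For example, a topic mentioned on both a social platform and a news feed is kept, while one seen on a single platform is treated as unconfirmed.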

"The integration of Large Language Models (LLMs) with topic modeling represents a significant advancement... LLMs capture contextual relationships and semantic nuances that bag-of-words approaches miss." - Aron Hack

Multilingual and Global Trend Insights

LLMs also break language barriers, unlocking insights on a global scale. By combining automated language detection with machine translation, they analyze web signals - social media posts, news articles, and reviews - across multiple languages. This ensures global patterns aren’t siloed.

But it’s more than just translation. LLMs like Command R+ and LLaMA 3.3-70B-Instruct generate contextually relevant event keywords across domains like technology, health, and sports. They also perform cross-cultural semantic analysis, uncovering the deeper meanings and social cues behind trends to understand why certain topics resonate with specific demographics or regions.

Geographic hotspot identification becomes possible through normalized indices of search interest and scraped data. LLMs can pinpoint where a trend is gaining traction - whether in India, Brazil, or Germany - helping creators tailor localized strategies. With the ability to process context windows exceeding 100,000 tokens, modern LLMs analyze months of longitudinal trend data instead of focusing solely on recent activity.
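
A normalized index of this kind can be sketched as follows: divide topic queries by total queries in each region, then rescale so the strongest region scores 100. The region codes and input dictionaries are hypothetical, and this only mirrors the general idea of relative-interest indices rather than any specific provider's formula.

```python
def normalized_interest(topic_counts, total_counts):
    """Scale each region's share of topic queries to a 0-100 index.

    Regions with no recorded total traffic are skipped.
    """
    shares = {
        region: topic_counts[region] / total_counts[region]
        for region in topic_counts
        if total_counts.get(region, 0) > 0
    }
    peak = max(shares.values(), default=0.0)
    if peak == 0:
        return {region: 0 for region in shares}
    return {region: round(100 * share / peak) for region, share in shares.items()}


def hotspot(index):
    """Region with the highest normalized interest, or None if empty."""
    return max(index, key=index.get) if index else None
```

Normalizing by total traffic matters: a region with fewer raw mentions can still be the hotspot if the topic makes up a larger share of what people there are searching for.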

A 2026 study on Meta AI’s RTTP framework showcased these multilingual capabilities, with LLMs identifying and ranking 1,094 global events from Wikipedia data spanning 2020 to 2024. Such large-scale analysis would be unmanageable with traditional online content writing tools.

"Advancements in multilingual LLMs promise to bridge these gaps... This capability will be indispensable for brands navigating diverse markets with varying cultural norms and sensitivities." - Averi Academy

Applications of LLMs in Trend Detection

LLMs have transformed how we detect and act on online trends. By analyzing context, sentiment, and timing, these models provide powerful tools for improving social media strategies and SEO practices.

Social Media Trend Analysis

Social media platforms produce enormous amounts of unstructured data daily. LLMs step in to make sense of this chaos, translating slang, emojis, and even sarcasm into meaningful insights - something traditional keyword tracking tools struggle to achieve.

One of the standout benefits of LLMs is their ability to predict trends early. For example, research analyzing 35.31 million Facebook posts identified 10,932 unique topics and built a predictive model with a mean Silhouette score of 0.61, which indicates reasonably well-separated themes on a scale from -1 to 1. This capability allows LLMs to forecast tomorrow’s trending topics within just 12 hours.
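
For readers unfamiliar with the Silhouette score, the sketch below computes the mean silhouette coefficient in plain Python using Euclidean distance. It is a small reference implementation for intuition, not the cited study's pipeline; in practice the points would be topic embeddings, which are an assumption here.

```python
import math


def silhouette_mean(points, labels):
    """Mean silhouette coefficient over all points (Euclidean distance)."""
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)
    if len(clusters) < 2:
        raise ValueError("need at least two clusters")

    scores = []
    for point, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            scores.append(0.0)  # usual convention for singleton clusters
            continue
        # a: mean distance to other members of the same cluster
        # (the point's distance to itself is 0, so divide by n - 1).
        a = sum(math.dist(point, q) for q in own) / (len(own) - 1)
        # b: mean distance to the nearest other cluster.
        b = min(
            sum(math.dist(point, q) for q in other) / len(other)
            for key, other in clusters.items()
            if key != label
        )
        denom = max(a, b)
        scores.append(0.0 if denom == 0 else (b - a) / denom)
    return sum(scores) / len(scores)
```

Scores near 1 mean points sit much closer to their own cluster than to any other, scores near 0 mean clusters overlap, and negative scores suggest points were assigned to the wrong cluster.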

In 2025, Bytedance researchers, including Kaichun Wang and Yanguang Chen, introduced a multi-stage hotspots detection framework for conversational AI. This system used generative query indexing to connect static events with dynamic user queries. Online A/B tests revealed a 27% improvement in user satisfaction, measured by positive-negative feedback ratios, compared to older search engine methods.

Another game-changing feature is cross-platform migration tracking. LLMs monitor how topics spread across different networks, measuring not just the volume of engagement but its speed. This "velocity tracking" helps identify which topics are poised to go mainstream. Tools like Gemini 3.0 take this a step further by using "search grounding" to analyze trends that are just minutes old.
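
Velocity tracking can be illustrated with simple differences over a series of mention counts. The sketch below assumes `counts` holds mentions per fixed interval (for example, per hour); the `window` size and the acceleration rule are illustrative choices, not a documented method.

```python
def velocity(counts):
    """Change in mentions between consecutive intervals."""
    return [later - earlier for earlier, later in zip(counts, counts[1:])]


def is_accelerating(counts, window=3):
    """True if mentions grew, and grew faster each step, over the last `window` changes."""
    recent = velocity(counts)[-window:]
    if len(recent) < window:
        return False  # not enough history to judge
    growing = recent[0] > 0
    speeding_up = all(b > a for a, b in zip(recent, recent[1:]))
    return growing and speeding_up
```

A series such as `[10, 12, 16, 24, 40]` is flagged as accelerating, while `[40, 40, 41, 41, 41]` is not, even though its volume is higher. That difference between speed and volume is what velocity tracking is meant to capture.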

These advanced capabilities in social media analysis also create new opportunities for SEO strategies.

Content Optimization for SEO

LLMs are pushing SEO beyond the traditional reactive approach of chasing popular keywords. Instead, they enable a forward-thinking strategy by predicting which keywords will gain traction. Systems like AutoTrendyKeywords integrate real-time data from Google Trends and social platforms to continuously update SEO keywords.

For example, AutoTrendyKeywords, built with Llama 3.1 (70B) and Google Trends data, automates the creation of optimized metadata, long-tail keywords, and dynamic URL paths. This approach has driven traffic spikes of up to 8,800%, capturing niche audiences effectively.

| Feature | Traditional SEO | LLM-Powered SEO |
|---|---|---|
| Keyword Selection | Manual, based on historical data | Automated, based on real-time trends |
| Adaptability | Slow; requires dedicated teams | Instant; adjusts to viral shifts 24/7 |
| Context | Keyword-matching | Semantic understanding and intent |
| URL Structure | Static paths | Dynamic, keyword-rich paths |
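
To make the "dynamic, keyword-rich paths" row concrete, here is a minimal sketch of turning trending keywords into a URL path. The `slugify` and `trend_path` helpers and the `max_keywords` limit are hypothetical and are not taken from AutoTrendyKeywords.

```python
import re
import unicodedata


def slugify(keyword: str) -> str:
    """Lowercase, strip accents, and join words with hyphens."""
    ascii_text = (
        unicodedata.normalize("NFKD", keyword)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    return re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")


def trend_path(section: str, keywords, max_keywords: int = 3) -> str:
    """Build a path like /section/keyword-one-keyword-two."""
    slugs = [s for s in (slugify(k) for k in keywords[:max_keywords]) if s]
    parts = [slugify(section)]
    if slugs:
        parts.append("-".join(slugs))
    return "/" + "/".join(parts)
```

Capping the number of keywords keeps paths readable; in a live system, any path change would also need redirects from the old URL, which this sketch does not cover.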

For content creators using tools like the AI Blog Generator Directory, these advancements mean staying ahead of search trends without constant manual effort. Live search grounding also helps keep content accurate and up-to-date, reducing the risk of errors or outdated information.

Challenges and Limitations of LLMs in Trend Detection

LLMs face several obstacles when it comes to identifying content trends, often due to the quality of their training data and inherent biases within their architecture.

Bias and Ethical Considerations

LLMs don’t just reflect existing biases - they can create new ones. Research has found that these models may develop "novel social biases" when their training data fails to represent diverse demographics. This lack of representation results in skewed patterns that can persist in trend analysis.

The political and ideological leanings of different models also vary significantly. For instance, Gemini tends to lean right, while GPT skews left. This means the same politically charged trend could be classified differently depending on the model. Additionally, studies of news generation revealed troubling patterns. Grover, for example, exhibited bias against women in 73.89% of its content. It also increased the use of White-race-specific words by 20.07% while reducing Black-race-specific words by 11.74%.

"LLMs are not merely passive mirrors of human social biases, but can actively create new ones from experience." - Addison J. Wu, Researcher

Geographic biases add another layer of complexity. Some models align more with Latin American perspectives in simulated UN votes, while others show strong disagreement with nations like the US or China. When trends intersect multiple demographic factors - such as gender and race - models often falter. A study involving 46,781 test entries found that around 12% of biased instances targeted multiple demographic axes. Even fine-tuned models exhibited persistent performance gaps across these categories. Such biases distort trend analysis, potentially misrepresenting key signals.

These issues highlight how bias, both inherited and newly created, can undermine the reliability of LLMs in detecting trends. But bias isn’t the only challenge - data quality plays an equally critical role.

Dependence on Data Quality

The quality of training data has a direct impact on a model's precision. Noisy or flawed data can significantly reduce accuracy. For example, one study showed that precision dropped from 89% to 72% when training data was poorly curated. This leads to unreliable insights and missed opportunities to identify trends.

In September 2024, researchers from Amazon and the Technology Innovation Institute analyzed the ToolBench dataset, which contained 125,000 instances. They found that over 33% had parameter alignment errors. After filtering for high-quality data, they trained a model on just 10,000 clean instances, achieving a 0.54 pass rate - outperforming the original model trained on 73,000 unvalidated instances, which only managed a 0.45 pass rate.
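
The kind of filtering described above can be sketched as a schema check on each training instance. The record layout (`tool`, `arguments`) and the schema format below are assumptions for illustration and do not reflect ToolBench's actual data format or the researchers' validation rules.

```python
def has_aligned_parameters(instance, schemas) -> bool:
    """True if the call names a known tool, supplies every required
    parameter, and uses no parameter the tool does not define."""
    schema = schemas.get(instance["tool"])
    if schema is None:
        return False
    supplied = set(instance["arguments"])
    required = set(schema["required"])
    allowed = required | set(schema.get("optional", ()))
    return required <= supplied <= allowed


def filter_clean(instances, schemas):
    """Keep only instances whose arguments match the tool schema."""
    return [item for item in instances if has_aligned_parameters(item, schemas)]
```

A check like this is cheap to run over a whole dataset, which is why filtering before training can be more practical than trying to correct a model after it has learned from misaligned examples.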

"Even a small amount of malicious or poor quality training data can have a massively negative impact on a model's performance, exposing users to significant security and quality issues." - Tariq Shaukat, CEO, Sonar

Poor data quality also leads to hallucinated trends. When training data includes unverified or contradictory information, LLMs may generate fabricated statistics or references that seem convincing but are entirely false.

The vast scale of modern LLMs makes maintaining data quality even harder. For example, Meta's Llama 2 was trained on 1.8 trillion tokens, while Llama 3.1 scaled up to 15.6 trillion tokens. At such massive scales, automated curation becomes necessary, but even AI-driven tools struggle to catch all inconsistencies, toxic patterns, or mislabeled data. These challenges directly affect the accuracy of trend detection, leading to unreliable insights and missed opportunities.

Conclusion and Future of LLM-Powered Trend Detection

Key Takeaways

Large Language Models (LLMs) have reshaped how content creators and marketers identify trends. Unlike older methods that relied on waiting for search volume surges, LLMs generate synthetic signals from emerging content, tackling the challenge of spotting trends in their earliest stages. A standout example is Meta's RTTP framework, which, as of January 2026, demonstrated a 91.4% improvement in identifying low-traffic "tail" trends compared to traditional approaches.

For creators using platforms like the AI Blog Generator Directory, this means quicker insights and more precise forecasting. LLMs transform raw data into straightforward, actionable insights, making trend analysis accessible even for those without a background in data science. This marks a shift from reactive social listening to predictive content creation, a movement that's already gaining traction.

These advancements are just the beginning, setting the stage for even more dynamic developments in trend detection.

Future Developments in LLM Trend Detection

The future of trend detection promises even greater innovation. One major step forward is the adoption of systems that update daily through continual learning. For instance, Meta's Mix-Policy DPO achieved 90.5% query generation accuracy over four weeks, reflecting a 19% improvement over standard fine-tuning approaches.

Real-time grounding is becoming a standard feature. Advanced models like Gemini 3.0 access data that's only minutes old, which largely mitigates the issue of training cutoffs. With context windows exceeding 100,000 tokens, these systems can analyze months of data in a single query. The integration of multimodal capabilities - like interpreting charts, images, and videos - extends trend detection beyond text.

A quote from Stormy AI underscores this transformation:

"Gemini 3.0 has this capability to bring whatever idea you have to life... it's about helping you bring anything to life by leveraging world-class intelligence." - Stormy AI

Pricing has also become more accessible. Gemini 3.0 Pro, for example, costs around $2 per million input tokens and $12 per million output tokens, making high-volume research feasible even for smaller agencies. As these tools continue to evolve, the focus will likely shift from merely identifying trends to understanding their trajectories and creating content that anticipates audience needs before audiences themselves recognize them.
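
As a rough check on those prices, the arithmetic is simple enough to script. The rates below are the approximate figures quoted above, and the workload in the example is hypothetical.

```python
# Approximate rates quoted in the article (USD per million tokens).
INPUT_USD_PER_MILLION = 2.0
OUTPUT_USD_PER_MILLION = 12.0


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD for a given token volume."""
    return (
        input_tokens * INPUT_USD_PER_MILLION
        + output_tokens * OUTPUT_USD_PER_MILLION
    ) / 1_000_000


# Hypothetical monthly workload: 50M input tokens, 2M output tokens.
monthly = estimate_cost_usd(50_000_000, 2_000_000)  # 100 + 24 = 124.0
```

Trend research is typically input-heavy (many posts read, short summaries written), so the lower input rate dominates the total.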

FAQs

How do LLMs spot trends before hashtags or search volume spike?

Large language models (LLMs) excel at spotting trends early by diving into real-time content streams like news articles, social media posts, and online forums. By analyzing this digital chatter, they can identify subtle shifts in conversations and behaviors. What sets them apart is their ability to create synthetic search signals - essentially interpreting patterns in the data to predict trends before traditional search volumes reflect them. This gives organizations a head start, allowing them to act on emerging topics before they gain widespread attention. In short, LLMs turn messy, unstructured data into clear, actionable insights for forecasting trends.

How can I reduce bias and hallucinated “trends” in LLM outputs?

To reduce bias and avoid fabricated trends in outputs from large language models (LLMs), focus on factual fine-tuning to align responses with verified data. Techniques like direct preference optimization can also help steer outputs toward accuracy. Use strategies such as uncertainty estimation, attention pattern analysis, and self-consistency checks to identify and address inaccuracies. Additionally, incorporating retrieval-augmented generation and external fact-checking tools can help ensure the information provided is based on trustworthy sources.
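
A self-consistency check can be as simple as asking the same question several times and accepting an answer only when most samples agree. The sketch below covers just the agreement step; how the `answers` are sampled depends on the model API and is left out, and the `min_agreement` threshold is an assumption.

```python
from collections import Counter


def self_consistency(answers, min_agreement=0.6):
    """Return (majority_answer, agreement_share).

    The answer is None when the most common response falls below
    `min_agreement`, signalling that the output should not be trusted.
    """
    if not answers:
        raise ValueError("need at least one sampled answer")
    normalized = [answer.strip().lower() for answer in answers]
    top, count = Counter(normalized).most_common(1)[0]
    share = count / len(normalized)
    return (top if share >= min_agreement else None), share
```

Exact string matching is a deliberate simplification. For free-form trend descriptions, agreement would more realistically be judged by semantic similarity, but the accept-or-reject logic stays the same.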

What data sources should I combine for more accurate trend forecasts?

To refine trend forecasts, use a mix of data sources like web scraping signals, search volume metrics (such as Google Trends), social media conversations, news articles, and large text datasets. Pairing these inputs with large language models (LLMs) improves precision by offering deeper contextual analysis, spotting subtle patterns, and assessing the impact of worldwide events.