Understanding your audience is the key to creating impactful content and driving results. This guide reveals how AI can transform audience analysis, helping you uncover patterns, predict behaviors, and personalize interactions. Here’s what you’ll learn:

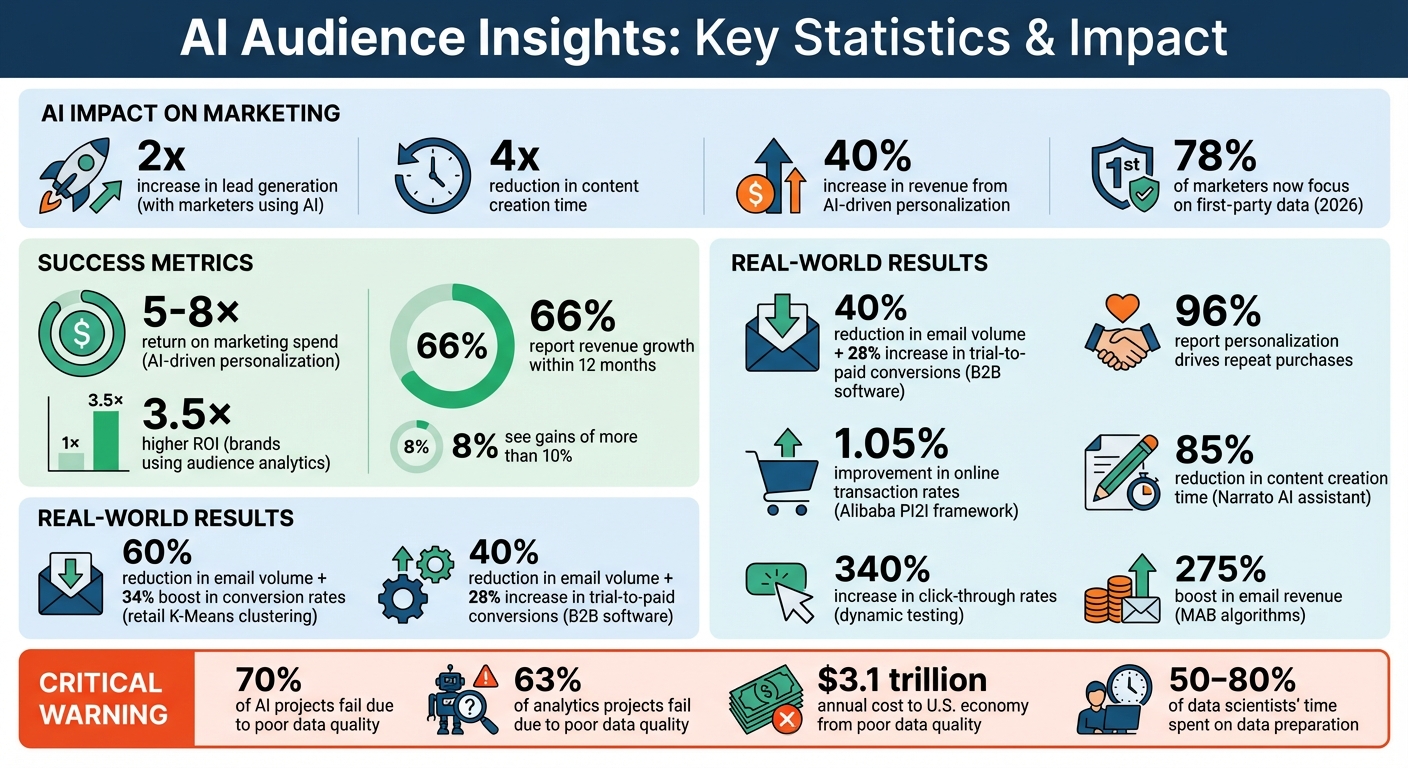

- AI boosts results: Marketers using AI see a 2x increase in lead generation and a 4x reduction in content creation time.

- Behavioral insights matter: These go beyond demographics to explain why users act, helping you create tailored strategies.

- AI tools in action: Techniques like clustering, collaborative filtering, and decision trees deliver real-time insights and improve customer journeys.

- Data preparation is critical: Clean, structured data is essential for AI success, and privacy laws like CPRA demand compliance.

- Practical applications: AI-driven personalization increases revenue by 40%, and dynamic testing optimizes strategies for better ROI.

AI isn’t just about automation; it’s about understanding your audience at a deeper level to drive better engagement and results.

AI Audience Insights Impact: Key Statistics and ROI Metrics



Core AI Techniques for Audience Behavior Analysis

AI has the ability to transform raw data into meaningful strategies by uncovering audience patterns. Three key techniques - clustering, collaborative filtering, and decision trees - play a central role in this process. Each method serves a specific function, from grouping similar users to predicting preferences and mapping customer journeys. These tools are the foundation for generating actionable audience insights in real time.

Behavioral Segmentation with Clustering

Clustering relies on unsupervised machine learning to group audiences based on their behavior, without needing predefined labels. For example, instead of manually defining "high-value customers" as those spending over $500, clustering algorithms like K-Means automatically detect patterns in data such as engagement levels, purchase history, and content consumption.

Here’s how K-Means works: it places k cluster centers (called centroids), assigns each user to the nearest centroid, recalculates each centroid as the average of the users assigned to it, and repeats until the groupings stop changing. Because the algorithm is distance-based, data should be standardized first (for example, with z-score normalization) so that a feature measured on a large scale, such as revenue in dollars, doesn’t overshadow the others. The Elbow Method and Silhouette Scores help determine the ideal number of clusters for your dataset.
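To make the loop concrete, here is a minimal pure-Python sketch of K-Means (Lloyd's algorithm) with z-score normalization: assign each user to the nearest centroid, move each centroid to the mean of its members, and repeat until nothing changes. The three behavioral features and the six sample users are hypothetical, and in production you would more likely use a library implementation such as scikit-learn's `KMeans`.

```python
import math
import random


def z_score(rows):
    """Standardize each feature column to mean 0 and standard deviation 1."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    # Population std; fall back to 1.0 for constant columns to avoid dividing by zero.
    stds = [math.sqrt(sum((x - m) ** 2 for x in c) / len(c)) or 1.0
            for c, m in zip(cols, means)]
    return [[(x - m) / s for x, m, s in zip(row, means, stds)] for row in rows]


def sq_dist(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, iters=100, seed=0):
    """Cluster points into k groups; returns (labels, centroids, inertia)."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]  # random users as starting centers
    labels = None
    for _ in range(iters):
        # Assignment step: each user joins its nearest centroid.
        new_labels = [min(range(k), key=lambda j: sq_dist(p, centroids[j]))
                      for p in points]
        # Update step: move each centroid to the mean of its members.
        for j in range(k):
            members = [p for p, lab in zip(points, new_labels) if lab == j]
            if members:
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
        if new_labels == labels:  # assignments stopped changing: converged
            break
        labels = new_labels
    # Inertia (within-cluster sum of squares) is what the Elbow Method plots against k.
    inertia = sum(sq_dist(p, centroids[lab]) for p, lab in zip(points, labels))
    return labels, centroids, inertia


# Hypothetical users: [sessions per week, average order value ($), days since last visit]
users = [[12, 80, 1], [10, 95, 2], [11, 70, 3],
         [2, 0, 40], [1, 5, 35], [3, 0, 50]]
scaled = z_score(users)
labels, centroids, inertia = kmeans(scaled, k=2)
print("cluster labels:", labels)

# Elbow Method: look for the k where inertia stops dropping sharply.
for k in range(1, 5):
    print(k, round(kmeans(scaled, k)[2], 3))
```

Standardizing before clustering matters here: without it, average order value (tens of dollars) would dominate sessions per week (single digits) in every distance calculation.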

For instance, a retail business using K-Means clustering managed to cut email volume by 60% while boosting conversion rates by 34%. Similarly, a B2B software company saw a 40% reduction in email volume and a 28% increase in trial-to-paid conversions after analyzing 12,000 trial users through behavioral clustering.

It’s important to balance statistical accuracy with practicality. Although eight clusters may be ideal statistically, managing eight separate strategies might not be feasible. Instead, focus on a manageable number of clusters and update them regularly - at least weekly - to reflect evolving user behavior. You can then create easy-to-understand personas for each cluster based on their average characteristics. For example:

| Cluster Persona | Behavioral Characteristics | Content/Marketing Strategy |
|---|---|---|
| Champions | High engagement, high revenue, recent activity | Exclusive offers, early access, loyalty rewards |
| Loyalists | Regular visitors, moderate spend, consistent engagement | Upsell/cross-sell, related product bundles |
| Browsers | High page views, low conversion, mobile-heavy | Social proof, limited-time offers, conversion nudges |
| Window Shoppers | Low engagement, no purchases, starting to lapse | Re-engagement campaigns, "We miss you" discounts |
| At-Risk/Churned | Inactive for 30+ days, no recent purchases | Heavy win-back discounts, new product announcements |

Predicting Preferences with Collaborative Filtering

Collaborative filtering (CF) predicts user preferences by analyzing patterns in how similar users interact with content. This technique powers recommendation systems, offering personalized suggestions based on shared behaviors. CF operates in two main ways: user-based CF (finding users with similar tastes) and item-based CF (identifying items that attract similar users).
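Here is a minimal sketch of item-based CF using cosine similarity on binary "read / didn't read" data. The users, article slugs, and the scoring rule (summing similarities to articles a user already read) are illustrative assumptions, not a production recommender.

```python
import math
from collections import defaultdict

# Hypothetical engagement data: user -> set of articles they read.
interactions = {
    "ana": {"seo-basics", "email-tips", "ai-tools"},
    "ben": {"seo-basics", "ai-tools"},
    "cara": {"email-tips", "newsletter-growth"},
    "dev": {"seo-basics", "newsletter-growth"},
}


def item_similarity(data):
    """Cosine similarity between items, based on which users engaged with each one."""
    users_by_item = defaultdict(set)
    for user, items in data.items():
        for item in items:
            users_by_item[item].add(user)
    sims = {}
    for a, users_a in users_by_item.items():
        for b, users_b in users_by_item.items():
            if a != b:
                overlap = len(users_a & users_b)
                sims[(a, b)] = overlap / math.sqrt(len(users_a) * len(users_b))
    return sims


def recommend(user, data, sims, top_n=2):
    """Score unseen items by summing their similarity to items the user already read."""
    seen = data[user]
    scores = defaultdict(float)
    for (a, b), s in sims.items():
        if a in seen and b not in seen:
            scores[b] += s
    # Highest score first; ties broken alphabetically for a stable order.
    return sorted(scores, key=lambda item: (-scores[item], item))[:top_n]


sims = item_similarity(interactions)
print(recommend("ben", interactions, sims))  # ['email-tips', 'newsletter-growth']
```

"email-tips" ranks first for ben because both articles he read share a reader (ana) with it, while "newsletter-growth" overlaps with only one of them.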

Matrix factorization is often used in CF to map users and items into a latent space, predicting future interactions. In large-scale systems, CF acts as a quick filter, narrowing down billions of potential content options to a smaller, relevant set for each user.
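As a rough illustration of matrix factorization, the sketch below learns a small vector for each user and each item with stochastic gradient descent, so that their dot product approximates the observed engagement scores; unobserved pairs can then be scored the same way. The 4×4 ratings, the number of latent factors `k`, and the learning-rate and regularization values are all assumptions chosen for a toy example.

```python
import math
import random


def factorize(ratings, n_users, n_items, k=2, lr=0.05, reg=0.02, epochs=500, seed=42):
    """Learn k-dimensional user and item vectors whose dot products approximate ratings."""
    rng = random.Random(seed)
    P = [[rng.gauss(0, 0.1) for _ in range(k)] for _ in range(n_users)]  # user factors
    Q = [[rng.gauss(0, 0.1) for _ in range(k)] for _ in range(n_items)]  # item factors
    for _ in range(epochs):
        for u, i, r in ratings:
            err = r - predict(P, Q, u, i)
            for f in range(k):
                pu, qi = P[u][f], Q[i][f]
                # Stochastic gradient step with L2 regularization on both vectors.
                P[u][f] += lr * (err * qi - reg * pu)
                Q[i][f] += lr * (err * pu - reg * qi)
    return P, Q


def predict(P, Q, u, i):
    """Predicted affinity of user u for item i: dot product in the latent space."""
    return sum(p * q for p, q in zip(P[u], Q[i]))


# Hypothetical (user, item, engagement score 1-5) triples; unlisted pairs are unknown.
ratings = [(0, 0, 5), (0, 1, 4), (0, 3, 1), (1, 0, 4), (1, 2, 1), (1, 3, 1),
           (2, 1, 1), (2, 2, 5), (2, 3, 4), (3, 0, 1), (3, 2, 4), (3, 3, 5)]
P, Q = factorize(ratings, n_users=4, n_items=4)
rmse = math.sqrt(sum((r - predict(P, Q, u, i)) ** 2 for u, i, r in ratings) / len(ratings))
print(f"training RMSE: {rmse:.3f}")
print(f"predicted score, user 0 on unseen item 2: {predict(P, Q, 0, 2):.2f}")
```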

A great example comes from January 2026, when Alibaba Group introduced a Personalized Item-to-Item (PI2I) collaborative filtering framework in Taobao’s "Guess You Like" section. By employing a two-stage retrieval process and an interactive scoring model, the system improved online transaction rates by 1.05%.

For content creators, CF offers a move away from static audience segments toward dynamic, predictive recommendations. Start with a broad set of candidates during the initial retrieval phase, then refine them with more complex models during the ranking phase. Adding categorical data like topic tags or engagement history can also improve CF’s accuracy, particularly for new content that has little interaction history yet.

Journey Mapping with Decision Trees

Decision trees are a powerful way to map customer journeys by identifying critical decision points. They work by splitting data into branches based on user behaviors, such as whether someone watched a video for more than 30 seconds or clicked on a follow-up article. This creates a flowchart that visually represents user navigation paths.

What sets decision trees apart from clustering or CF is their interpretability: every path from the root to a leaf is an explicit chain of if/then rules showing which combinations of behaviors are associated with conversions. That makes them valuable for identifying which content types or engagement metrics matter most. A tree shows association rather than proof of cause, which is exactly why its clear, rule-based structure is so useful for guiding A/B tests and pinpointing where personalized interventions can have the most impact.
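The sketch below is a minimal, depth-limited classification tree that chooses each split by Gini impurity and then prints the result as readable rules. The session fields (`video_seconds`, `clicked_followup`) and the eight sample sessions are hypothetical; on real data, scikit-learn's `DecisionTreeClassifier` together with `sklearn.tree.export_text` produces similar human-readable output.

```python
def gini(labels):
    """Gini impurity of a list of 0/1 outcomes (0 means the group is pure)."""
    if not labels:
        return 0.0
    p = sum(labels) / len(labels)
    return 2 * p * (1 - p)


def best_split(rows, labels, features):
    """Return the (feature, threshold) that most reduces weighted Gini impurity, or None."""
    best, best_score = None, gini(labels)
    for f in features:
        for t in sorted({row[f] for row in rows}):
            left = [y for row, y in zip(rows, labels) if row[f] <= t]
            right = [y for row, y in zip(rows, labels) if row[f] > t]
            if not left or not right:
                continue
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
            if score < best_score:
                best, best_score = (f, t), score
    return best


def build_tree(rows, labels, features, depth=0, max_depth=2):
    """Recursively split sessions into branches until pure or max_depth is reached."""
    split = best_split(rows, labels, features) if depth < max_depth else None
    if split is None:
        # Leaf: the share of sessions in this branch that converted.
        return {"predict": round(sum(labels) / len(labels), 2)}
    f, t = split
    yes = [(row, y) for row, y in zip(rows, labels) if row[f] <= t]
    no = [(row, y) for row, y in zip(rows, labels) if row[f] > t]
    return {
        "rule": f"{f} <= {t}",
        "yes": build_tree([r for r, _ in yes], [y for _, y in yes], features, depth + 1, max_depth),
        "no": build_tree([r for r, _ in no], [y for _, y in no], features, depth + 1, max_depth),
    }


def print_tree(node, indent=""):
    """Print the tree as nested if/else rules."""
    if "predict" in node:
        print(f"{indent}-> conversion rate {node['predict']}")
        return
    print(f"{indent}if {node['rule']}:")
    print_tree(node["yes"], indent + "  ")
    print(f"{indent}else:")
    print_tree(node["no"], indent + "  ")


# Hypothetical sessions and whether each one converted (1) or not (0).
sessions = [
    {"video_seconds": 10, "clicked_followup": 0}, {"video_seconds": 15, "clicked_followup": 1},
    {"video_seconds": 20, "clicked_followup": 0}, {"video_seconds": 45, "clicked_followup": 0},
    {"video_seconds": 50, "clicked_followup": 1}, {"video_seconds": 60, "clicked_followup": 1},
    {"video_seconds": 90, "clicked_followup": 0}, {"video_seconds": 35, "clicked_followup": 1},
]
converted = [0, 0, 0, 0, 1, 1, 0, 1]
tree = build_tree(sessions, converted, ["video_seconds", "clicked_followup"])
print_tree(tree)
```

On this toy data the root rule is whether the visitor clicked the follow-up article, and the learned video threshold (15 seconds) simply reflects the eight sample sessions; with real traffic you would cap depth and require a minimum number of sessions per leaf to avoid overfitting.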

Data Preparation for AI-Driven Audience Insights

AI can only deliver accurate insights if the data feeding it is clean and well-structured. It's no surprise that data scientists spend 50–80% of their time on preparation and cleaning. Without this foundation, AI projects often fail - 70% of them, in fact, due to poor data quality. The process of transforming raw data into actionable insights is what separates success from wasted effort. It all hinges on how you collect, clean, and safeguard your audience data.

Data Collection Best Practices

Third-party cookies have steadily lost reach (Safari and Firefox block third-party tracking cookies by default), and the digital marketing world has shifted accordingly. Today, 78% of marketers focus on first-party data - information collected directly from sources like websites, email lists, and customer interactions. Zero-party data, such as survey responses or user preferences, is just as valuable because it reflects what users explicitly share rather than inferred behavior.

Start by defining your data needs. For example, if you're segmenting email subscribers with K-Means clustering, you'll need metrics like open rates, click-through rates, and purchase history. Building a recommendation system? Then focus on interaction data, such as content consumed, time spent, and skipped sections.

Set up audience segments in tools like GA4 as early as possible. These platforms typically don’t backfill historical data, so starting early ensures you're capturing the right information moving forward. A layered data architecture works best. Use tools like Segment for data collection, Snowflake for storage, and dbt for cleaning and modeling. This setup prevents issues like fragmented or inconsistent data.

Diversify your methods by combining structured data from API calls (e.g., social media metrics or email stats) with internal databases like CRM records. For scenarios where real-world data is unavailable - such as rare user behaviors - synthetic data generated through simulations can fill those gaps.

Once you've gathered your data, focus on standardizing and consolidating it to ensure its quality.

Cleaning and Organizing Data

Raw data is messy. In fact, 63% of analytics projects fail due to poor data quality - things like duplicate records or inconsistent formats. Cleaning this data involves standardizing, deduplicating, and validating it.

Start with clear naming conventions and merge similar events. For instance, unify terms like "played song" and "song played" to avoid confusion. Rename technical codes (e.g., catSelectClick) into human-readable labels like "Category Selected" so AI systems can better interpret their meaning.
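A small alias table is one way to apply those naming rules automatically. In this sketch the alias entries and canonical labels are hypothetical, built from the example event names above; names that aren't in the table pass through unchanged so you can spot and add them.

```python
import re

# Hypothetical mapping from raw tracking names (lowercased) to one readable label.
EVENT_ALIASES = {
    "played song": "Song Played",
    "song played": "Song Played",
    "catselectclick": "Category Selected",
}


def normalize_event(raw):
    """Collapse case and whitespace variants, then map to a canonical label if known."""
    key = re.sub(r"\s+", " ", raw.strip().lower())
    return EVENT_ALIASES.get(key, raw.strip())


events = ["played song", "Song  Played", "catSelectClick", "checkout"]
print([normalize_event(e) for e in events])
# ['Song Played', 'Song Played', 'Category Selected', 'checkout']
```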

Handle missing data carefully. Techniques like mean or median imputation can fill gaps, while flagging or removing rows with excessive missing values protects accuracy. For dates, stick to one consistent format, such as MM/DD/YYYY. For money, store amounts as plain numbers in a single currency (for example, U.S. dollars) instead of mixing currency symbols and formats.
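Here is a minimal sketch of those steps using only the Python standard library: drop rows that are mostly empty, fill remaining numeric gaps with the column median, and rewrite dates as MM/DD/YYYY. The field names, the 50% sparsity cutoff, and the list of accepted input date formats are assumptions for illustration.

```python
from datetime import datetime
from statistics import median


def too_sparse(row, max_missing=0.5):
    """Flag rows where more than max_missing of the fields are empty."""
    missing = sum(value is None for value in row.values())
    return missing / len(row) > max_missing


def impute_median(rows, field):
    """Fill missing values in `field` with the median of the observed values."""
    fill = median(r[field] for r in rows if r[field] is not None)
    for r in rows:
        if r[field] is None:
            r[field] = fill
    return rows


def to_mmddyyyy(value, formats=("%Y-%m-%d", "%d %b %Y", "%m/%d/%Y")):
    """Re-emit a date written in one of the known formats as MM/DD/YYYY."""
    for fmt in formats:
        try:
            return datetime.strptime(value, fmt).strftime("%m/%d/%Y")
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {value!r}")


rows = [
    {"sessions": 4, "spend": 120.0, "signup": "2025-03-07"},
    {"sessions": None, "spend": 80.0, "signup": "07 Mar 2025"},
    {"sessions": 10, "spend": None, "signup": "03/09/2025"},
    {"sessions": None, "spend": None, "signup": None},
]
kept = [r for r in rows if not too_sparse(r)]  # drops the mostly empty last row
for field in ("sessions", "spend"):
    impute_median(kept, field)
for r in kept:
    r["signup"] = to_mmddyyyy(r["signup"])
print(kept)
```

Filtering sparse rows before imputing keeps near-empty records from being "repaired" with invented values. Note that a string like "03/09/2025" is ambiguous in general; this sketch assumes U.S. month-first order for that format.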

"The first step to training a neural net is to not touch any neural net code at all and instead begin by thoroughly inspecting your data. This advice gets ignored constantly."

- Andrej Karpathy, former Director of AI at Tesla

If you're preparing text for AI tools, such as AI blog content generators, convert raw HTML into Markdown. This can reduce token counts by 50–60% while preserving structure like headers and lists. Use lookup tables to map coded values (e.g., sku_29881) to clear labels like "Women's Running Shoe" for better context.

Automate validation to catch errors early. For example, flag negative numbers in price fields, identify outliers, and regularly audit your dataset. Poor data quality costs the U.S. economy an estimated $3.1 trillion annually.
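A validation pass like the one described can be sketched in a few lines. This version flags negative prices outright and marks other values as outliers using Tukey's fences (more than 1.5 × the interquartile range beyond the quartiles), computed on the non-negative values so that bad entries don't skew the quartiles. The price list is hypothetical, and the 1.5 multiplier is a common convention rather than a rule.

```python
from statistics import quantiles


def validate_prices(prices):
    """Return (index, value, reason) for negative prices and IQR-based outliers."""
    issues = [(i, p, "negative") for i, p in enumerate(prices) if p < 0]
    valid = [p for p in prices if p >= 0]
    # Quartiles of the non-negative values; points beyond 1.5 * IQR are flagged.
    q1, _, q3 = quantiles(valid, n=4)
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    issues += [(i, p, "outlier") for i, p in enumerate(prices)
               if p >= 0 and not low <= p <= high]
    return issues


prices = [19.99, 24.5, 22.0, -5.0, 21.0, 23.5, 480.0, 20.0]
print(validate_prices(prices))  # [(3, -5.0, 'negative'), (6, 480.0, 'outlier')]
```

An outlier isn't necessarily an error (a $480 bundle may be real), so routing flagged rows to review is usually safer than deleting them automatically.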

Data Privacy and Compliance Requirements

As of January 1, 2026, 20 U.S. states have enacted privacy laws, covering about 43% of the population. States like Indiana, Kentucky, and Rhode Island joined the list that day, while California's Delete Act will soon require data brokers to honor deletion requests through a centralized system starting in August 2026.

Unlike the EU's opt-in system, most U.S. states use an opt-out model, where tracking is allowed unless users explicitly opt out. Some states now mandate honoring Global Privacy Control (GPC) signals - browser settings that automatically opt users out of data selling or sharing.
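The Global Privacy Control specification has the browser send a `Sec-GPC: 1` request header (and exposes `navigator.globalPrivacyControl` to page scripts). A minimal server-side sketch of honoring it might look like this. The destination list and its `sells_or_shares` flag are hypothetical; deciding which destinations count as selling or sharing is a policy input, not something the code determines.

```python
def gpc_opt_out(headers):
    """True if the request carries a Global Privacy Control signal (Sec-GPC: 1)."""
    # HTTP header names are case-insensitive, so normalize them before lookup.
    normalized = {name.lower(): value.strip() for name, value in headers.items()}
    return normalized.get("sec-gpc") == "1"


def allowed_destinations(headers, destinations):
    """Drop destinations flagged as selling or sharing data when the visitor sent GPC."""
    if gpc_opt_out(headers):
        return [d["name"] for d in destinations if not d["sells_or_shares"]]
    return [d["name"] for d in destinations]


# Hypothetical downstream destinations for an analytics event.
DESTINATIONS = [
    {"name": "first_party_warehouse", "sells_or_shares": False},
    {"name": "ad_network_pixel", "sells_or_shares": True},
]

print(allowed_destinations({"Sec-GPC": "1"}, DESTINATIONS))  # ['first_party_warehouse']
print(allowed_destinations({}, DESTINATIONS))  # ['first_party_warehouse', 'ad_network_pixel']
```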

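Sensitive Personal Information (SPI), such as precise geolocation, race, religion, health data, or sexual orientation, requires extra care. California's CPRA gives consumers the right to limit how businesses use it, and several other state laws, including those in Virginia and Colorado, require opt-in consent before it is processed. Audit your AI training sets to confirm that any SPI they contain was collected in compliance.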

Deploy a Consent Management Platform to block non-essential tracking scripts until users give permission. For analytics, consider cookieless platforms like Plausible or Fathom Analytics.

"The hype around AI and large language models has driven companies to think more carefully about control and ownership of their data. First-party data has become the strategic asset that powers competitive advantage in an AI-driven marketing landscape."

- Andrew Frank, Analyst, Gartner

Server-side tracking can help maintain data quality, and because events pass through your own server first, it lets you apply users' privacy preferences before any data reaches third-party platforms. Additionally, document a specific retention period for each category of personal information: broad terms like "as long as necessary" no longer meet compliance standards under CPRA. Non-compliance can be costly. In 2025, California's administrative fines reached $2,663 per violation and $7,988 per intentional violation.
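A documented retention schedule is easier to enforce when it lives in code as well as in your privacy policy. In this sketch the categories and day limits are hypothetical placeholders, not recommended values; the function returns records held past their category's limit and raises an error for any category with no documented period, since an undocumented category is itself a gap in the schedule.

```python
from datetime import date, timedelta

# Hypothetical retention schedule: data category -> maximum age in days.
RETENTION_DAYS = {
    "web_analytics": 395,
    "email_engagement": 730,
    "support_tickets": 1095,
}


def expired_records(records, today):
    """Return records held longer than their category's documented retention period."""
    expired = []
    for rec in records:
        limit = RETENTION_DAYS.get(rec["category"])
        if limit is None:
            # No documented period means the schedule itself is incomplete.
            raise KeyError(f"No retention period documented for {rec['category']!r}")
        if today - rec["collected_on"] > timedelta(days=limit):
            expired.append(rec)
    return expired


records = [
    {"id": 1, "category": "web_analytics", "collected_on": date(2024, 6, 1)},
    {"id": 2, "category": "email_engagement", "collected_on": date(2025, 3, 1)},
]
print([r["id"] for r in expired_records(records, today=date(2026, 1, 1))])  # [1]
```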

These steps are essential for maintaining privacy and ensuring your data is ready for AI-driven insights.

Top AI Tools for Audience Behavior Analysis

Once your data is organized and ready, the AI Blog Generator Directory can help you turn that raw information into meaningful audience insights. This platform goes beyond simple blog creation by combining audience analytics with AI-driven tools to refine behavioral analysis and enhance content strategies.

AI Blog Generator Directory

The AI Blog Generator Directory (https://aibloggenerators.com) serves as a one-stop hub for leveraging AI in content creation and audience analysis. Its standout features include automated keyword research, integrated analytics, and multilingual support, which are all crucial for pinpointing topics that resonate with your audience and understanding how they interact with your content.

The platform also supports CMS integration, allowing you to seamlessly incorporate audience insights into your content creation workflow. If you need to present your findings visually, the directory provides tools for crafting presentations and generating text-to-image content. Additionally, its multilingual capabilities make it easier to analyze and optimize content for audiences across various regions and demographics.

Up next, explore how to weave these AI-powered insights into your content strategy to create a bigger impact.

Implementing AI Insights into Content Strategies

Creating Targeted Content with AI Insights

Turning audience behavior data into meaningful content is no small task. AI simplifies it with automated, dynamic segmentation that updates in real time and goes beyond basic demographics like age or location. Instead, it lets you group audiences by factors such as how often they make purchases, how they engage with your content, or their current browsing habits.

Tools powered by Natural Language Generation (NLG) take personalization to the next level. They can adjust tone, length, and structure for each audience segment while staying true to your brand’s voice. For instance, Narrato implemented an AI writing assistant with customizable templates, transforming customer notes into polished, brand-aligned case studies. The result? An 85% reduction in content creation time and a threefold increase in output. It’s no wonder that 77% of marketers say generative AI enhances personalization, and 96% report that personalization drives repeat purchases.

Another layer of personalization comes from zero-party data, such as information collected through quizzes, polls, or preference centers. This privacy-conscious approach can improve personalization effectiveness by 40%. Progressive profiling - gathering small bits of data over time, like an email address first, followed by interests or company size - helps build detailed audience profiles while minimizing friction.
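Progressive profiling mostly comes down to asking for one missing piece at a time. This sketch assumes a hypothetical, fixed order of profile fields and returns the next one to request, so each form or quiz only asks a single new question.

```python
# Hypothetical order in which profile fields are requested, one per interaction.
PROFILE_STEPS = ["email", "interests", "company_size", "role"]


def next_question(profile):
    """Return the next missing profile field, or None once the profile is complete."""
    for field in PROFILE_STEPS:
        if not profile.get(field):
            return field
    return None


profile = {}
profile["email"] = "reader@example.com"  # first visit: newsletter sign-up
print(next_question(profile))  # interests
profile["interests"] = ["seo", "email marketing"]
print(next_question(profile))  # company_size
```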

Once your content is personalized, dynamic testing can help refine these strategies even further.

A/B Testing Audience Strategies

Traditional A/B testing often feels static, comparing just two variations. AI takes it up a notch with dynamic, multi-variable testing that adapts in real time. Multi-Armed Bandit (MAB) algorithms, for example, automatically shift more traffic toward better-performing variations as the data rolls in. This approach has delivered impressive results, such as a 340% increase in click-through rates and a 275% boost in email revenue.
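Thompson sampling is one widely used multi-armed bandit algorithm. The sketch below keeps a Beta posterior over each variant's conversion rate, samples from every posterior on each impression, and serves the variant with the highest draw, which gradually shifts traffic toward the best performer. The variant names and "true" click-through rates exist only to drive the simulation; a real system would learn from live outcomes.

```python
import random


class ThompsonSampler:
    """Beta-Bernoulli Thompson sampling over content variants."""

    def __init__(self, variants, seed=None):
        self.rng = random.Random(seed)
        # Beta(1, 1) prior per variant, stored as [alpha, beta] = [conversions + 1, misses + 1].
        self.stats = {v: [1, 1] for v in variants}

    def choose(self):
        """Sample a plausible conversion rate for each variant and serve the best draw."""
        draws = {v: self.rng.betavariate(a, b) for v, (a, b) in self.stats.items()}
        return max(draws, key=draws.get)

    def update(self, variant, converted):
        """Record one impression's outcome."""
        self.stats[variant][0 if converted else 1] += 1


# Simulation with hypothetical true click-through rates the algorithm does not see.
true_ctr = {"A": 0.04, "B": 0.06, "C": 0.10}
world = random.Random(7)
bandit = ThompsonSampler(true_ctr, seed=1)
served = {v: 0 for v in true_ctr}
for _ in range(10_000):
    v = bandit.choose()
    served[v] += 1
    bandit.update(v, world.random() < true_ctr[v])
print(served)  # most traffic should drift toward "C"
```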

"Since we build rapid prototypes quite often, using AI has helped us code A/B tests faster and without bugs."

- Jon MacDonald, CEO of The Good

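For reliable results, run your tests for at least seven days to capture day-of-week swings in audience behavior. As a starting point, aim for 1,000 to 2,000 impressions per variation (the sample you actually need depends on your baseline conversion rate and the size of the lift you want to detect), and treat a difference as significant only when the p-value falls below 0.05, which corresponds to 95% confidence. To replicate success, document the specific AI prompts, models, and parameters that led to favorable outcomes.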
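For a classic fixed-split A/B test on conversion rates, a two-proportion z-test is one standard way to get that p-value. This sketch uses the pooled-variance form and computes the two-sided p-value with `math.erfc`; the conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided, pooled two-proportion z-test; returns (z, p_value)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided tail probability of the standard normal: erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: 2,000 impressions per variation.
z, p = two_proportion_z_test(conv_a=100, n_a=2000, conv_b=140, n_b=2000)
print(f"z = {z:.2f}, p = {p:.4f}, significant: {p < 0.05}")
```

This test assumes traffic was split at fixed proportions for the whole run. Bandit algorithms reallocate traffic as results come in, so p-values computed naively from bandit-served data are not directly comparable.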

Once your testing framework is solid, the next step is measuring how these strategies impact revenue and engagement.

Measuring Success and ROI

To gauge the effectiveness of AI-driven strategies, focus on engagement quality metrics like average view duration, scroll depth, and repeat plays - these help reveal how well your content connects with your audience. Financial metrics, such as Customer Lifetime Value (CLV) and Customer Acquisition Cost (CAC), provide a clearer picture of the overall impact. Among companies using generative AI in marketing, 66% reported revenue growth within 12 months, with 8% seeing gains of more than 10%.
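For a rough sense of how CLV and CAC fit together, here is one simple, undiscounted CLV formula (average order value × orders per year × gross margin × expected lifespan in years) alongside the CLV:CAC ratio. The formula is a common simplification rather than a standard definition, and every input below is hypothetical.

```python
def simple_clv(avg_order_value, orders_per_year, gross_margin, lifespan_years):
    """Undiscounted lifetime value: yearly gross profit per customer times expected lifespan."""
    return avg_order_value * orders_per_year * gross_margin * lifespan_years


def clv_to_cac(clv, marketing_spend, new_customers):
    """Lifetime value divided by customer acquisition cost (spend / customers acquired)."""
    cac = marketing_spend / new_customers
    return clv / cac


clv = simple_clv(avg_order_value=60, orders_per_year=4, gross_margin=0.5, lifespan_years=3)
print(clv)  # 360.0
print(clv_to_cac(clv, marketing_spend=12_000, new_customers=100))  # 3.0
```

Computing the ratio per audience segment, rather than for the whole customer base, shows which AI-targeted segments actually pay back their acquisition cost.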

Take Juniper Networks, for example: Jean English used AI-powered strategies there to generate five times more meetings. Similarly, AvDerm AI achieved a 65% increase in referral traffic by integrating CRM data for full-funnel tracking.

Set specific goals - like increasing email-driven revenue or boosting newsletter sign-ups from targeted segments. If a piece of content has high time-on-page but low conversions, consider repositioning your call-to-action or simplifying forms to reduce friction. Analyze content that maintains steady traffic over 6 to 12 months and think about repurposing it into fresh formats.

Conclusion

Key Takeaways

This guide has explored how AI techniques and data strategies are reshaping the way content creators understand and engage with their audiences. Instead of relying on guesswork or simple demographic data, these modern tools allow you to predict what your audience wants and strategically plan for their future behaviors.

The results speak for themselves: companies using AI-driven personalization see a 5–8× return on marketing spend, while brands that integrate audience analytics into their campaigns achieve 3.5× higher ROI. In a world where personalized interactions are no longer optional, leveraging AI insights is essential. Failing to do so can mean missed opportunities and lower returns. These numbers highlight the importance of the data-driven strategies discussed throughout this guide and reinforce AI's pivotal role in today’s content strategies.

Start by focusing on one high-impact data source - like customer reviews or social media comments - to uncover the motivations behind audience behavior. Use tools, such as those from the AI Blog Generator Directory, to handle repetitive tasks like keyword research and content optimization. This will free up your time to focus on creative and strategic initiatives. Another effective tactic is progressive profiling, which collects audience data incrementally and can improve personalization efforts by 40%. These approaches allow you to fine-tune your content with greater accuracy.

"AI is not just a tool - it's your partner in creating content that connects, engages, and inspires." - QuickCreator

FAQs

What data do I need to start AI audience behavior analysis?

To kick off AI-driven audience behavior analysis, start by gathering key data. This includes demographics like age, gender, and location, as well as behavioral patterns such as page views, time spent engaging with content, and click paths. Add to this psychographics, which cover interests, preferences, and sentiment.

Make sure to track real-time engagement metrics, content performance, and user journey details. Prioritize first-party data to stay privacy-compliant while focusing on behavioral signals. AI tools can analyze unstructured language and emotional cues to uncover deeper insights into what drives your audience, what challenges they face, and what they intend to do next. This approach helps you understand motivations, frustrations, and intent more effectively.

How do I choose between clustering, collaborative filtering, and decision trees?

When deciding which method to use, it all comes down to your goals and the data you have.

- Clustering helps group audiences into segments, making it easier to develop targeted strategies.

- Collaborative filtering focuses on recommending personalized content or products by analyzing user behavior.

- Decision trees are great for classifying or predicting audience attributes, helping you identify key factors.

In short, use clustering for audience segmentation, collaborative filtering for personalized recommendations, and decision trees for classification or prediction tasks.

How can I use AI insights while staying compliant with U.S. privacy laws?

To align with U.S. privacy laws while leveraging AI insights, focus on using consented, first-party data and implement privacy-safe techniques like server-side tagging and hashed identifiers. It's also crucial to prioritize transparency and adhere to disclosure guidelines set by agencies like the FTC and FCC. By following these steps, you can stay compliant while safeguarding user privacy.
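One common pattern for hashed identifiers is to normalize an email address and hash it with SHA-256 before it leaves your systems. This sketch assumes trimming and lowercasing are the only normalization needed; ad and analytics platforms publish their own normalization rules, so check those before matching. A hashed email is pseudonymous rather than anonymous, and the FTC has stated that hashing alone does not make data anonymous.

```python
import hashlib


def hash_email(email):
    """Trim and lowercase an email, then return its SHA-256 hex digest."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


# Different spellings of the same address map to the same identifier.
print(hash_email("  Jane.Doe@Example.com ") == hash_email("jane.doe@example.com"))  # True
```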