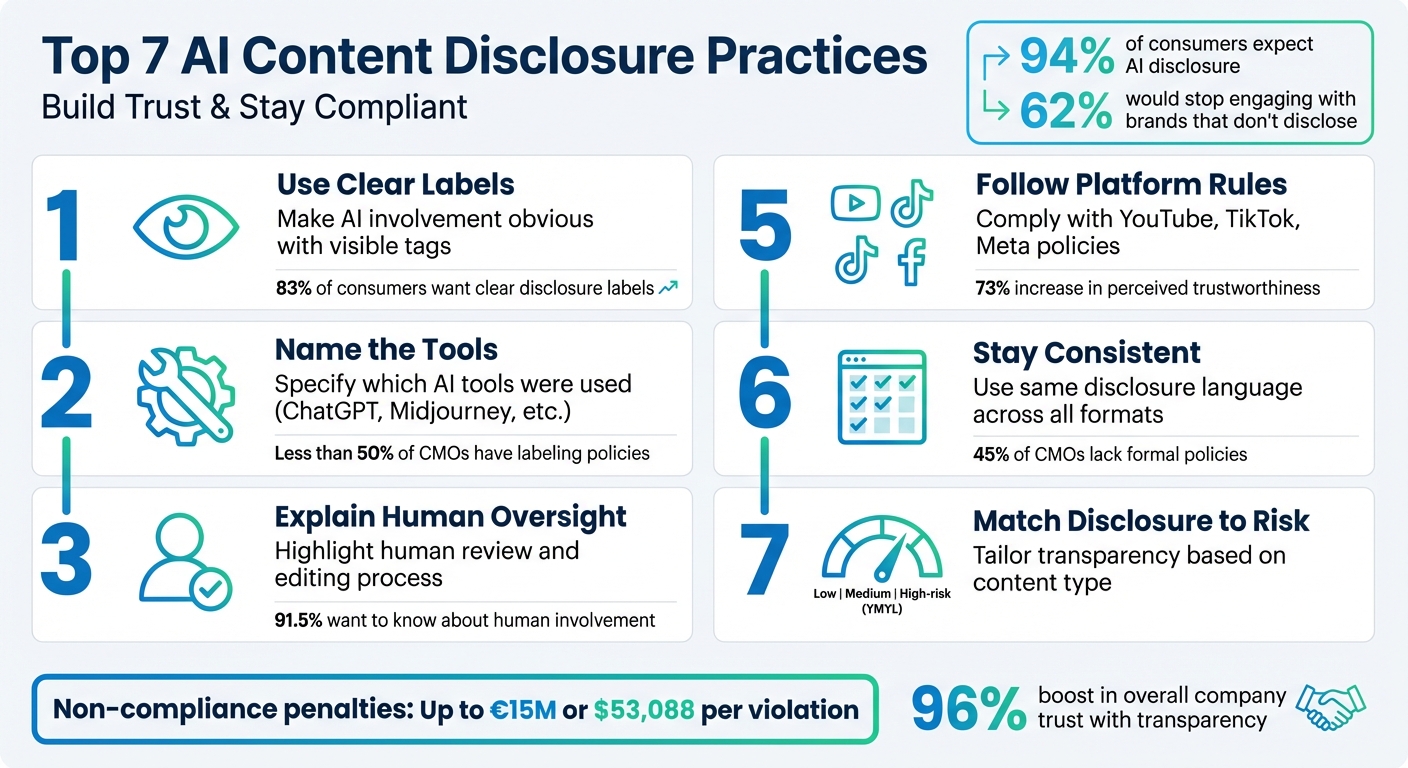

AI content disclosure is no longer optional - it’s a necessity. With regulations tightening and consumer trust on the line, clear labeling of AI-generated content is critical. Here's what you need to know:

- Use Clear Labels: Make AI involvement obvious with visible, straightforward tags.

- Name the Tools: Specify which AI tools were used (e.g., ChatGPT, Midjourney).

- Explain Human Oversight: Highlight how humans reviewed and edited the content.

- Add Metadata: Embed machine-readable tags to maintain transparency across platforms.

- Follow Platform Rules: Comply with disclosure policies on platforms like YouTube, TikTok, and Meta.

- Stay Consistent: Use the same disclosure language across all formats.

- Match Disclosure to Risk: Tailor transparency based on content type and potential impact.

Why it matters: Non-compliance can be costly. The EU AI Act allows fines of up to €15 million or 3% of global turnover, and the FTC can levy penalties of up to $53,088 per violation. Beyond legal risks, 62% of Europeans say they'd unfollow a brand that fails to disclose AI use. These practices help you stay compliant, build trust, and meet audience expectations.

7 Essential AI Content Disclosure Practices for Compliance and Trust



1. Use Clear, Visible Labels

When it comes to AI disclosure, the golden rule is to keep it obvious. Transparency matters - a whopping 83% of consumers believe AI-generated content should include clear disclosure labels. Trying to bury this information in footers or fine print? That’s a fast track to losing trust.

Your labels should clearly state AI's role in the content creation process. For example, you could use phrases like "AI-assisted research synthesis," "Image generated by AI," or "Initial draft by AI; human-edited for accuracy". These descriptions leave no room for doubt.

Placement is just as important as clarity. Make sure labels are visible - include them inline with text or as badges on media. Avoid relying on footer-only disclosures; they’re easy to miss and often dismissed as unimportant. As Dipti Padalkar, an AI SEO Specialist, explains:

"Disclosure is a trust signal, not a ranking requirement".

Failing to meet transparency standards can have real consequences. Under the EU AI Act, starting August 2, 2026, companies could face penalties of up to €15 million or 3% of their global turnover for non-compliance. On the flip side, embracing transparency can pay off. AI advertising with clear disclosures has been shown to boost perceived trustworthiness by 73% and overall company trust by 96%.

Finally, always ensure a human byline accompanies the content. Avoid attributing authorship to "AI" - a human should always be accountable for the accuracy and quality of the content.

2. Name the AI Tools You Used

Using generic phrases like "AI-generated content" is no longer enough. To establish trust, it's important to name the specific tools you used, a practice often referred to as "contextual transparency." This approach gives your audience a clear picture of how AI factored into the creation process. The expectation is already there: 94% of consumers expect businesses to disclose their use of AI technology, yet fewer than half of CMOs using generative AI have clear labeling policies in place. Being specific about whether you used ChatGPT for research, Midjourney for creating images, or a specialized tool for outlining shows intentional oversight and builds trust.

Organizations like Science and Elsevier now require authors to disclose the exact AI tools and their version numbers in their methodology or acknowledgments sections. This level of detail matters because AI outputs can differ significantly between versions. For example, GPT-4 produces different results compared to GPT-3.5. Science Journals’ editorial policies emphasize this by stating:

"The full prompt used in the production of the work, as well as the AI tool and its version, should be disclosed."

Once you've identified the tools, it's equally important to credit them properly. Resources like the AI Blog Generator Directory can guide you in recognizing and crediting the tools used in your workflow. Instead of vague wording, opt for clear statements like "Generated by [Tool Name] with human review" or "AI tools assisted with research synthesis and outlining".
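As a minimal sketch of how such a statement could be assembled the same way every time, the hypothetical helper below builds a disclosure line from a tool name, an optional version, and the role the tool played. The `ToolUse` structure, the `disclosure_line` function, and the wording template are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToolUse:
    """One AI tool used in a piece of content (hypothetical structure)."""
    name: str                      # e.g. "ChatGPT"
    role: str                      # e.g. "research synthesis"
    version: Optional[str] = None  # e.g. "GPT-4", if known


def disclosure_line(tools: list[ToolUse], human_reviewed: bool) -> str:
    """Build a single human-readable disclosure sentence."""
    if not tools:
        raise ValueError("at least one tool is required for a disclosure")
    parts = []
    for tool in tools:
        # Include the version only when it was recorded.
        label = f"{tool.name} ({tool.version})" if tool.version else tool.name
        parts.append(f"{label} for {tool.role}")
    sentence = "AI tools used: " + "; ".join(parts) + "."
    if human_reviewed:
        sentence += " Reviewed and edited by a human editor."
    return sentence


print(disclosure_line(
    [ToolUse("ChatGPT", "research synthesis", "GPT-4"),
     ToolUse("Midjourney", "image generation")],
    human_reviewed=True,
))
```

Generating the sentence from structured fields, rather than typing it by hand, makes it harder to forget the version number or the human-review note.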

Transparency is not just good practice - it’s becoming a legal requirement. Under the EU AI Act, failing to disclose AI use could result in fines of up to €15 million or 3% of global turnover. Beyond legal risks, transparency helps maintain consumer trust. For example, 62% of Europeans say they would unfollow a brand that fails to disclose its AI usage. By naming the tools you use, you not only ensure compliance but also strengthen your relationship with your audience.

3. Explain Human Review and Editing

Naming the AI tools you used is only part of the equation. Your audience also wants clarity on what role humans played in reviewing, editing, and approving the content. According to research, 91.5% of news consumers believe it's crucial to know if and how humans were involved in content review before publication. Even more compelling, 94.2% of people want to understand the steps journalists take to uphold ethics and accuracy when working with AI.

Simply disclosing AI usage without context can lead to skepticism. A study conducted between 2024 and 2025 by Dr. Benjamin Toff and the Trusting News organization highlights this. Researchers collaborated with 10 newsrooms, including Swiss Info, USA TODAY, and inewsource, to test how audiences react to AI disclosures. They found that 42% of readers initially distrusted stories labeled as AI-generated. However, detailed explanations of human oversight, such as verification processes, significantly reduced this distrust. For instance, USA TODAY added an "i" icon to its "Key Points" summaries. When clicked, it revealed a clear explanation of the human-led verification process, helping to restore audience trust.

Vague statements about human involvement don’t cut it. Be explicit. Use language like: "AI tools assisted with drafting and structuring; all claims were reviewed and edited by a human editor for accuracy". This reassures readers that a human has validated the information. As Dipti Padalkar, creator of Trusted AI SEO, explains:

"The goal isn't to prove you used AI responsibly. It's to make readers feel confident that someone responsible is still in charge".

Tailor the level of disclosure to the content's risk level. For high-stakes topics - such as health, finance, or legal advice - offer a detailed breakdown of how humans verified facts, checked sources, and ensured ethical standards. For lower-risk content, a simpler note acknowledging human review may suffice.

To determine which AI tools require more extensive human oversight, resources like the AI Blog Generator Directory can be helpful. Such directories provide insights into the reliability of various AI outputs.

Finally, keep attribution focused on human authors. Avoid labeling any part of the content as "AI-created", as this can erode trust and shift accountability away from humans. Instead, include a disclosure note near the content, clearly outlining the AI's role and emphasizing human oversight.

4. Add Machine-Readable Metadata

Clear visual labels are a great start, but adding machine-readable metadata takes transparency to another level. This type of metadata ensures that automated systems can identify AI-generated content, creating a dual layer of disclosure for both human viewers and digital tools. Unlike visual labels, which can be altered or removed when content is shared, metadata stays embedded in the file itself. This makes it a reliable way to maintain disclosure across platforms and devices. Together, visual labels and metadata form a powerful combination for ensuring transparency.

The Coalition for Content Provenance and Authenticity (C2PA) has set the gold standard for managing high-stakes content. Major tech companies rely on C2PA’s digitally signed credentials, alongside the AI-Disclosure HTTP header, to embed and share details about AI involvement. The credentials provide a signed, tamper-evident record of how content was created and modified. The header uses terms like ai-originated, ai-modified, or machine-generated to give crawlers and bots clear information about AI usage, which helps disclosed content maintain visibility. These tools not only protect content integrity but also deliver measurable outcomes.
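To make the header idea concrete, here is a small sketch that builds an AI-Disclosure header pair from one of the terms mentioned above. The three mode names come from the text; the `mode=` value syntax and the `ai_disclosure_header` function are assumptions for illustration and should be checked against the current header specification before use.

```python
# Disclosure terms taken from the text above. The "mode=" syntax is an
# assumption for illustration, not a confirmed part of the specification.
ALLOWED_MODES = {"ai-originated", "ai-modified", "machine-generated"}


def ai_disclosure_header(mode: str) -> tuple[str, str]:
    """Return a (header name, header value) pair for an HTTP response."""
    if mode not in ALLOWED_MODES:
        raise ValueError(f"unknown disclosure mode: {mode!r}")
    return ("AI-Disclosure", f"mode={mode}")


name, value = ai_disclosure_header("ai-modified")
print(f"{name}: {value}")
```

Rejecting unknown modes up front keeps a typo from silently publishing a header that crawlers cannot interpret.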

A Google update in March 2024 reduced low-quality AI content by about 45%. At the same time, structured metadata increased the likelihood of AI content being cited by 40%. Additionally, visitors referred by AI platforms like ChatGPT showed a conversion rate of 11.4%, compared to just 5.3% from traditional organic search.

For images and videos, consider applying IPTC Photo Metadata standards by setting the "Digital Source Type" field to "Trained Algorithmic Media" or "Composite Synthetic". Research from Mozilla highlights the effectiveness of machine-readable methods, giving them a "Fair" rating, which outperformed human-facing visual labels rated as "Low". However, as Ramak Molavi Vasse’i, Mozilla’s Research Lead for AI Transparency, notes:

"Current watermarking and labeling technologies show promise and ingenuity, particularly when used together. Still, they're not enough to effectively counter the dangers of undisclosed synthetic content".

One critical step is ensuring your content management system retains metadata during distribution. Many platforms automatically remove C2PA manifests or IPTC data when files are uploaded or resized. To maintain transparency, make sure your publishing workflow preserves metadata from creation through to final distribution.
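One way to catch stripped metadata is to compare a file's fields before and after each processing step. The sketch below assumes the metadata has already been read into plain dictionaries by whatever tool your workflow uses; the field names and the `missing_disclosure_fields` helper are hypothetical.

```python
def missing_disclosure_fields(before: dict, after: dict, required: set[str]) -> set[str]:
    """Return required fields that existed before processing but were lost or changed."""
    lost = set()
    for field in required:
        if field in before and after.get(field) != before[field]:
            lost.add(field)
    return lost


# Hypothetical metadata read from an image before and after a resize step.
original = {"DigitalSourceType": "Trained Algorithmic Media", "Creator": "Jane Doe", "Width": 4000}
resized = {"Creator": "Jane Doe", "Width": 1200}

print(missing_disclosure_fields(original, resized, {"DigitalSourceType"}))
# prints {'DigitalSourceType'}
```

A check like this can run as a publishing gate, so an asset whose disclosure field disappeared never reaches distribution unnoticed.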

5. Follow Each Platform's Rules

While internal policies are essential for transparency, aligning with each platform's specific rules is just as important. Ignoring these guidelines can expose your content to compliance risks. For instance, Meta (Facebook/Instagram) requires disclosure for political, electoral, or social issue ads featuring realistic AI-generated individuals or scenarios. Since February 2025, Meta has also automatically tagged media created with its generative AI tools with an "AI info" label. On YouTube, creators must check the "Altered or synthetic content" box in Creator Studio for media that could be mistaken for real-life people, places, or events. TikTok enforces disclosure for "significantly" AI-modified or generated content, requiring users to either apply the AIGC (AI-Generated Content) label or include visible watermarks.

Each platform enforces these rules differently. For example, YouTube provides a 7-day warning before suspending creators from its Partner Program for repeated disclosure failures. Medium limits undisclosed AI content to "Network Only" distribution, which excludes it from broader discovery and paywall participation. Vimeo mandates disclosure for realistic videos depicting people saying or doing things they didn’t actually do; violations can result in forced labeling or even account termination. Following these rules not only ensures compliance but also builds trust with your audience.

Data supports the importance of transparency. Proper disclosures can increase perceived trust by 73% and overall company trust by 96%. Elizabeth Herbst-Brady, Chief Revenue Officer at Yahoo, highlighted this point:

"Transparency will be vital for brands to maintain long-term consumer relationships and generate positive brand equity".

To maintain consistency, use native platform labeling tools during uploads to reduce manual errors. For platforms without automatic labeling, add clear on-screen disclaimers, such as "Created with AI", that remain visible throughout the video. Only label content when required - there’s no need to tag animations or beauty filters that are typically exempt. Lastly, maintain a log of the AI tools used for each project. This documentation can be invaluable if regulatory audits arise from the FTC or EU authorities.
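The tool log mentioned above does not need special software. As a minimal sketch, each use of an AI tool could be recorded as one JSON line in an append-only file; the field names, the `log_entry` and `append_to_log` helpers, and the sample values are assumptions for illustration.

```python
import json
from datetime import datetime, timezone


def log_entry(project: str, tool: str, purpose: str, reviewer: str) -> str:
    """Serialize one AI-usage record as a single JSON line."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "project": project,
        "tool": tool,
        "purpose": purpose,
        "reviewer": reviewer,
    }
    return json.dumps(record, sort_keys=True)


def append_to_log(path: str, line: str) -> None:
    """Append a record to the log file, one JSON object per line."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(line + "\n")


print(log_entry("spring-campaign", "ChatGPT", "outline draft", "J. Editor"))
```

An append-only file with timestamps gives you a simple chronological record to hand over if an audit asks which tools touched which project.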

6. Keep Disclosures Consistent

Ensuring consistent disclosure across blogs, videos, social media, and newsletters is essential to maintaining clarity about AI usage. When labels vary across platforms, it can create skepticism and confusion. This inconsistency risks eroding trust with your audience.

Consistency aligns with earlier recommendations about visible labeling. According to a Gartner survey, 45% of CMOs are currently using generative AI, but fewer than half have formal policies for labeling AI-generated content. Michael Andrews, a Content Strategist at Kontent.ai, emphasizes:

"Transparency plays an important indirect role in regulating trust and the perception of performance".

Real-world examples show how inconsistent disclosures can harm credibility. In November 2023, Sports Illustrated faced public backlash after using fictitious author names and AI-generated headshots without uniform disclosure. Similarly, CNET dealt with unionization efforts after publishing AI-generated articles without clear, consistent transparency across their platforms.

To avoid these pitfalls, establish a single, organization-wide policy that defines when and how to disclose AI use, regardless of which AI tools or platforms are used. Use standardized phrases like "AI-assisted" or "Generated by AI" consistently across all formats. For instance, if you label a video as AI-generated on YouTube, ensure the same label appears when sharing the content on TikTok or Instagram. This consistent approach not only helps meet compliance standards but also demonstrates a commitment to transparency.

For practical application, consider implementing a headless CMS to enforce mandatory disclosure fields across all content types. This ensures both visible labels for users and machine-readable metadata for automated systems are applied consistently. Additionally, adopt naming conventions in your Digital Asset Management system to flag AI-assisted assets before they are published.
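A mandatory disclosure field can be enforced with a small validation step before publishing. The sketch below is not tied to any particular CMS: the entry is a plain dictionary, and the field names, the `validate_entry` function, and the "No AI used" option are assumptions added for illustration. The other two labels are the standardized phrases suggested earlier.

```python
# "AI-assisted" and "Generated by AI" come from the text; "No AI used" is an
# assumed third option so that every entry must make an explicit choice.
ALLOWED_LABELS = {"AI-assisted", "Generated by AI", "No AI used"}


def validate_entry(entry: dict) -> list[str]:
    """Return a list of problems that should block publishing (empty if none)."""
    label = entry.get("ai_disclosure_label")
    if label not in ALLOWED_LABELS:
        return [f"ai_disclosure_label must be one of {sorted(ALLOWED_LABELS)}"]
    errors = []
    if label != "No AI used":
        if not entry.get("ai_tools"):
            errors.append("ai_tools must list at least one tool when AI was used")
        if not entry.get("human_reviewer"):
            errors.append("human_reviewer is required when AI was used")
    return errors


draft = {"title": "Spring launch", "ai_disclosure_label": "AI-assisted", "ai_tools": ["ChatGPT"]}
print(validate_entry(draft))
# prints ['human_reviewer is required when AI was used']
```

Because the allowed labels live in one place, every channel that reads the entry shows the same wording.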

As Dipti Padalkar aptly states:

"Consistency matters more than volume".

7. Match Disclosure Level to Content Risk

When evaluating AI's role in content creation, it's important to consider how it impacts authenticity, identity, or representation. This is where the Materiality Test comes into play. For example, the stakes are very different between a spell-checked blog post and a deepfake video. The level of disclosure should reflect the potential risk tied to the content.

Building on earlier transparency practices, disclosure should align with the content's risk category. Here’s a breakdown of these categories:

- Low-risk content: Routine tasks like formatting, color correction, or scheduling. These rarely require public disclosure.

- Medium-risk content: AI-drafted material that has been thoroughly reviewed and edited by humans, such as marketing blogs or product descriptions. A brief disclosure may be appropriate here.

- High-risk content: Synthetic media (like AI-generated voiceovers or digital avatars) and sensitive topics, often referred to as YMYL (Your Money or Your Life). This includes areas like health advice, financial guidance, or investigative reporting. These require explicit and detailed disclosure.

Failing to comply with transparency regulations can lead to significant penalties. For instance, the EU AI Act imposes fines of up to €15 million or 3% of annual turnover. Similarly, the FTC can levy penalties of up to $53,088 per violation, with additional state-specific requirements also coming into play.
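A quick worked example shows how the EU figure scales with company size. It assumes the "whichever is higher" reading that applies to larger undertakings, which the text above does not spell out, and smaller companies may be treated differently. The function name and sample turnovers are illustrative.

```python
def max_fine_eur(annual_turnover_eur: int,
                 fixed_cap_eur: int = 15_000_000,
                 turnover_percent: int = 3) -> int:
    """Upper bound of the fine, assuming the higher of the two limits applies."""
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_based = annual_turnover_eur * turnover_percent // 100
    return max(fixed_cap_eur, turnover_based)


print(max_fine_eur(200_000_000))    # 3% is 6 million, so the 15 million cap applies
print(max_fine_eur(1_000_000_000))  # 3% is 30 million, which exceeds the fixed cap
```

For any company with turnover above €500 million, the percentage-based limit is the larger of the two.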

Transparent disclosure not only helps with compliance but also builds consumer trust. The IAB AI Transparency and Disclosure Framework reinforces this idea:

"Disclosure is required when AI materially affects authenticity, identity, or representation in ways that could mislead consumers".

To maintain both compliance and trust, tailor your disclosure practices to the level of risk. For high-risk content like news articles or medical advice, use detailed, per-asset labels and explain the human oversight involved. Medium-risk content might only need a brief author bio or a page-level note. Low-risk tasks, while not requiring public disclosure, should be documented internally for audit purposes. By aligning disclosure with content risk, you enhance transparency and safeguard consumer confidence.
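The three tiers above can be written down as a simple decision rule so that editors apply them the same way. This is a sketch under the assumption that three yes/no questions are enough to place a piece of content; the `Risk` enum, the `classify` function, and its parameters are hypothetical, and the disclosure wording paraphrases the guidance above.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Disclosure expected for each tier, paraphrasing the guidance above.
DISCLOSURE_BY_RISK = {
    Risk.LOW: "no public label; document internally for audits",
    Risk.MEDIUM: "brief author-bio or page-level note",
    Risk.HIGH: "detailed per-asset label plus explanation of human oversight",
}


def classify(synthetic_media: bool, ymyl_topic: bool, ai_drafted: bool) -> Risk:
    """Place content in a risk tier using three yes/no questions."""
    if synthetic_media or ymyl_topic:
        return Risk.HIGH
    if ai_drafted:
        return Risk.MEDIUM
    return Risk.LOW


tier = classify(synthetic_media=False, ymyl_topic=False, ai_drafted=True)
print(tier.value, "->", DISCLOSURE_BY_RISK[tier])
# prints medium -> brief author-bio or page-level note
```

Checking the high-risk conditions first means a health article drafted with AI is treated as high risk, not medium.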

Conclusion

These seven practices do more than just satisfy regulatory requirements - they help build trust that lasts. Transparency in AI advertising isn't just a box to check; it has real impact. For example, transparent AI advertising has been shown to create a 73% increase in perceived trustworthiness and a 96% boost in overall company trust. As Master The Monster (MTM) puts it:

"The brands gaining ground aren't waiting for enforcement - they're labeling proactively and converting transparency into measurable trust".

By integrating these practices, you're aligning your content strategy with both legal compliance and what your audience expects. Staying ahead means keeping up with regulatory updates from the FTC, following platform-specific guidelines from YouTube, Meta, and TikTok, and engaging with frameworks like the IAB's AI Transparency and Disclosure Framework, introduced in January 2026. You might also explore using the C2PA metadata standard to ensure your transparency efforts stay intact as your content moves across platforms.

Take the time to audit your use of AI blog generators and establish clear internal policies. Keep records of your prompts, document the AI tools you rely on, and build disclosure checkpoints into your production process. Treating transparency as an integral step - not an afterthought - can make all the difference. Tools like the AI Blog Generator Directory (https://aibloggenerators.com) can guide you in identifying which features need disclosure, helping you implement these strategies effectively. This approach ensures you stay compliant, maintain credibility, and continue to earn your audience’s trust in a landscape where regulations and expectations are constantly evolving.

FAQs

What counts as “AI-assisted” vs “AI-generated”?

AI-assisted content involves substantial human participation, where AI tools are used to aid or enhance the creation process. In contrast, AI-generated content is primarily or entirely produced by AI systems, often with little to no human involvement. The distinction comes down to how much human oversight and input are involved during the creation process.

Where should I place AI disclosures so people actually see them?

Make sure AI disclosures are placed where readers will naturally notice them - like at the beginning of the content, close to the title, or within the main text near AI-generated sections. For added clarity, use visible labels or badges directly on AI-generated elements, such as next to images or specific text areas.

Avoid burying disclosures in hard-to-find spots. The goal is to make them obvious and impossible to miss, helping to build transparency and trust with your audience.

How do I add AI disclosure metadata that stays when content is shared?

To keep AI disclosure metadata intact when content is shared, embed it in the file or page itself rather than relying only on a visible label. For images and video, that means standards such as C2PA credentials or the IPTC "Digital Source Type" field; for web pages and documents, it can mean tags in the HTML code or entries in the document properties. Standardized fields are more likely than custom ones to be recognized when content is reused on other platforms. Because many platforms strip metadata when files are uploaded or resized, check that your publishing workflow preserves it through to final distribution.