Online content is in a trust crisis. By 2026, 90% of online material is projected to be AI-generated, much of it produced with AI blog generators, and 62% of it is projected to be fake. Deepfake incidents rose by 900% between 2023 and 2025, causing real-world losses such as a $25M fraud case in 2024. This rising deception demands reliable content verification systems.

The solution? AI and blockchain working together. AI detects tampering by analyzing the content itself, much as AI writer tools analyze text for quality, while blockchain provides tamper-proof timestamps and ownership records. This combination lets digital assets be traced back to their origin, offering a safeguard against fraud.

Key insights:

- AI-assisted techniques like perceptual hashing recognize content even after edits, while blockchain secures records with cryptographic hashes.

- WIPO now recognizes blockchain timestamps as valid evidence in copyright disputes.

- Standards like C2PA embed creator info and history directly into content metadata.

Challenges remain - scaling systems, privacy risks, and technical complexity - but hybrid approaches combining AI and blockchain are setting new benchmarks for verifying content authenticity. With fraud losses expected to hit $40B by 2027, these systems are becoming essential for digital trust.

How AI and Blockchain Work Together for Content Provenance

AI helps detect content tampering, while blockchain securely records creation timestamps and ownership. Together, they form a system for verifying content that's both intelligent and legally reliable.

AI's Role in Content Creation and Analysis

AI plays a key role in creating and validating digital media, ensuring its authenticity. Advanced neural networks, trained on millions of images, can spot subtle inconsistencies in lighting, shadows, or compression patterns - clues that indicate whether media was created by a generative adversarial network (GAN) or captured by a real camera. For text, AI tools assess perplexity (predictability of the writing) and burstiness (variation in sentence structure) to differentiate between human and machine-generated content.
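The two text signals mentioned above can be approximated in a few lines. The sketch below is illustrative only: it measures burstiness as the coefficient of variation of sentence lengths and computes perplexity under a toy unigram model, whereas production detectors score text with a neural language model. The function names and the add-one smoothing are assumptions made for this example.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def tokenize(text: str) -> list:
    """Lowercase word tokens; a deliberately crude tokenizer."""
    return re.findall(r"[a-z0-9']+", text.lower())


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence lengths, measured in words."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(tokenize(s)) for s in sentences]
    if len(lengths) < 2 or mean(lengths) == 0:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model
    estimated from `reference` (a toy stand-in for a language model)."""
    counts = Counter(tokenize(reference))
    total = sum(counts.values())
    vocab = len(counts) + 1  # one extra slot for unseen words
    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text contains no tokens")
    log_prob = sum(math.log((counts[t] + 1) / (total + vocab)) for t in tokens)
    return math.exp(-log_prob / len(tokens))
```

Lower perplexity means the text is more predictable under the model, and lower burstiness means sentence lengths are more uniform. Neither signal is conclusive on its own.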

Another powerful tool is perceptual hashing (pHash), which generates a 64-bit fingerprint based on an image's dominant visual features. Unlike traditional cryptographic hashes that change entirely with a single pixel modification, pHashes remain stable through minor edits like resizing or brightness adjustments. This makes them particularly useful for tracking content online, ensuring its origin and authenticity. For instance, in November 2025, a Hyderabad-based digital marketing agency used AI watermarking combined with blockchain registration on the Polygon network to detect 287 unauthorized uses of their content within 90 days. This effort recovered $47,000 through automated settlements, costing just $0.01 per piece, with a detection accuracy of 94%.
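To make the contrast between perceptual and cryptographic hashes concrete, here is a minimal sketch. It uses an average hash (aHash), a simpler relative of pHash that thresholds block averages instead of DCT coefficients, so it is not the pHash algorithm itself. The image is assumed to be a grayscale grid given as a list of rows of 0-255 integers.

```python
import hashlib


def average_hash(pixels: list, size: int = 8) -> int:
    """Return a size*size-bit fingerprint (64 bits by default)."""
    height, width = len(pixels), len(pixels[0])
    if height < size or width < size:
        raise ValueError("image is smaller than the hash grid")
    block_h, block_w = height // size, width // size
    cells = []
    for r in range(size):
        for c in range(size):
            block = [
                pixels[y][x]
                for y in range(r * block_h, (r + 1) * block_h)
                for x in range(c * block_w, (c + 1) * block_w)
            ]
            cells.append(sum(block) / len(block))
    threshold = sum(cells) / len(cells)
    bits = 0
    for value in cells:
        # One bit per cell: is this region brighter than the image average?
        bits = (bits << 1) | (1 if value > threshold else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two fingerprints."""
    return bin(a ^ b).count("1")


def sha256_hex(pixels: list) -> str:
    """Cryptographic hash of the raw pixel bytes."""
    return hashlib.sha256(bytes(v for row in pixels for v in row)).hexdigest()


if __name__ == "__main__":
    image = [[x * 10 + y for x in range(16)] for y in range(16)]
    brighter = [[v + 20 for v in row] for row in image]
    print(hamming(average_hash(image), average_hash(brighter)))
    print(sha256_hex(image) == sha256_hex(brighter))
```

In the demo, a uniform brightness shift leaves every cell on the same side of the average, so the fingerprints match while the SHA-256 digests differ.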

AI also embeds invisible markers directly into content during its creation. For images, this involves altering pixel patterns in frequency domains, while for text, it subtly adjusts word choice and sentence structure. On the detection side, Microsoft's Video Authenticator analyzes frame consistency and temporal patterns to spot manipulated videos, achieving about 85% accuracy.

Blockchain's Contribution to Transparency

Blockchain adds a layer of transparency that AI alone cannot provide: a permanent, decentralized record of timestamps and ownership. When content is created, its cryptographic hash is stored on a blockchain, creating an unalterable audit trail that proves a specific version existed at a given time.
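The registration step described above can be sketched with an in-memory, hash-chained list standing in for a real blockchain. `ToyLedger` and its methods are hypothetical names for this illustration. A real deployment would submit the content hash to a network rather than keep it in a Python list.

```python
import hashlib
import json
import time
from typing import Optional


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


class ToyLedger:
    """Append-only list of entries, each committing to the previous one."""

    def __init__(self) -> None:
        self.entries = []

    @staticmethod
    def _hash_body(body: dict) -> str:
        return sha256_hex(json.dumps(body, sort_keys=True).encode())

    def register(self, content: bytes, owner: str,
                 timestamp: Optional[float] = None) -> dict:
        """Store only the content hash, never the content itself."""
        body = {
            "content_hash": sha256_hex(content),
            "owner": owner,
            "timestamp": time.time() if timestamp is None else timestamp,
            "prev_hash": self.entries[-1]["entry_hash"] if self.entries else "0" * 64,
        }
        entry = dict(body, entry_hash=self._hash_body(body))
        self.entries.append(entry)
        return entry

    def find(self, content: bytes) -> Optional[dict]:
        """Return the entry proving this exact version was registered."""
        digest = sha256_hex(content)
        return next((e for e in self.entries if e["content_hash"] == digest), None)

    def is_intact(self) -> bool:
        """Recompute the chain; any edited entry breaks a link."""
        prev = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            if entry["prev_hash"] != prev or self._hash_body(body) != entry["entry_hash"]:
                return False
            prev = entry["entry_hash"]
        return True
```

Because each entry includes the hash of its predecessor, changing an old record invalidates every later link. This mirrors, in miniature, why on-chain timestamps are hard to rewrite.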

"Blockchain provides immutable timestamps and ownership records, while AI analyzes metadata, pixel patterns, and compression artifacts to detect tampering." - ReelMind

This approach has already been adopted by organizations like the Associated Press. In 2024, they began using blockchain to timestamp photojournalism submissions before publication, ensuring the integrity of visual assets. Similarly, Numbers Protocol developed a "Capture" system that bundles an asset's cryptographic hash, timestamp, and creator's identity into a Proof-of-Existence (PoE) container, making the content traceable and verifiable.

Given the sheer volume of AI-generated content - estimated at 90 billion pieces globally in 2024 - systems rely on Merkle trees to group multiple hashes into a single root hash, so that one on-chain transaction can cover a whole batch. This approach keeps verification costs low while maintaining security. Platforms increasingly use low-fee networks like Polygon and Solana, where registration costs drop to less than $0.01 per piece. These blockchain records carry legal weight, as the World Intellectual Property Organization (WIPO) now recognizes blockchain timestamps as valid evidence in copyright disputes across 193 member countries.
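As a rough illustration of the Merkle batching mentioned above, the sketch below builds a root from a list of content hashes and produces a proof that one hash belongs to the batch. Duplicating the last node on odd-sized levels is one common convention and is an assumption here. Individual platforms may pad differently.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(leaf_hashes: list) -> list:
    """Return every level of the tree, from the leaves up to the root."""
    if not leaf_hashes:
        raise ValueError("need at least one leaf")
    levels = [list(leaf_hashes)]
    while len(levels[-1]) > 1:
        current = levels[-1]
        if len(current) % 2:
            current = current + [current[-1]]  # pad odd levels
        levels.append([h(current[i] + current[i + 1])
                       for i in range(0, len(current), 2)])
    return levels


def merkle_root(leaf_hashes: list) -> bytes:
    return build_levels(leaf_hashes)[-1][0]


def merkle_proof(leaf_hashes: list, index: int) -> list:
    """List of (sibling_hash, sibling_is_left) pairs from leaf to root."""
    proof = []
    for level in build_levels(leaf_hashes)[:-1]:
        if len(level) % 2:
            level = level + [level[-1]]
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify_proof(leaf_hash: bytes, proof: list, root: bytes) -> bool:
    node = leaf_hash
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root
```

Only the 32-byte root needs to be written on-chain. A verifier later checks a single item using a proof whose length grows with the logarithm of the batch size.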

These combined efforts of AI and blockchain are setting a new standard for ensuring content authenticity and provenance.

Recent Research on Blockchain-Backed Provenance

From early 2025 to early 2026, research has highlighted how blockchain-supported systems are verifying content authenticity on a large scale. These systems use a mix of perceptual hashing and decentralized ledgers to create tamper-resistant records, even when minor changes are made to the content.

Perceptual Hashing and Registry-Based Verification

In February 2026, researchers Apoorv Mohit, Bhavya Aggarwal, and Chinmay Gondhalekar from S&P Global introduced a framework that combines a Merkle Patricia Trie (MPT) on a public blockchain with a Burkhard–Keller (BK) tree for similarity searches. This system registers perceptual hashes at the point of content creation and identifies AI-generated images by calculating a similarity score based on Hamming distance. When tested on a dataset of 1 million registered hashes, the system reduced the search to around 2,055 candidates by examining only 137 "buckets", accounting for 1–2 bit flips.

"The proposed system does not aim to universally detect all synthetic images, but instead focuses on verifying the provenance of AI-generated content that has been registered at creation time."

– Apoorv Mohit, Market Intelligence, S&P Global
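The similarity search at the heart of that framework can be sketched with a small BK-tree over 64-bit hashes. This is a generic textbook BK-tree, not the authors' implementation, and the `similarity` formula (one minus the fraction of differing bits) is an assumed way of turning Hamming distance into a score.

```python
def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def similarity(a: int, b: int, n_bits: int = 64) -> float:
    """1.0 for identical hashes, 0.0 when every bit differs."""
    return 1.0 - hamming(a, b) / n_bits


class BKTree:
    """Metric tree: each child edge is labeled with its distance to the parent."""

    def __init__(self) -> None:
        self.root = None  # node layout: [hash_value, {distance: child_node}]

    def add(self, value: int) -> None:
        if self.root is None:
            self.root = [value, {}]
            return
        node = self.root
        while True:
            d = hamming(node[0], value)
            if d == 0:
                return  # already registered
            child = node[1].get(d)
            if child is None:
                node[1][d] = [value, {}]
                return
            node = child

    def search(self, query: int, max_distance: int) -> list:
        """Return sorted (distance, hash) pairs within max_distance of query."""
        results = []
        stack = [self.root] if self.root is not None else []
        while stack:
            value, children = stack.pop()
            d = hamming(value, query)
            if d <= max_distance:
                results.append((d, value))
            # Triangle inequality: only these edges can lead to matches.
            for edge, child in children.items():
                if d - max_distance <= edge <= d + max_distance:
                    stack.append(child)
        return sorted(results)
```

The pruning step is what lets a lookup skip most of the registry. On the bucket figure quoted above, one possible reading (an assumption, since the source does not spell it out) is a 16-bit bucket key: the keys within two bit flips of a given key number 1 + 16 + 120 = 137.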

Additionally, a study published in March 2025 unveiled the Proteus Framework, developed by researchers from Zellic and MIT. This framework integrates "DinoHash", derived from the DINOv2 neural network, with Multi-Party Fully Homomorphic Encryption (MP-FHE). DinoHash enhanced bit accuracy by 12%, and when paired with advanced AI detection models, improved the classification accuracy of synthetic images by 25%. These technical advancements are further bolstered by secure credential standards that strengthen content verification.

Content Credential Standards and Metadata

Beyond technical improvements, industry standards have evolved to embed provenance data directly into content metadata. The specification from the Coalition for Content Provenance and Authenticity (C2PA) has emerged as the leading standard for cryptographically signed metadata. By early 2026, the C2PA network had grown to over 6,000 members and affiliates. A C2PA manifest consolidates timestamps, creator identity, and edit history into a single signed record that accompanies the content.
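The idea of a signed manifest can be illustrated with a simplified stand-in. The sketch below is not the C2PA format: real manifests use certificate-based signatures and a binary container, while this example uses JSON and an HMAC with a shared key purely to show how a signature binds creator, timestamp, and edit history to a content hash. All field names are hypothetical.

```python
import hashlib
import hmac
import json


def _sign(claim: dict, key: bytes) -> str:
    payload = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def build_manifest(content: bytes, creator: str, created_at: str,
                   edit_history: list, key: bytes) -> dict:
    """Bundle provenance fields with the content hash, then sign the bundle."""
    claim = {
        "content_hash": hashlib.sha256(content).hexdigest(),
        "creator": creator,
        "created_at": created_at,
        "edit_history": list(edit_history),
    }
    return {"claim": claim, "signature": _sign(claim, key)}


def verify_manifest(content: bytes, manifest: dict, key: bytes) -> bool:
    """Valid only if the claim is unmodified and matches these exact bytes."""
    signature_ok = hmac.compare_digest(
        _sign(manifest["claim"], key), manifest["signature"]
    )
    hash_ok = manifest["claim"]["content_hash"] == hashlib.sha256(content).hexdigest()
    return signature_ok and hash_ok
```

Changing either the content or any field of the claim makes verification fail, which is the property a signed manifest is meant to provide.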

In January 2026, John Collomosse from Adobe Research introduced the "Three Pillars of Provenance" and launched TrustMark, an open-source invisible watermarking algorithm designed to endure social media processing. Microsoft's InvisMark, also evaluated in January 2026, achieved a Peak Signal-to-Noise Ratio (PSNR) of approximately 51 dB, meaning the watermark is visually imperceptible, and retained over 97% bit accuracy under various image manipulations like JPEG compression and Gaussian blur. These watermarks embed 256-bit payloads with error correction, which helps the unique identifiers survive such manipulations.
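The two watermark metrics quoted above are straightforward to compute. The sketch below assumes 8-bit pixel values passed as flat lists and a payload represented as a Python integer. It shows the standard PSNR definition and a simple bit-accuracy count, not any particular watermarking library's API.

```python
import math


def psnr(original: list, marked: list, max_value: int = 255) -> float:
    """Peak Signal-to-Noise Ratio in decibels between two pixel sequences."""
    if len(original) != len(marked) or not original:
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((a - b) ** 2 for a, b in zip(original, marked)) / len(original)
    if mse == 0:
        return math.inf  # identical images
    return 10 * math.log10(max_value ** 2 / mse)


def bit_accuracy(embedded: int, recovered: int, n_bits: int = 256) -> float:
    """Fraction of payload bits recovered correctly after an attack."""
    mask = (1 << n_bits) - 1
    wrong = bin((embedded ^ recovered) & mask).count("1")
    return 1 - wrong / n_bits
```

For a sense of scale, a PSNR near 51 dB on an 8-bit image corresponds to a mean squared error of roughly 0.5, and 97% bit accuracy on a 256-bit payload means about 8 bits are wrong before error correction.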

"Using a C2PA provenance manifest for media created and signed in a high security environment enables high-confidence validation."

– Eric Horvitz, Chief Scientific Officer, Microsoft

In early 2026, Mintall introduced "Mintall Keep", a tool that automatically generates C2PA content credentials and embeds blockchain ownership information directly into product images. This tool also includes creation history in a permanent blockchain record, offering a robust solution for DMCA takedown disputes. These developments illustrate the growing synergy between AI and blockchain technologies, setting new benchmarks for ensuring content authenticity.

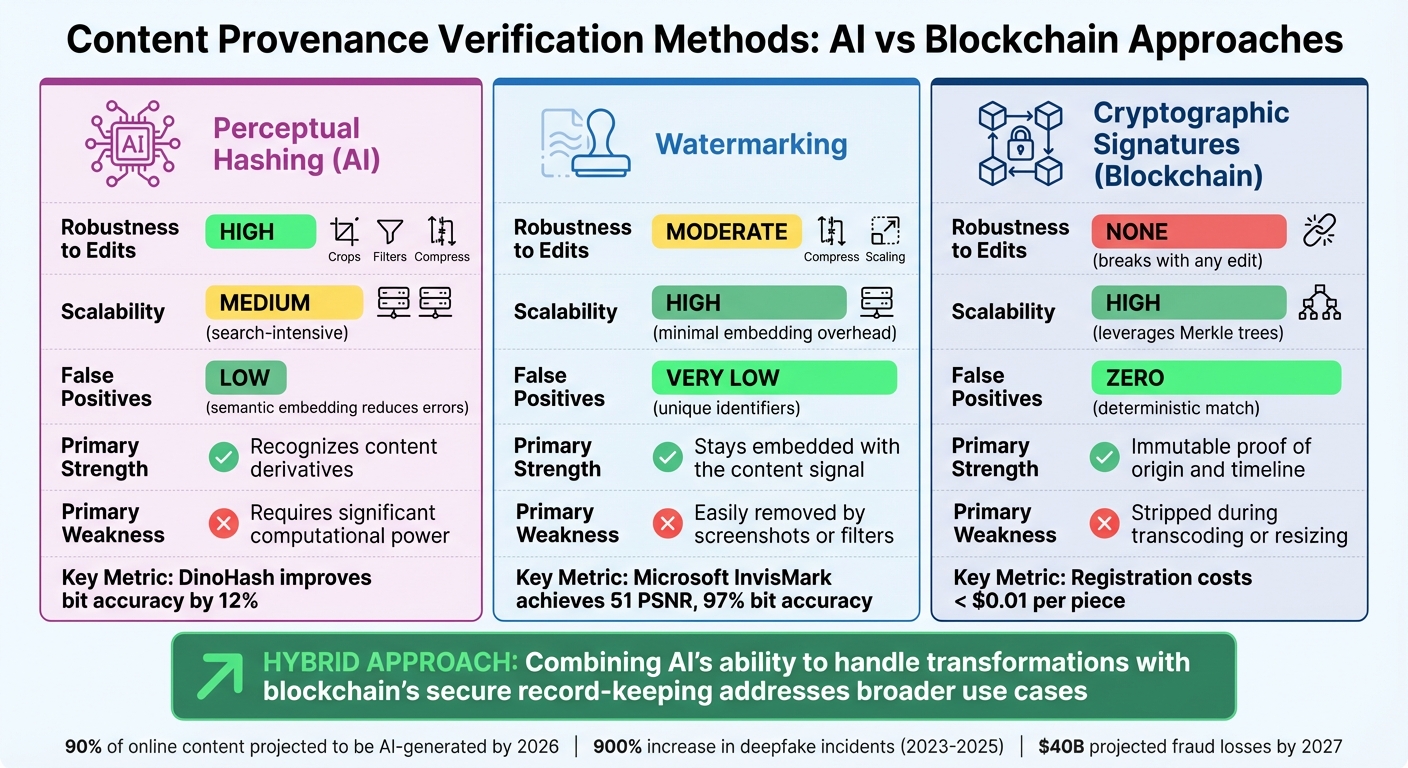

Comparison of Provenance Verification Methods

Different verification methods, each combining AI's detection abilities with blockchain's unchangeable records in its own way, can establish the provenance of content, including material produced with AI content generator tools. Each approach has its own trade-offs when it comes to handling altered content, scaling to large datasets, and minimizing false matches. For example, blockchain-backed cryptographic signatures provide mathematical certainty but fail completely if even a single pixel is altered. On the other hand, AI-based perceptual hashing can handle changes like cropping or color tweaks but requires more computational resources. Meanwhile, watermarking holds up against compression but can be removed by social media platforms. The table below highlights these trade-offs for a clearer understanding.

Choosing the right method depends heavily on your specific needs. If you're looking for absolute proof of origin for untouched files, blockchain-anchored cryptographic signatures are ideal, offering zero false positives and unalterable timestamps. However, as Nikhil John from InCyan points out, a watermark alone doesn't provide details about when it was embedded, who did it, or its legal context. This is why hybrid models are gaining popularity - they combine AI's ability to handle transformations with blockchain's secure record-keeping.

Recent research supports this trend. For instance, AI-enhanced perceptual hashing, like DinoHash, improves bit accuracy by 12% compared to traditional watermarking. Similarly, Microsoft's InvisMark achieves a PSNR of approximately 51 dB and over 97% bit accuracy, even under conditions like compression and blur.

Comparison Table of Verification Methods

| Method | Robustness to Edits | Scalability | False Positives | Primary Strength | Primary Weakness |
|---|---|---|---|---|---|
| Perceptual Hashing (AI) | High (handles crops, filters, compression) | Medium (search-intensive) | Low (semantic embedding reduces errors) | Recognizes content derivatives | Requires significant computational power |
| Watermarking | Moderate (survives compression, some scaling) | High (minimal embedding overhead) | Very Low (unique identifiers) | Stays embedded with the content signal | Easily removed by screenshots or filters |
| Cryptographic Signatures (Blockchain) | None (breaks with any edit) | High (leverages Merkle trees) | Zero (deterministic match) | Immutable proof of origin and timeline | Stripped during transcoding or resizing |

This comparison makes one thing clear: no single method can address every challenge. Cryptographic signatures excel at proving "who" and "when", but even minor edits render them useless. Perceptual hashing is robust against real-world changes but demands more processing power. Watermarking travels with the content but is vulnerable to removal. The future likely lies in hybrid approaches, where AI detects altered content while blockchain ensures a tamper-proof custody trail. By combining their strengths, these methods can address a broader range of use cases effectively.
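One way to picture the hybrid approach is a two-stage lookup: try the exact cryptographic match first, then fall back to a perceptual near-match. The sketch below is a hypothetical illustration. The `Record` type, the 6-bit threshold, and the assumption that the caller supplies a precomputed 64-bit perceptual hash are all choices made for this example.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Record:
    sha256: str   # exact-content hash anchored on the ledger
    phash: int    # 64-bit perceptual hash registered at creation
    owner: str


def hamming(a: int, b: int) -> int:
    return bin(a ^ b).count("1")


def verify(content: bytes, phash: int, registry: list,
           max_bits: int = 6) -> Tuple[str, Optional[Record]]:
    """Classify content as an exact match, a likely derivative, or unknown."""
    digest = hashlib.sha256(content).hexdigest()
    for record in registry:
        if record.sha256 == digest:
            return "exact", record  # untouched file, deterministic proof
    nearest = min(registry, key=lambda r: hamming(r.phash, phash), default=None)
    if nearest is not None and hamming(nearest.phash, phash) <= max_bits:
        return "derivative", nearest  # edited copy of a registered work
    return "unknown", None
```

The exact branch keeps the zero-false-positive guarantee for untouched files, while the fallback accepts a small, tunable risk of false matches in exchange for robustness to edits.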

Challenges and Future Directions

AI and blockchain integration holds great potential, but several hurdles need to be addressed before it can be widely implemented.

Scalability is a major challenge. Provenance data often far exceeds the size of the original content, placing immense strain on networks and storage systems. With global data volumes projected to reach 175 zettabytes by 2025, recording every piece of metadata on a blockchain is impractical. This issue also ties into privacy concerns, as handling such vast amounts of data increases the risk of exposure.

Privacy concerns add another layer of complexity. Many blockchain systems make sensitive information - such as usernames, email addresses, and file names - visible to all users. This openness clashes with regulations like the GDPR, which enforces the "right to be forgotten." Since blockchain data is immutable, altering or removing information is no simple task. Solutions like zero-knowledge proofs and off-chain storage, where only cryptographic hashes are stored on the blockchain, offer ways to verify content while safeguarding privacy. However, these solutions introduce additional technical challenges.
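The off-chain pattern described above can be sketched as a salted hash commitment: the ledger sees only a digest, while the personal data and a random salt stay in ordinary, deletable storage. Function names here are hypothetical, and whether erasing the off-chain data satisfies a given regulation is a legal question this sketch does not settle.

```python
import hashlib
import hmac
import secrets
from typing import Tuple


def commit(record: bytes) -> Tuple[str, bytes]:
    """Return (commitment for the chain, salt to keep off-chain)."""
    salt = secrets.token_bytes(32)
    commitment = hashlib.sha256(salt + record).hexdigest()
    return commitment, salt


def verify(record: bytes, salt: bytes, commitment: str) -> bool:
    """Anyone holding the record and salt can check it against the chain."""
    candidate = hashlib.sha256(salt + record).hexdigest()
    return hmac.compare_digest(candidate, commitment)


if __name__ == "__main__":
    on_chain, salt = commit(b"creator=alice@example.com;file=photo.jpg")
    off_chain_store = {"record": b"creator=alice@example.com;file=photo.jpg",
                       "salt": salt}
    print(verify(off_chain_store["record"], off_chain_store["salt"], on_chain))
    # Honoring a deletion request: drop the off-chain copy and the salt.
    off_chain_store.clear()
```

The random salt matters: without it, low-entropy fields such as an email address could be recovered from the on-chain digest by guessing. Once the salt and record are deleted, the remaining digest can no longer be tied back to the person.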

Technical complexity remains a barrier. Many media organizations and journalists lack the necessary expertise to implement blockchain-based verification tools. The failure of the Civil project highlights how technical and governance missteps can derail such efforts. This gap in knowledge is particularly concerning given that 74% of consumers now question the authenticity of photos or videos, even from reputable news outlets.

Regulatory frameworks are still catching up. Laws like the Digital Authenticity and Provenance Act 2025 now require organizations to disclose their digital content verification practices. Additionally, Gartner has identified digital provenance as one of the top 10 technology trends expected to reshape IT by 2030. This shift is driving interest in hybrid systems that combine AI’s detection strengths with blockchain’s secure record-keeping. For instance, companies like Truepic have moved away from purely blockchain-based models in favor of Public Key Infrastructure (PKI) systems, which improve efficiency while maintaining cryptographic security. Resolving these regulatory and technical issues is crucial for creating a secure and interoperable framework.

"The focus must remain on enhancing journalistic integrity and public trust to ensure that these technological advances benefit the field of journalism and, by extension, the democratic processes it supports."

– Malin Picha Edwardsson and Walid Al-Saqaf, Researchers

The adoption of open standards like C2PA offers a promising way to enable cross-platform compatibility. With AI-generated synthetic content projected to make up 90% of online content by 2026, addressing these issues has never been more critical. Future advancements will likely depend on balancing transparency with privacy, scaling infrastructure to handle enormous data volumes, and providing user-friendly tools, including AI tools for writing and blogging, that don't require advanced technical skills. Tackling these obstacles will help establish robust systems that verify content authenticity across platforms.

Conclusion

The partnership of AI and blockchain offers a powerful shield against the growing tide of synthetic content. With 74.2% of newly published web pages now containing AI-generated material and deepfake cases escalating by an astonishing 900% between 2023 and 2025, ensuring trust in digital media has never been more urgent. Blockchain provides an immutable record of content origins, while AI detects tampering by analyzing intricate pixel-level anomalies.

Studies reveal that registry-based verification surpasses traditional reactive detection methods. By registering content at the moment of creation and embedding C2PA metadata linked to blockchain timestamps, organizations can produce legal-grade evidence for ownership disputes and DMCA takedowns. Traditional metadata is often stripped during file transformations, whereas a watermark travels with the content and can be matched against a record anchored to an unchangeable ledger. This approach marks a critical step forward in creating a reliable verification ecosystem.

Experts highlight that adopting registry-based verification and open standards like C2PA reflects a maturing industry. As Nikhil John from InCyan explains:

"Blockchain anchored content provenance should be understood as infrastructure rather than as a stand alone product."

This foundational infrastructure is becoming essential as U.S. fraud losses from AI-driven deception are expected to hit $40 billion by 2027.

To strengthen digital trust, a multi-layered security approach is key - combining perceptual hashing, invisible watermarking, and blockchain anchoring. These tools address the weaknesses of traditional metadata while reducing the risks posed by AI-enabled fraud. With 45% of consumers willing to pay more for blockchain-verified products and 55% expressing discomfort with AI-generated media, provenance technology offers both protection and a competitive edge. The solutions are already here to build transparent, trustworthy content ecosystems, often leveraging advanced AI tools for blog content creation to maintain quality while preserving provenance, so that authenticity remains a cornerstone of the digital era. This integrated strategy is a step toward safeguarding trust in an increasingly AI-driven world.

FAQs

How do AI and blockchain work together to prove content is real?

AI and blockchain work hand-in-hand to verify the authenticity of digital content by utilizing their individual capabilities. Blockchain ensures a secure, unchangeable record of content origin, including details like timestamps and ownership. Meanwhile, AI examines metadata and forensic markers to identify alterations or deepfakes. This combination creates a traceable chain of custody, allowing creators and organizations to confidently validate digital content's integrity while combating misinformation and unauthorized usage.

What’s the difference between perceptual hashing, watermarking, and blockchain signatures?

Perceptual hashing, watermarking, and blockchain signatures each offer unique approaches to verifying content authenticity and tracking its origin.

- Perceptual hashing generates a digital "fingerprint" from visual content. This allows for quick comparisons to identify similar or identical media, making it useful for detecting duplicates or altered versions.

- Watermarking embeds hidden signals directly into the media itself. These signals can serve as indicators of copyright ownership or as a tool for authentication, often remaining invisible to the casual viewer.

- Blockchain signatures rely on cryptographic methods to create attestations stored on a blockchain. This ensures a tamper-proof record of the content's origin and integrity, providing a transparent and secure way to verify ownership and provenance.

Each method caters to different needs, from copyright protection to ensuring content integrity.

How can provenance systems protect privacy and still meet U.S. regulations?

Provenance systems safeguard privacy while adhering to U.S. regulations by leveraging methods such as hardware-rooted authentication and cryptographic techniques to anonymize device identities. For instance, hardware-based certificates can confirm authenticity without disclosing personal details. Additionally, blockchain technology adds a tamper-resistant layer for recording content provenance, ensuring both transparency and traceability. This approach aligns with privacy laws while preserving user anonymity.