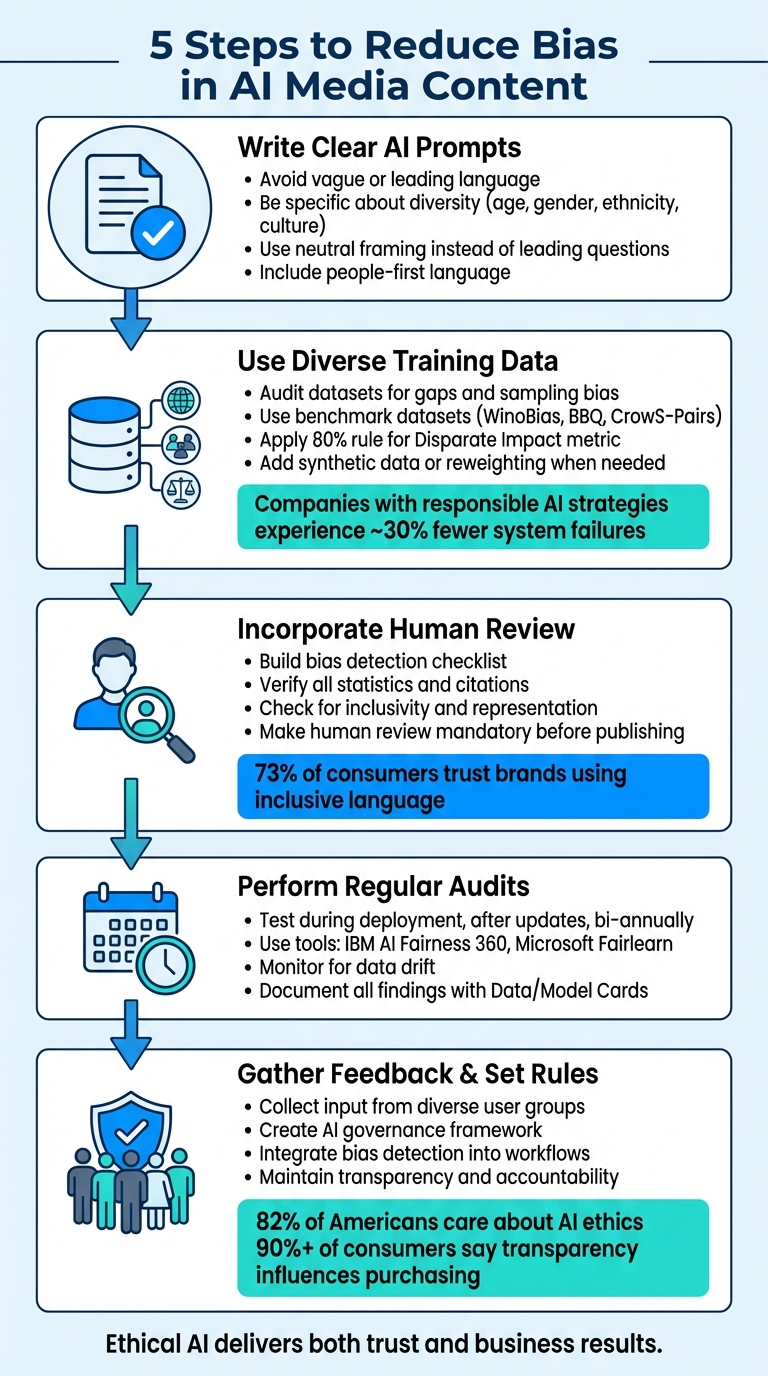

AI content often reflects biases from its training data, which can harm trust and inclusivity. This guide outlines five actionable steps to address these issues:

- Write Clear AI Prompts: Avoid vague or leading language. Utilizing top AI tools for content writing can help streamline this process. Be specific about diversity in age, gender, ethnicity, and culture to prevent stereotypes.

- Use Diverse Training Data: Audit datasets for gaps and adjust them to represent all groups fairly.

- Incorporate Human Review: Build a checklist to catch biases AI might miss, and verify facts before publishing.

- Perform Regular Audits: Test outputs periodically to identify new biases or data drift issues.

- Gather Feedback and Set Rules: Involve diverse perspectives and create governance policies to maintain accountability.

5 Steps to Reduce Bias in AI-Generated Media Content



Step 1: Create Better AI Prompts

The quality of your AI's output hinges on how clear and detailed your prompts are, especially when using top AI tools for writing and blogging. Vague or overly general prompts tend to produce stereotypical responses. For instance, asking for "a person" or "a successful entrepreneur" often results in the AI defaulting to common patterns from its training, which are frequently Western-centric, young, or male representations.

AI is trained to be helpful, but this can sometimes mean it validates biased assumptions without question. Take the example of a prompt like, "Why is this feature brilliant?" The AI is likely to agree rather than offer a balanced critique. Nicholas Borg, an AI Specialist, sheds light on this issue:

"AI is trained to be a 'Yes-man'... 'helpfulness,' in training, often gets conflated with agreeableness".

Write Clear and Neutral Prompts

To avoid bias, steer clear of vague language and leading questions. For example, instead of asking, "Don't you think this is a good idea?" - which prompts the AI to agree - frame your question neutrally, like, "Analyze the advantages and disadvantages of this idea". This approach encourages the AI to explore multiple perspectives rather than reinforcing your assumptions.

Specificity is key to reducing bias. The more context you provide about tone, audience, and diversity, the less the AI relies on default patterns. Lucie Simonova from Kontent.ai emphasizes:

"Bias often begins with vague instructions. The more context you give, the better the output".

A real-world example illustrates this point. In November 2025, a ChatGPT user asked it to "Create an image of a 'business leader' and a 'nurse' standing next to each other." The result? A male business leader and a female nurse - showing how vague prompts can reinforce occupational and gender stereotypes. To counter this, specify attributes like age, ethnicity, body type, and professional context.

| Prompt Type | Biased/Vague Example | Neutral/Clear Alternative |
|---|---|---|
| Subject | "A person reading a book" | "An elderly East Asian woman with silver hair and a warm smile reading a book" |
| Profession | "A successful entrepreneur" | "A diverse group of professionals from various ethnic backgrounds in a meeting" |
| Culture | "Cooking Mexican food" | "A family preparing mole poblano together in a kitchen in Oaxaca" |
| Feedback | "Don't you think this feature is good?" | "Critique this feature and identify potential flaws or user friction points" |

To encourage diverse and inclusive outputs, include varied cultural and demographic details in your prompts.

Add Diversity to Your Prompts

Another way to reduce bias is to deliberately add diversity to your prompts. Challenge default representations by incorporating inclusive details. Use people-first language such as "wheelchair user" instead of "confined to a wheelchair" to avoid reinforcing stereotypes. Similarly, when describing architecture or cultural elements, avoid generic labels like "Scandinavian style." Instead, describe specific features like "a living room with floor-to-ceiling windows and pale wood furniture".

For teams using essential AI text creators to produce large volumes of content, consider programmatic randomization to ensure diverse representation. Create lists of attributes - such as ethnicities, age groups, genders, and professions - and rotate these details across outputs. This method helps counter the AI's tendency to default to certain demographics. Before finalizing prompts, test them across various scenarios to check for consistent representation and avoid favoring specific groups or perspectives.
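The rotation idea can be sketched in a few lines of Python. This is only an illustration: the attribute lists, the template, and the `balanced_prompts` helper are hypothetical, and a real list should be reviewed by your own team. The sketch enumerates every combination of attributes, shuffles them once, and cycles through them so that no combination repeats before all have been used.

```python
import itertools
import random

# Hypothetical attribute pools; replace with lists your team has reviewed.
ATTRIBUTES = {
    "age": ["young adult", "middle-aged", "elderly"],
    "background": ["East Asian", "Black", "Latino", "South Asian", "white", "Middle Eastern"],
    "gender": ["woman", "man", "nonbinary person"],
}

# Hypothetical template; the profession is held fixed so only demographics vary.
TEMPLATE = "Photo of a software engineer: {age} {background} {gender}"


def balanced_prompts(template, attributes, n, seed=0):
    """Return n prompts that cycle through every attribute combination.

    Combinations are shuffled once (seeded, so runs are reproducible) and
    then repeated in that order, so each is used before any is reused.
    """
    keys = list(attributes)
    combos = list(itertools.product(*(attributes[k] for k in keys)))
    random.Random(seed).shuffle(combos)
    prompts = []
    for combo in itertools.islice(itertools.cycle(combos), n):
        prompts.append(template.format(**dict(zip(keys, combo))))
    return prompts


# 3 ages x 6 backgrounds x 3 genders = 54 combinations, each used exactly once.
prompts = balanced_prompts(TEMPLATE, ATTRIBUTES, n=54)
```

Cycling through a shuffled full list, rather than sampling at random each time, guarantees even coverage in small batches, which is where random sampling tends to be lopsided.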

Step 2: Train AI with Diverse Data

AI systems are only as unbiased as the data they're trained on. When training data lacks diversity or includes stereotypes, AI models can replicate those biases. This isn't just a technical flaw - it reflects the biases embedded in the training data itself. As Dr. Ricardo Baeza-Yates, Director of Research at the Institute of Experiential AI at Northeastern University, explains:

"Bias is a mirror of the designers of the intelligent system, not the system itself."

This highlights why the quality and diversity of training data are crucial.

Take Amazon's experimental AI hiring tool, for example. The system penalized resumes that contained the word "women's" or referenced women's colleges because it was trained on a decade's worth of resumes, mostly from men. This led to a clear male bias. Similarly, Joy Buolamwini of MIT Media Lab found that commercial facial recognition systems had error rates as high as 35% for darker-skinned women, compared to less than 1% for lighter-skinned men. The issue? Training datasets that underrepresented women and people of color.

Beyond the ethical implications, using diverse data can improve business results. Companies with responsible AI strategies experience nearly 30% fewer AI system failures. Additionally, organizations that adopt a comprehensive approach to responsible AI can double their profits from AI initiatives. Despite this, only 35% of global consumers trust how businesses currently use AI.

Find Gaps in Your Data

To tackle these issues, the first step is to identify where your datasets fall short. Audit your datasets for sampling bias - instances where certain groups (by race, gender, age, or geography) are underrepresented. Benchmark datasets can help evaluate your AI's performance across different demographics. For instance:

- WinoBias: Tests for gender bias in pronoun (coreference) resolution.

- BBQ: Assesses fairness in question-answering.

- CrowS-Pairs: Detects patterns of stereotypes.

Fairness metrics can also quantify bias. For example, the "80% rule" states that if the Disparate Impact metric (the ratio of favorable outcomes for unprivileged versus privileged groups) is less than 0.8, the model is likely biased. In February 2025, developer Noel Benji used the CrowS-Pairs dataset to analyze a sentiment analysis model, uncovering bias in 145 out of 300 examples - a bias rate of 48.33%.
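To make the 80% rule concrete, here is a minimal sketch of the Disparate Impact calculation. The outcome lists are made-up numbers for illustration, and the function names are hypothetical rather than taken from any library. The last line simply rechecks the arithmetic of the bias rate quoted above.

```python
def favorable_rate(outcomes):
    """Share of favorable outcomes, where 1 = favorable and 0 = unfavorable."""
    return sum(outcomes) / len(outcomes)


def disparate_impact(unprivileged, privileged):
    """Ratio of favorable-outcome rates: unprivileged group over privileged group."""
    return favorable_rate(unprivileged) / favorable_rate(privileged)


# Made-up example data: 30% favorable outcomes vs. 50% favorable outcomes.
unprivileged_outcomes = [1] * 30 + [0] * 70
privileged_outcomes = [1] * 50 + [0] * 50

di = disparate_impact(unprivileged_outcomes, privileged_outcomes)  # 0.3 / 0.5
fails_80_percent_rule = di < 0.8

# Bias rate from the figures quoted above: 145 flagged examples out of 300.
bias_rate_percent = 145 / 300 * 100
```

A ratio of 1.0 means both groups receive favorable outcomes at the same rate. Here the ratio is about 0.6, so the example would be flagged for a closer look.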

Open-source tools like IBM AI Fairness 360 (AIF360) and Microsoft Fairlearn can help identify imbalances in your training data before deployment. These tools provide insights to refine both your data and training process.

Add New Data Sources

Once you've identified gaps in your data, the next step is to address them. When data for underrepresented groups is scarce, synthetic data generation methods like SMOTE or GANs can help balance the dataset. Another approach is data reweighting, where sample weights are adjusted to give equal importance to different demographics, reducing bias toward overrepresented groups. Additionally, fairness constraints can be integrated into the model's training process, using metrics like Demographic Parity to promote equitable treatment across groups.
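Data reweighting is the simplest of these to illustrate. The sketch below assumes each training sample carries a group label and assigns weights inversely proportional to group size, so every group contributes the same total weight. The `balanced_sample_weights` helper and the group labels are hypothetical; how the weights are consumed depends on your training framework.

```python
from collections import Counter


def balanced_sample_weights(groups):
    """Weight each sample by n_samples / (n_groups * size_of_its_group).

    Every group then carries the same total weight, and the weights
    still sum to the number of samples.
    """
    counts = Counter(groups)
    n_samples, n_groups = len(groups), len(counts)
    return [n_samples / (n_groups * counts[g]) for g in groups]


# Made-up dataset: group "A" is overrepresented 8 to 2.
groups = ["A"] * 8 + ["B"] * 2
weights = balanced_sample_weights(groups)

total_weight_a = sum(w for w, g in zip(weights, groups) if g == "A")
total_weight_b = sum(w for w, g in zip(weights, groups) if g == "B")
```

Each sample from group A gets weight 0.625 and each sample from group B gets 2.5, so both groups end up with a total weight of 5.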

For media generation, curated and weighted lists of ethnicities, age groups, and professions can ensure balanced outputs. This approach helps counteract the AI's tendency to default to Western-coded or youthful subjects, creating a more inclusive content library.

Step 3: Add Human Review to Your Workflow

Even the most advanced AI systems can overlook subtle biases - something that human judgment is far better equipped to catch. AI struggles with interpreting cultural subtleties and navigating complex scenarios. On top of that, it can generate incorrect statistics or citations that must be verified by a human editor.

One high-profile example of this occurred when CNET had to issue corrections for 41 out of 77 AI-written articles. Mistakes like these can seriously harm a brand's reputation and erode consumer trust. The takeaway here isn’t to abandon AI altogether but to treat its output as a starting point - raw material that needs thorough human review before it’s ready for publication.

To address these challenges, you need a systematic approach to identify and correct biases that AI might miss.

Build a Bias Detection Checklist

A structured checklist can help editors identify common issues in AI-generated content. Using specialized AI writer tools can also streamline the initial drafting phase before these checks begin. Key areas to focus on include:

- Inclusivity: Avoid biased language, such as gendered terms like "manpower" or phrases that could be considered ableist.

- Representation: Ensure examples reflect a variety of regions, business sizes, and demographics.

- Accuracy: Cross-check all statistics and quotes against their original sources.

- Brand Alignment: Make sure the content aligns with your brand’s voice and reflects diverse perspectives.
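Parts of the inclusivity check can be pre-screened automatically before an editor reads the draft. The following is a rough sketch, not a complete solution: the term list is a hypothetical starter set, and a word match can only surface candidates for a human to judge in context.

```python
import re

# Hypothetical starter list mapping flagged terms to suggested alternatives.
FLAGGED_TERMS = {
    "manpower": "workforce",
    "chairman": "chairperson",
    "confined to a wheelchair": "wheelchair user",
}


def flag_terms(text, terms=FLAGGED_TERMS):
    """Return (position, matched text, suggestion) tuples, sorted by position."""
    findings = []
    for term, suggestion in terms.items():
        # Whole-word, case-insensitive match so "Chairman" is caught too.
        pattern = re.compile(r"\b" + re.escape(term) + r"\b", re.IGNORECASE)
        for match in pattern.finditer(text):
            findings.append((match.start(), match.group(0), suggestion))
    return sorted(findings)


draft = "We need more manpower. The Chairman agreed."
findings = flag_terms(draft)
```

The result lists each hit with its character position and a suggested replacement, which an editor can accept or reject.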

Another critical step is to look for "missing voices" in the content. Replace narrow or one-dimensional examples with more inclusive ones. This approach aligns with research showing that 73% of consumers are more likely to trust brands that use inclusive language.

For technical validation, consider tools like the Word Embedding Association Test (WEAT) to identify potential biases. You can also organize review sessions where multiple reviewers independently score content for bias, ensuring consistent detection across the board.
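One way to check whether independent reviewers are detecting bias consistently is an agreement statistic such as Cohen's kappa, which corrects raw agreement for the agreement expected by chance. This is a swapped-in technique for illustration, not something the reviewers' process requires, and the labels below are made up.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labeling the same items."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(counts_a) | set(counts_b)
    # Chance agreement: probability both raters pick the same label independently.
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)
    if expected == 1:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Made-up labels from two reviewers scoring the same ten pieces of content.
reviewer_1 = ["biased", "ok", "ok", "biased", "ok", "ok", "ok", "biased", "ok", "ok"]
reviewer_2 = ["biased", "ok", "ok", "ok", "ok", "ok", "ok", "biased", "ok", "biased"]

kappa = cohens_kappa(reviewer_1, reviewer_2)
```

The reviewers agree on 8 of 10 items, but because "ok" is the common label, chance alone would produce 58% agreement, so kappa comes out near 0.52. A low value suggests the checklist criteria need clearer definitions.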

Once any biases are flagged, enforce a human review process for every piece of content.

Make Human Review Mandatory

To safeguard your content, build a mandatory quality check into the CMS workflow that handles AI-generated content. This gate ensures that content cannot be published unless it meets specific criteria, such as completing the bias checklist and verifying citations. Taking this step helps protect your brand from reputational risks and potential legal issues.
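How such a gate looks depends entirely on your CMS, so the following is only a sketch of the logic. The `Draft` class, its fields, and the check names are hypothetical; the check names mirror the four checklist areas described earlier.

```python
from dataclasses import dataclass, field

# Hypothetical check names, mirroring the four checklist areas.
REQUIRED_CHECKS = ("inclusivity", "representation", "accuracy", "brand_alignment")


@dataclass
class Draft:
    title: str
    checklist: dict = field(default_factory=dict)  # check name -> True once signed off
    citations_verified: bool = False
    reviewer: str = ""  # the human who approved the draft


def publish_blockers(draft):
    """Return the reasons a draft cannot be published; an empty list means it can."""
    blockers = [
        f"checklist item not signed off: {check}"
        for check in REQUIRED_CHECKS
        if not draft.checklist.get(check)
    ]
    if not draft.citations_verified:
        blockers.append("citations not verified")
    if not draft.reviewer:
        blockers.append("no human reviewer assigned")
    return blockers


incomplete = Draft(title="Launch post", checklist={"inclusivity": True})
complete = Draft(
    title="Launch post",
    checklist={check: True for check in REQUIRED_CHECKS},
    citations_verified=True,
    reviewer="editor@example.com",
)
```

Returning a list of reasons, rather than a bare yes or no, lets the CMS show editors exactly what is still missing.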

Establish clear roles for each stage of the review process. Assign responsibilities for drafting, editing for bias and accuracy, and giving final approval. As Ana Gotter of Search Engine Land aptly puts it:

"AI is an incredibly helpful drafting tool, but it has its limitations... it can't reliably fact-check its own hallucinations, understand nuance in particularly complex scenarios, or catch subtle errors that can erode reader trust."

Use the insights gained from these reviews to refine your AI prompts. Over time, this feedback loop will improve the quality of AI-generated content and reduce the amount of editing required.

Step 4: Run Regular Bias Audits

Relying solely on human review won’t catch all biases in your AI content generator tools. A systematic approach is essential to regularly test for bias, especially as AI models can degrade over time due to changes in real-world data - a phenomenon known as data drift. This means that an AI system performing well at launch could start generating biased outputs months later if left unchecked.

Aakash Yadav, QA Lead at Testriq QA Lab, recommends conducting audits during deployment, after major algorithm updates, and bi-annually to monitor for data drift. For high-stakes workflows, automated monitoring pipelines are crucial. Yadav explains:

"Because AI learns from human-generated data, and human history contains bias, perfect objectivity is impossible. The goal of an audit is mitigation and transparency."

In addition to scheduled reviews, audits should be initiated whenever unusual content patterns emerge or when a significant update is made to the AI model. With regulatory frameworks increasingly requiring regular audits for AI applications, this isn’t just a best practice - it’s becoming a legal obligation. Regular bias audits ensure your models remain accurate and fair as they evolve.
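Spotting "unusual content patterns" can be partly automated. One possible approach, sketched below, compares how often each labeled attribute appears in recent outputs against a baseline period, using total variation distance. The labels, the sample data, and the threshold are all assumptions for illustration; a sensible threshold has to be chosen per use case.

```python
from collections import Counter


def shares(labels):
    """Fraction of items carrying each label."""
    counts = Counter(labels)
    return {label: count / len(labels) for label, count in counts.items()}


def total_variation_distance(baseline, current):
    """Half the sum of absolute differences in label shares (0 = identical, 1 = disjoint)."""
    p, q = shares(baseline), shares(current)
    return 0.5 * sum(abs(p.get(k, 0) - q.get(k, 0)) for k in set(p) | set(q))


# Made-up labels: who was depicted in generated images at launch vs. this month.
baseline_labels = ["woman"] * 50 + ["man"] * 50
current_labels = ["woman"] * 30 + ["man"] * 70

DRIFT_THRESHOLD = 0.1  # hypothetical trigger level

drift = total_variation_distance(baseline_labels, current_labels)
needs_audit = drift > DRIFT_THRESHOLD
```

In this example the share of women depicted fell from 50% to 30%, giving a distance of about 0.2, which exceeds the threshold and would trigger an unscheduled audit.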

Use Bias Testing Tools

During audits, use bias testing tools to measure and address the issues you find. Several open-source toolkits are available to help evaluate and mitigate bias in AI outputs. For instance:

- IBM's AI Fairness 360 (AIF360) offers more than 70 fairness metrics, such as statistical parity difference and equal opportunity difference, along with 10 mitigation algorithms to address bias during various stages of the AI lifecycle.

- Microsoft's Fairlearn emphasizes that fairness in AI goes beyond technical fixes, stating that "fairness of AI systems is about more than simply running lines of code".

For media content, Google's Fairness Testing Framework provides a four-step iterative process: understanding dataset context, identifying potential unfair bias, defining data requirements, and evaluating and mitigating issues. Additionally, Vision Language Models (VLMs) can analyze generated images and suggest diversification edits, such as adjusting hair textures or clothing styles to better reflect various cultural backgrounds.

Each context requires a tailored approach. For example, a hiring algorithm might focus on ensuring equal opportunity, while a media recommendation system could aim for demographic parity.
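The difference between these two goals is easier to see in code. The sketch below computes both metrics in plain Python on made-up predictions. The function names follow common terminology but are written here for illustration rather than taken from a toolkit. Both are computed as the unprivileged group's rate minus the privileged group's rate, so 0 means parity.

```python
def rate(values):
    """Mean of a list of 0/1 values; 0.0 for an empty list."""
    return sum(values) / len(values) if values else 0.0


def statistical_parity_difference(y_pred, group, unprivileged, privileged):
    """Difference in selection rates, ignoring ground truth (demographic parity)."""
    selected = lambda g: [p for p, grp in zip(y_pred, group) if grp == g]
    return rate(selected(unprivileged)) - rate(selected(privileged))


def equal_opportunity_difference(y_true, y_pred, group, unprivileged, privileged):
    """Difference in true positive rates: among truly positive cases, who is selected?"""
    tpr = lambda g: rate(
        [p for t, p, grp in zip(y_true, y_pred, group) if grp == g and t == 1]
    )
    return tpr(unprivileged) - tpr(privileged)


# Made-up data: four people in group "a", four in group "b".
group = ["a"] * 4 + ["b"] * 4
y_true = [1, 1, 0, 0, 1, 1, 0, 0]  # who actually qualifies
y_pred = [1, 0, 0, 0, 1, 1, 1, 0]  # who the model selects

spd = statistical_parity_difference(y_pred, group, "a", "b")
eod = equal_opportunity_difference(y_true, y_pred, group, "a", "b")
```

Here group "a" is selected 25% of the time versus 75% for group "b", and qualified members of "a" are selected half as often as qualified members of "b", so both metrics come out at -0.5.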

Document Your Audit Results

Thorough documentation of audit findings is key to improving your AI system and ensuring transparency. Tools like Data Cards or Model Cards can help create structured summaries that outline your AI system’s intended use, known fairness concerns, and essential details. These records not only support continuous improvement but also serve as a proactive measure against regulatory scrutiny.

Be specific when documenting instances of bias. For text-based outputs, note cases where demographic terms are paired with negative stereotypes. For image-based outputs, record patterns of underrepresentation or stereotypical portrayals of specific groups. This level of detail allows you to track recurring issues and assess the effectiveness of your mitigation efforts over time.
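A structured record makes findings easier to compare across audits. The fields below are one hypothetical layout, not a standard schema, and the example values (including the date) are invented.

```python
import json
from dataclasses import dataclass, asdict
from datetime import date


@dataclass
class BiasFinding:
    audit_date: str       # ISO date of the audit
    modality: str         # "text" or "image"
    affected_group: str
    description: str      # what pattern was observed
    example_prompt: str   # a prompt that reproduces it
    mitigation: str       # what was changed in response
    status: str = "open"  # "open" until the fix is verified


finding = BiasFinding(
    audit_date=date(2025, 1, 15).isoformat(),  # illustrative date
    modality="image",
    affected_group="women",
    description="'business leader' prompts produced only men across 20 generations",
    example_prompt="Create an image of a business leader",
    mitigation="Added rotated gender and age attributes to the prompt template",
)

# Serialize for an audit log or a Model Card appendix.
record = json.dumps(asdict(finding), indent=2)
```

Keeping a reproducing prompt in each record lets a later audit rerun it and confirm whether the mitigation held.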

Share these audit results with your team - data scientists, legal experts, and domain specialists - to ensure bias is tackled from multiple perspectives. Keeping an ongoing audit trail that outlines the steps taken to address bias can be invaluable, particularly if regulatory or legal challenges arise.

Step 5: Get Feedback and Set Guidelines

Even the most thorough audits can overlook subtle biases, which is why feedback from real users is so important. Mary Kryska, EY Americas AI and Data Responsible AI Leader, highlights the importance of:

"conducting data audits, re-evaluating algorithms and grounding decisions in societal contexts".

This underscores the need to involve diverse perspectives and establish clear guidelines to ensure AI accountability over time.

Collect Feedback from Different Groups

Gathering input from a wide range of users is vital. Create diverse teams and set up direct feedback channels to identify potential issues. Homogeneous groups may miss key biases or fail to address the needs of underrepresented users.

In May 2025, Gannett introduced an ethical AI policy that included disclaimers for AI-generated summaries and a reader feedback form. Articles with AI-generated summaries saw 40% more time spent on page. Jessica Davis, Vice President of News Automation and AI Product, explained:

"We learned that articles with AI-generated summaries received 40% more time spent on page compared to the same article without a summary. It does not deter people from reading the article, but rather encourages them to dive in."

Using Vision Language Models (VLMs) and other AI content tools can also aid in identifying and suggesting edits to diversify content, such as moving away from Western-centric defaults in visuals or narratives. When real user demographic data is limited, synthetic data libraries can help run bias assessments across various groups.

These steps not only improve your content but also provide valuable insights for shaping governance rules.

Create AI Governance Rules

In addition to regular audits and reviews, formal governance rules are essential for maintaining fairness. Bias audits are increasingly required under laws like NYC Local Law 144, Colorado SB 24-205, and the EU AI Act. Even if your organization isn’t bound by these laws yet, voluntary standards like ISO/IEC 42001 and the US NIST AI RMF offer reliable frameworks for demonstrating responsible AI practices.

A strong governance framework should include clear rules for bias detection, accountability, oversight, privacy compliance, staff training, and risk assessment. A critical component is the human-in-the-loop (HITL) protocol, where humans verify facts, ensure brand voice consistency, and confirm legal compliance before publication.

Integrate bias detection into your CI/CD pipelines or CMS workflows to halt content that doesn’t meet fairness standards. Document every identified bias, your plan to address it, and any trade-offs made. FairNow emphasizes:

"bias audits are a way to build trust with stakeholders and demonstrate responsible AI practices".

Sharing the audit process itself matters too, as it shows:

"that you're transparent about sharing this process broadly".

This documentation is especially important when dealing with regulatory scrutiny or building public trust. In fact, a 2023 survey found that 72% of Americans were concerned about AI being used unfairly in decision-making processes.

Conclusion

Reducing bias in AI content is an ongoing process that benefits from a clear, five-step approach: crafting precise prompts, training on diverse datasets, incorporating human oversight, conducting regular audits, and gathering feedback to establish governance rules.

This method highlights how collaboration between technical and editorial teams is essential. While AI developers provide the tools and algorithms, editorial teams bring the cultural awareness and nuanced judgment that machines simply can't replicate. Agnes Stenbom, Head of IN/LAB at Schibsted, emphasizes this point:

"Don't let existing shortcomings in terms of diversity in your organization limit the way you approach AI; be creative and explore new interdisciplinary team setups to reap the potential and manage the risks of AI."

Ethical AI isn't just about doing the right thing - it also delivers measurable business results. For instance, 82% of Americans care deeply about AI ethics, and over 90% of consumers say that brand transparency influences their purchasing decisions. Gannett's approach to AI-generated summaries is a great example: by being transparent, they saw a 40% increase in time spent on their pages.

As explored throughout this guide, reducing bias improves both the quality of your content and the trust it earns. Building diverse teams and using varied data sources isn't about meeting quotas - it's about addressing blind spots that homogeneous groups might overlook. Different perspectives and life experiences help identify and correct biases others might miss.

The benefits of reducing bias, both ethical and financial, are clear. Start with a single step today to create AI content that's fairer and more trustworthy.

FAQs

How can I quickly spot bias in AI-generated text or images?

To spot bias in AI-generated text or images, start by checking for unbalanced representation, stereotypes, or any signs of cultural insensitivity. In text, watch for demographic terms paired with negative stereotypes. In images, look for patterns of underrepresentation or stereotypical portrayals of specific groups. Testing the same prompt across several scenarios makes these default patterns easier to see, since biased portrayals often result from biased training data.

What’s the simplest way to add diversity to prompts without overcomplicating them?

To make prompts more inclusive without making them overly complex, include specific and intentional descriptors that encourage diverse representation. Focus on details like physical characteristics, gender identities, age groups, or body types using thoughtful language. For instance, you could say, "a group of people that includes seniors, young adults, and children" or "individuals with a variety of ethnic backgrounds and physical traits." This approach keeps prompts clear while promoting inclusivity.

How often should I audit AI outputs for bias and data drift?

Keeping an eye on AI outputs is crucial to ensure they remain accurate and fair. There is no universal rule for how often this should be done, but the schedule described in Step 4 is a reasonable baseline: audit at deployment, after major algorithm updates, and bi-annually, with extra audits whenever unusual content patterns emerge. These evaluations are especially important as models are exposed to new data or applied to different use cases.

By regularly assessing AI systems, you can catch issues like bias or data drift early, ensuring the system continues to perform reliably. Consistent monitoring plays a big role in maintaining the trustworthiness of AI models over time.